I. Master Node Initialization

1. On Master01, create the kubeadm-config.yaml configuration file with the following content

$ vim kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta2
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: 7t2weq.bjbawausm0jaxury
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.1.31 # IP address of Master01
  bindPort: 6443
nodeRegistration:
  criSocket: /run/containerd/containerd.sock
  name: k8s-master01
  taints:
  - effect: NoSchedule
    key: node-role.kubernetes.io/master
---
apiServer:
  certSANs:
  - 192.168.1.38 # VIP address, or the load balancer address on a public cloud
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta2
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controlPlaneEndpoint: 192.168.1.38:16443
controllerManager: {}
dns:
  type: CoreDNS
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
kind: ClusterConfiguration
kubernetesVersion: v1.23.17 # must match the installed kubeadm version
networking:
  dnsDomain: cluster.local
  podSubnet: 172.16.0.0/12
  serviceSubnet: 10.0.0.0/16
scheduler: {}
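The configuration requires kubernetesVersion to match the installed kubeadm. A minimal sketch of how that could be checked before initializing is shown here; the function names config_k8s_version and check_kubeadm_version are hypothetical helpers, not part of kubeadm, and the sketch assumes the key appears once in the file.

```shell
#!/bin/sh
# Print the kubernetesVersion value from a kubeadm config file.
# awk splits on whitespace, so a trailing "# comment" is ignored.
config_k8s_version() {
  awk '$1 == "kubernetesVersion:" { print $2; exit }' "$1"
}

# Compare the configured version with the installed kubeadm binary.
# `kubeadm version -o short` prints a bare version string such as v1.23.17.
check_kubeadm_version() {
  want=$(config_k8s_version "$1")
  have=$(kubeadm version -o short)
  if [ "$want" = "$have" ]; then
    echo "OK: config and kubeadm are both $have"
  else
    echo "MISMATCH: config has $want, kubeadm is $have" >&2
    return 1
  fi
}
```

It could be called as `check_kubeadm_version kubeadm-config.yaml` on Master01 before running the later steps.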

2. On Master01, migrate the kubeadm configuration file to the API version supported by the installed kubeadm

$ kubeadm config migrate --old-config kubeadm-config.yaml --new-config new.yaml

3. On Master01, copy new.yaml to the other master nodes

$ for i in k8s-master02 k8s-master03; do scp new.yaml $i:/root/; done

4. On all master nodes, pull the images in advance to shorten initialization (the other nodes need no changes to new.yaml, not even the IP address)

$ kubeadm config images pull --config /root/new.yaml

5. On all nodes, enable kubelet so that it starts at boot

$ systemctl enable --now kubelet

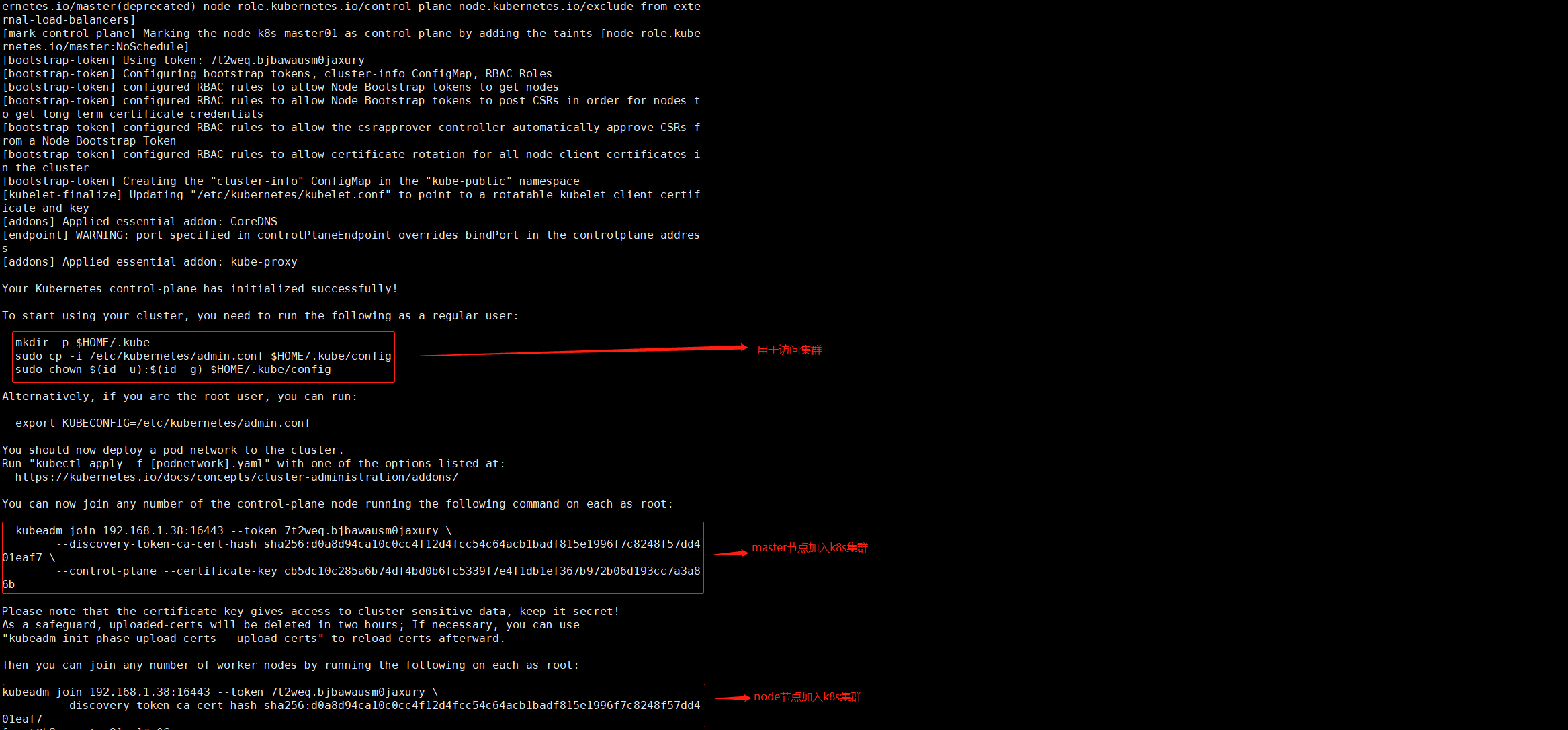

6. Initialize Master01. Initialization generates the certificates and configuration files under /etc/kubernetes; afterwards the other master nodes only need to join Master01

$ kubeadm init --config /root/new.yaml --upload-certs

Note:

If initialization fails, reset the node and initialize again with the command below (do not run it if initialization succeeded)

$ kubeadm reset -f ; ipvsadm --clear ; rm -rf ~/.kube

7. On Master01, set up the kubeconfig file used to access the Kubernetes cluster

$ mkdir -p $HOME/.kube

$ sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

$ sudo chown $(id -u):$(id -g) $HOME/.kube/config

# Check node status

$ kubectl get node

NAME           STATUS     ROLES                  AGE    VERSION
k8s-master01   NotReady   control-plane,master   4m5s   v1.23.17

II. Adding Master and Worker Nodes to the Cluster

1. Join Master02 and Master03 to the cluster

$ kubeadm join 192.168.1.38:16443 --token 7t2weq.bjbawausm0jaxury \
    --discovery-token-ca-cert-hash sha256:d0a8d94ca10c0cc4f12d4fcc54c64acb1badf815e1996f7c8248f57dd401eaf7 \
    --control-plane --certificate-key cb5dc10c285a6b74df4bd0b6fc5339f7e4f1db1ef367b972b06d193cc7a3a86b

2. Join Node01 and Node02 to the cluster

$ kubeadm join 192.168.1.38:16443 --token 7t2weq.bjbawausm0jaxury \
    --discovery-token-ca-cert-hash sha256:d0a8d94ca10c0cc4f12d4fcc54c64acb1badf815e1996f7c8248f57dd401eaf7
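The bootstrap token in the configuration has a 24h TTL, and the certificates uploaded by --upload-certs are kept for only about two hours, so the join commands above stop working if nodes are added later. A rough sketch of regenerating them on Master01 follows; the two function names are hypothetical, and the sketch assumes the certificate key is the last line printed by the upload-certs phase.

```shell
#!/bin/sh
# Print a fresh worker join command (this creates a new bootstrap token).
worker_join_command() {
  kubeadm token create --print-join-command
}

# Print a fresh control-plane join command: re-upload the control-plane
# certificates, take the certificate key from the last output line, and
# append it to the worker join command.
control_plane_join_command() {
  key=$(kubeadm init phase upload-certs --upload-certs | tail -n 1)
  echo "$(worker_join_command) --control-plane --certificate-key $key"
}
```

Run `worker_join_command` or `control_plane_join_command` on Master01 and execute the printed command on the node that should join.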

3. On Master01, check node status

$ kubectl get node

NAME           STATUS     ROLES                  AGE     VERSION
k8s-master01   NotReady   control-plane,master   7m11s   v1.23.17
k8s-master02   NotReady   control-plane,master   2m28s   v1.23.17
k8s-master03   NotReady   control-plane,master   102s    v1.23.17
k8s-node01     NotReady   <none>                 106s    v1.23.17
k8s-node02     NotReady   <none>                 84s     v1.23.17

III. Installing Calico

1. On Master01, check out the matching branch and enter the calico directory

$ cd /root/k8s-ha-install && git checkout manual-installation-v1.23.x && cd calico/

2. Extract the Pod subnet and assign it to a variable

$ POD_SUBNET=`cat /etc/kubernetes/manifests/kube-controller-manager.yaml | grep cluster-cidr= | awk -F= '{print $NF}'`

3. Replace the POD_CIDR placeholder in calico.yaml with the Pod subnet

$ sed -i "s#POD_CIDR#${POD_SUBNET}#g" calico.yaml
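To make steps 2 and 3 easier to follow, here is a small sketch that runs the same grep/awk and sed pipelines on inline sample text instead of the real files. The function names are hypothetical, and the sample lines only imitate what the manifest and calico.yaml are assumed to contain.

```shell
#!/bin/sh
# Read a kube-controller-manager manifest on stdin and print the value of
# its --cluster-cidr flag (the same grep/awk pipeline as step 2).
extract_cluster_cidr() {
  grep 'cluster-cidr=' | awk -F= '{print $NF}'
}

# Replace every POD_CIDR placeholder on stdin with the given subnet.
# "#" is used as the sed delimiter because the CIDR itself contains "/".
fill_pod_cidr() {
  sed "s#POD_CIDR#$1#g"
}

# Demo on sample text: prints   value: "172.16.0.0/12"
subnet=$(printf '    - --cluster-cidr=172.16.0.0/12\n' | extract_cluster_cidr)
printf 'value: "POD_CIDR"\n' | fill_pod_cidr "$subnet"
```

If the extracted variable is empty, the sed step would blank out the placeholder, so it is worth echoing the variable before modifying calico.yaml.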

4. Install Calico

$ kubectl apply -f calico.yaml

5. Check node status

$ kubectl get node

NAME           STATUS   ROLES                  AGE   VERSION
k8s-master01   Ready    control-plane,master   9h    v1.23.17
k8s-master02   Ready    control-plane,master   9h    v1.23.17
k8s-master03   Ready    control-plane,master   9h    v1.23.17
k8s-node01     Ready    <none>                 9h    v1.23.17
k8s-node02     Ready    <none>                 9h    v1.23.17
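Nodes usually take a little while to move from NotReady to Ready after the network plugin is applied. The following sketch polls until every node reports Ready; not_ready_nodes and wait_for_nodes are hypothetical helper names, and the 10-second interval is an arbitrary choice.

```shell
#!/bin/sh
# Read `kubectl get node --no-headers` output on stdin and print the names
# of nodes whose STATUS column is anything other than exactly "Ready"
# (so "Ready,SchedulingDisabled" is reported as well).
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Poll up to $1 times (default 30), 10 seconds apart, until no node is
# left in the not-ready list. Returns 1 if the attempts run out.
wait_for_nodes() {
  tries=${1:-30}
  while [ "$tries" -gt 0 ]; do
    pending=$(kubectl get node --no-headers | not_ready_nodes)
    [ -z "$pending" ] && return 0
    echo "still waiting for: $pending"
    tries=$((tries - 1))
    sleep 10
  done
  return 1
}
```

Calling `wait_for_nodes` on Master01 returns as soon as all nodes are Ready.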

6. Check Pod status; all Pods should be Running

$ kubectl get po -n kube-system

NAME                                       READY   STATUS    RESTARTS      AGE
calico-kube-controllers-6f6595874c-tntnr   1/1     Running   0             8m52s
calico-node-5mj9g                          1/1     Running   1 (41s ago)   8m52s
calico-node-hhjrv                          1/1     Running   2 (61s ago)   8m52s
calico-node-szjm7                          1/1     Running   0             8m52s
calico-node-xcgwq                          1/1     Running   0             8m52s
calico-node-ztbkj                          1/1     Running   1 (11s ago)   8m52s
calico-typha-6b6cf8cbdf-8qj8z              1/1     Running   0             8m52s
coredns-65c54cc984-nrhlg                   1/1     Running   0             9h
coredns-65c54cc984-xkx7w                   1/1     Running   0             9h
etcd-k8s-master01                          1/1     Running   1 (29m ago)   9h
etcd-k8s-master02                          1/1     Running   1 (29m ago)   9h
etcd-k8s-master03                          1/1     Running   1 (29m ago)   9h
kube-apiserver-k8s-master01                1/1     Running   1 (29m ago)   9h
kube-apiserver-k8s-master02                1/1     Running   1 (29m ago)   9h
kube-apiserver-k8s-master03                1/1     Running   2 (29m ago)   9h
kube-controller-manager-k8s-master01       1/1     Running   2 (29m ago)   9h
kube-controller-manager-k8s-master02       1/1     Running   1 (29m ago)   9h
kube-controller-manager-k8s-master03       1/1     Running   1 (29m ago)   9h
kube-proxy-7rmrs                           1/1     Running   1 (29m ago)   9h
kube-proxy-bmqhr                           1/1     Running   1 (29m ago)   9h
kube-proxy-l9rqg                           1/1     Running   1 (29m ago)   9h
kube-proxy-nn465                           1/1     Running   1 (29m ago)   9h
kube-proxy-sghfb                           1/1     Running   1 (29m ago)   9h
kube-scheduler-k8s-master01                1/1     Running   2 (29m ago)   9h
kube-scheduler-k8s-master02                1/1     Running   1 (29m ago)   9h
kube-scheduler-k8s-master03                1/1     Running   1 (29m ago)   9h
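Scanning a listing of this size by eye is error-prone. As a rough sketch, the output can be filtered so that only problem Pods remain; unhealthy_pods is a hypothetical helper name, and the filter assumes the column order shown above (NAME, READY, STATUS).

```shell
#!/bin/sh
# Read `kubectl get po --no-headers` output on stdin and print every Pod
# that is not Running or whose READY column (ready/total) is not full.
# Pods of finished Jobs (STATUS Completed) would also be listed.
unhealthy_pods() {
  awk '{
    split($2, r, "/")
    if ($3 != "Running" || r[1] != r[2]) print $1, $2, $3
  }'
}
```

For example, `kubectl get po -n kube-system --no-headers | unhealthy_pods` prints nothing when every Pod is Running and fully ready.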

IV. Deploying Metrics Server

Recent Kubernetes releases collect system resource metrics through metrics-server, which reports the CPU and memory usage of nodes and Pods.

1. Copy front-proxy-ca.crt from Master01 to Node01 and Node02

$ scp /etc/kubernetes/pki/front-proxy-ca.crt k8s-node01:/etc/kubernetes/pki/front-proxy-ca.crt

$ scp /etc/kubernetes/pki/front-proxy-ca.crt k8s-node02:/etc/kubernetes/pki/front-proxy-ca.crt

2. On Master01, install metrics-server

$ cd /root/k8s-ha-install/kubeadm-metrics-server

$ kubectl create -f comp.yaml

3. On Master01, check the metrics-server deployment

$ kubectl get po -n kube-system -l k8s-app=metrics-server

NAME                              READY   STATUS    RESTARTS   AGE
metrics-server-5cf8885b66-jdjtb   1/1     Running   0          115s

4. On Master01, check node resource usage

$ kubectl top node

NAME           CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
k8s-master01   130m         0%     1019Mi          12%
k8s-master02   102m         0%     1064Mi          13%
k8s-master03   93m          0%     971Mi           12%
k8s-node01     45m          0%     541Mi           6%
k8s-node02     57m          0%     544Mi           6%

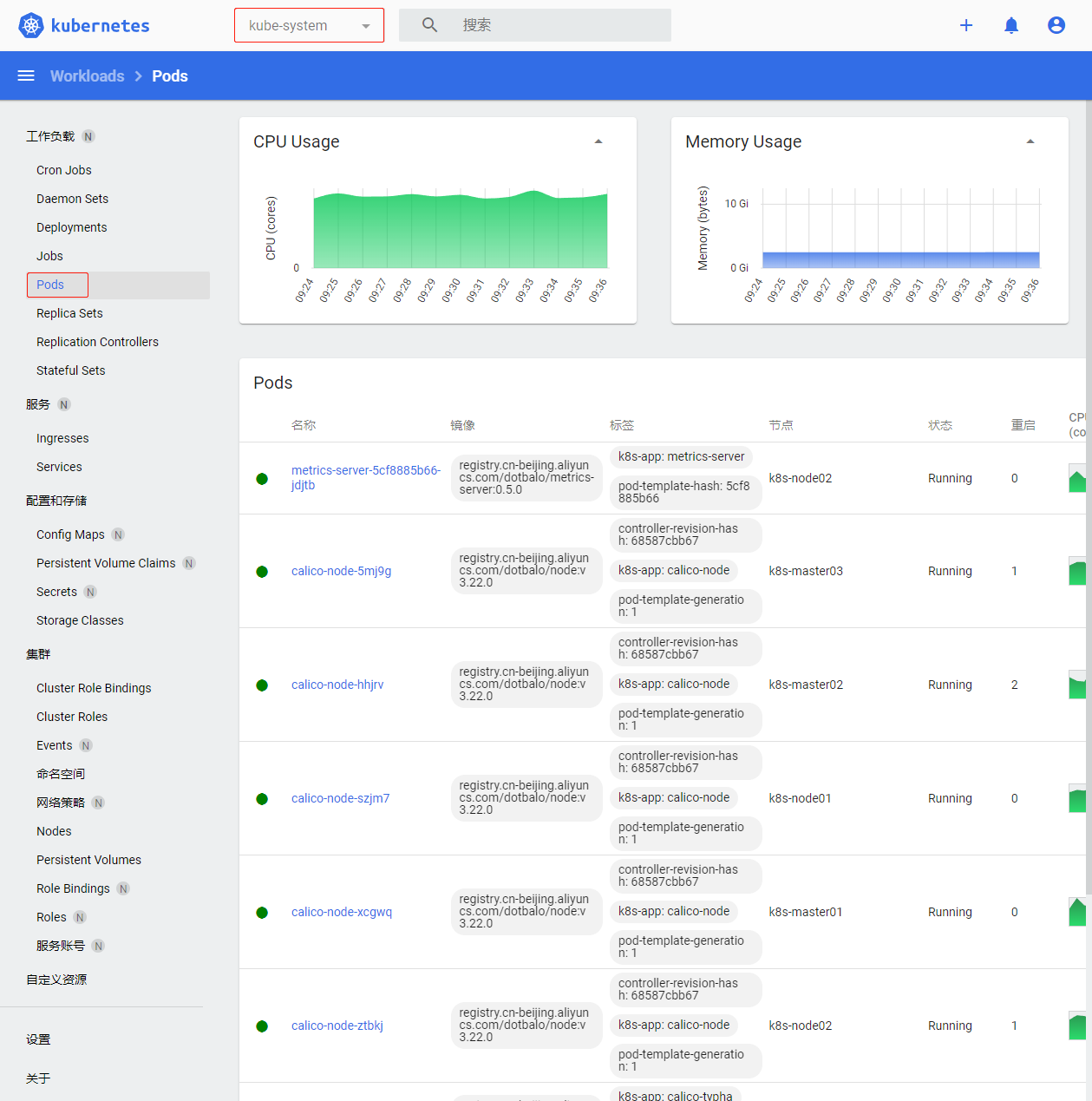

V. Deploying the Dashboard

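Dashboard is a web-based user interface for Kubernetes. You can use it to deploy containerized applications to a cluster, troubleshoot them, and manage cluster resources. It gives an overview of the applications running in the cluster and lets you create or modify Kubernetes resources (such as Deployments, Jobs, and DaemonSets). For example, you can scale a Deployment, start a rolling update, restart a Pod, or deploy a new application with the wizard. Dashboard also shows the state of the resources in the cluster and any errors that have occurred.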

1. On Master01, install the Dashboard

$ cd /root/k8s-ha-install/dashboard/

$ kubectl create -f .

2. On Master01, check the Dashboard services

$ kubectl get svc -n kubernetes-dashboard

NAME                        TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)         AGE
dashboard-metrics-scraper   ClusterIP   10.0.159.210   <none>        8000/TCP        2m6s
kubernetes-dashboard        NodePort    10.0.241.159   <none>        443:31822/TCP   2m6s

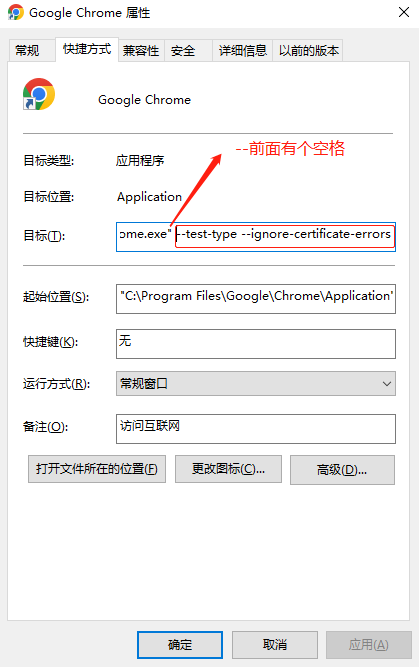

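3. Add launch flags to the Google Chrome shortcut to work around Chrome being unable to open the Dashboard (the flags make Chrome ignore certificate errors)

(1) Right-click the Google Chrome shortcut and choose [Properties]

(2) Append the flags below to the [Target] field. Note that a space must separate --test-type --ignore-certificate-errors from the path that precedes them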

--test-type --ignore-certificate-errors

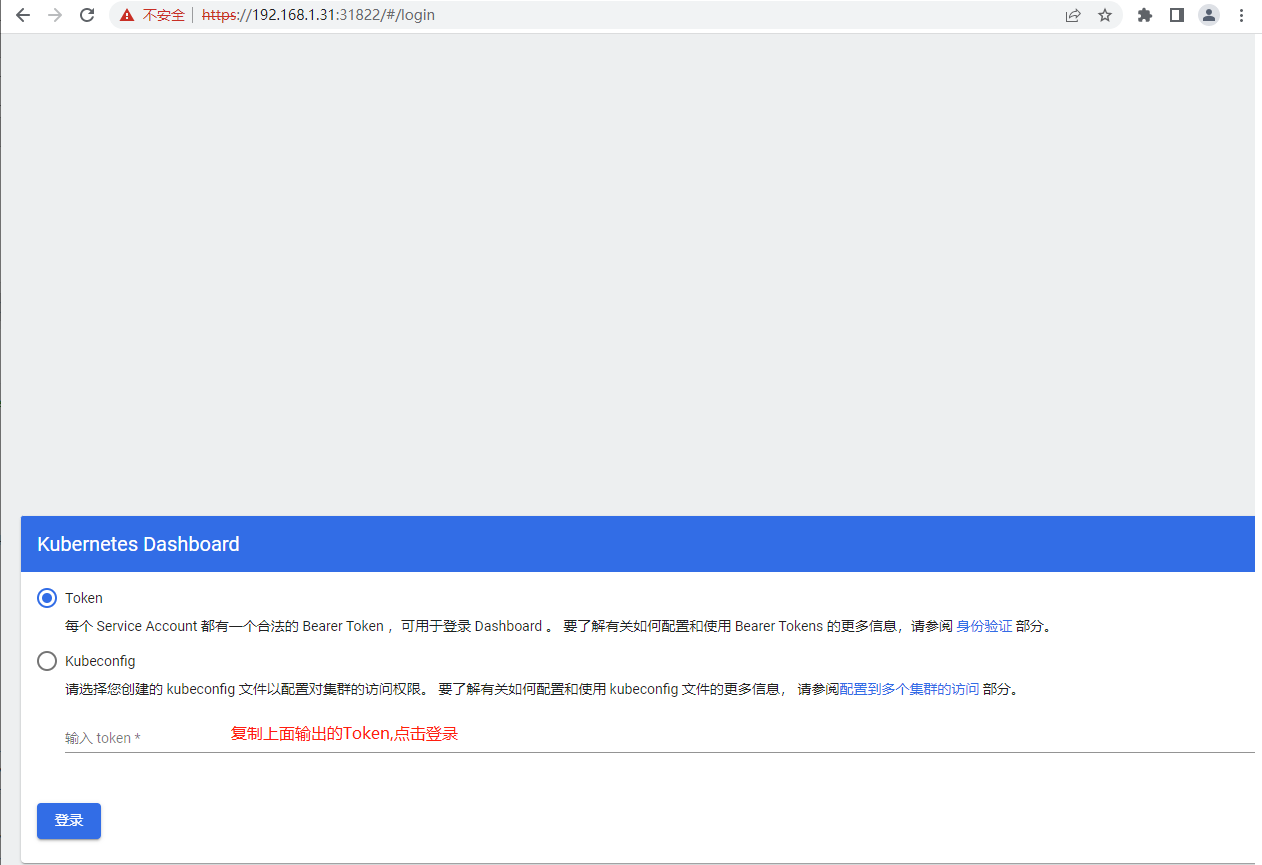

4. On Master01, look up the login token

$ kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')

Name: admin-user-token-8c67d

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: admin-user

kubernetes.io/service-account.uid: 4ac00d20-af02-4373-a050-748c42a7110a

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1099 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IjNia3dIcE1GVEJNMjBxeUt1ZzRwRzBfd3lLRGJGVTRVQmUzbzlQaW1BN28ifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLThjNjdkIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiI0YWMwMGQyMC1hZjAyLTQzNzMtYTA1MC03NDhjNDJhNzExMGEiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.VEW0f9YhSAjJl-WnA3B8mYA6gELTWQnTbjZPSFtU7CrPP4Nde6AU3PGJIoWs66cPKJ5AbhXkGx7HnZRF-3QlcmX5MVRf5JlZXn35rD3XB7bttGIkOcgxrrVVHeU_b_JOrrGnYRZAR1zogo3ueKGY_18YHOlSdUXFWZoV-i-cUux23ZDJvRq21QsqNiltUDAFy5HTlsEgAYh4Cx37gocZpJOvtKSzaz9RGNh4W6AFMXo0aMTHUyNTzeaw5-1D7fzOVPH-W12TvcveL1G3SMDMut6BEejDKqFBo6eCbE3sGR84iO0ojlgwm9UAGP7hQFKuUt7-gmmLTm7wuKM7V29VcA
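The describe output above mixes the token with other fields. One possible way to print only the token for pasting into the login page is sketched below; admin_user_token is a hypothetical helper name, and the sketch assumes the token Secret for the admin-user service account exists in kube-system as shown above and that `base64 -d` is available.

```shell
#!/bin/sh
# Print only the decoded bearer token of the admin-user service account.
admin_user_token() {
  # `-o name` prints entries such as secret/admin-user-token-8c67d
  secret=$(kubectl -n kube-system get secret -o name | grep admin-user | head -n 1)
  if [ -z "$secret" ]; then
    echo "no admin-user secret found in kube-system" >&2
    return 1
  fi
  # Secret data is stored base64-encoded, so decode it before printing.
  kubectl -n kube-system get "$secret" -o jsonpath='{.data.token}' | base64 -d
}
```

Because this token grants access to the cluster, avoid leaving it in shell history, logs, or published screenshots.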

5. Open Google Chrome and go to https://<any node IP>:<NodePort>; Master01 is used here as an example

https://192.168.1.31:31822
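The NodePort (31822 here) is assigned when the Service is created, so it will likely differ on another cluster. A small sketch of building the URL from the live Service follows; dashboard_url is a hypothetical helper name, and the sketch assumes the Service exposes a single port as in the output of step 2.

```shell
#!/bin/sh
# Print the Dashboard URL for a given node IP by reading the NodePort
# from the kubernetes-dashboard Service.
dashboard_url() {
  port=$(kubectl -n kubernetes-dashboard get svc kubernetes-dashboard \
    -o jsonpath='{.spec.ports[0].nodePort}')
  echo "https://$1:$port"
}
```

On the cluster shown in this guide, `dashboard_url 192.168.1.31` would print the address used above.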

6. Switch the namespace to kube-system; the default namespace contains no resources

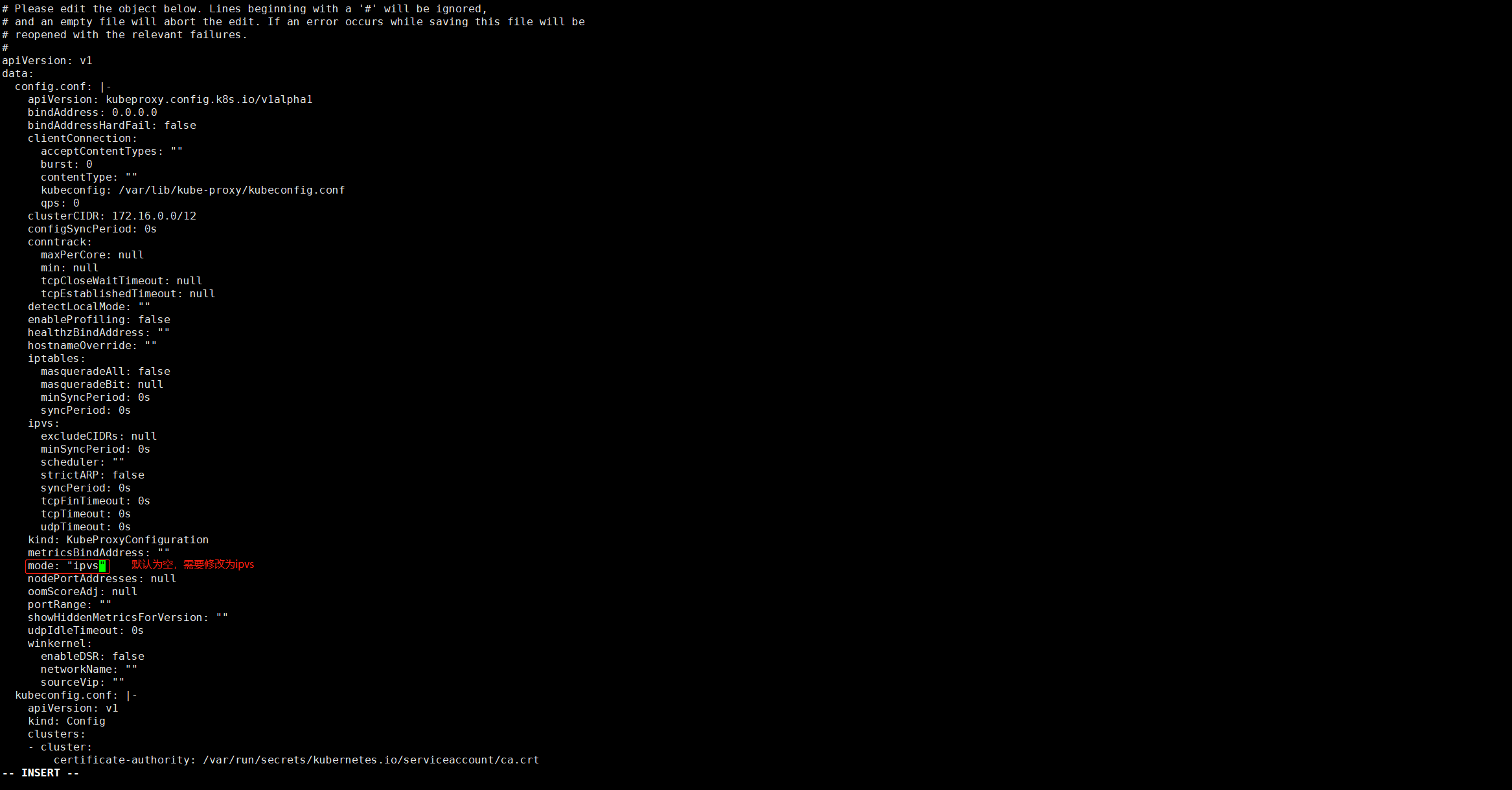

VI. Switching kube-proxy to IPVS Mode

1. On Master01, change the kube-proxy mode to ipvs by setting mode: "ipvs" in the kube-proxy ConfigMap (the default is iptables)

$ kubectl edit cm kube-proxy -n kube-system
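For readers who prefer not to edit the ConfigMap interactively, the change amounts to rewriting a single line. A minimal sketch, assuming the ConfigMap contains a line of the form `mode: ""` as kubeadm normally generates it (set_ipvs_mode is a hypothetical helper name):

```shell
#!/bin/sh
# Rewrite the kube-proxy "mode:" line on stdin to ipvs, keeping its
# indentation. Keys that merely end in "mode", such as detectLocalMode,
# are left untouched because the match is anchored at the line start.
set_ipvs_mode() {
  sed 's/^\( *\)mode:.*/\1mode: "ipvs"/'
}

# Demo on sample text: only the middle line changes.
printf '    metricsBindAddress: ""\n    mode: ""\n    nodePortAddresses: null\n' | set_ipvs_mode
```

Against a live cluster it could be used as `kubectl -n kube-system get cm kube-proxy -o yaml | set_ipvs_mode | kubectl replace -f -`. Review the filtered YAML before replacing anything.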

2. On Master01, trigger a rolling restart of the kube-proxy Pods so that they pick up the change

$ kubectl patch daemonset kube-proxy -p "{\"spec\":{\"template\":{\"metadata\":{\"annotations\":{\"date\":\"`date +'%s'`\"}}}}}" -n kube-system

3. On Master01, check the kube-proxy rolling update

$ kubectl get po -n kube-system | grep kube-proxy

kube-proxy-2kz9g 1/1 Running 0 58s

kube-proxy-b54gh 1/1 Running 0 63s

kube-proxy-kclcc 1/1 Running 0 61s

kube-proxy-pv8gc 1/1 Running 0 59s

kube-proxy-xt52m 1/1 Running 0 56s

4. On Master01, verify the kube-proxy mode

$ curl 127.0.0.1:10249/proxyMode

ipvs

VII. kubectl Auto-completion

1. On Master01, enable kubectl auto-completion

$ source <(kubectl completion bash)

$ echo "source <(kubectl completion bash)" >> ~/.bashrc

2. On Master01, define a shorthand alias for kubectl

$ alias k=kubectl

$ complete -o default -F __start_kubectl k
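The alias and its completion hook above last only for the current shell, and running the echo from step 1 again appends a duplicate line to ~/.bashrc. One way to persist all three settings without duplicates is sketched below; append_once is a hypothetical helper name, not a standard command.

```shell
#!/bin/sh
# Append a line to a file only if that exact line is not already present.
# $1 = line to add, $2 = target file
append_once() {
  touch "$2"
  grep -qxF -- "$1" "$2" || printf '%s\n' "$1" >> "$2"
}

# Possible usage (left commented out so the sketch has no side effects):
# append_once 'source <(kubectl completion bash)' ~/.bashrc
# append_once 'alias k=kubectl' ~/.bashrc
# append_once 'complete -o default -F __start_kubectl k' ~/.bashrc
```

The order matters in ~/.bashrc: the completion script has to be sourced before the `complete` line, because it defines `__start_kubectl`.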