I. Installing the Base Components

1.1 Containerd as the Runtime

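If the Kubernetes version being installed is lower than 1.24, either Docker or Containerd can be used as the runtime. For 1.24 and later, use Containerd, because dockershim was removed from the kubelet in 1.24.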

1. On every machine, run the following command to install docker-ce-20.10. Note that installing Docker also installs Containerd.

$ yum install docker-ce-20.10.* docker-ce-cli-20.10.* -y

2. On every machine, run the following command to configure the kernel modules Containerd requires

$ cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf

overlay

br_netfilter

EOF

3. On every machine, run the following commands to load the modules

$ modprobe -- overlay

$ modprobe -- br_netfilter

4. On every machine, run the following command to configure the kernel parameters Containerd requires

$ cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

5. On every machine, run the following command to apply the kernel parameters

$ sysctl --system
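To confirm that the parameters from step 4 are actually live, each key in the conf file can be compared with the value the kernel reports. The following is a minimal sketch, not part of the original procedure: `check_sysctl_conf` is a hypothetical helper name, and the optional second argument (defaulting to `/proc/sys`) exists only so the function can be exercised against a test directory.

```shell
# Hypothetical helper: compare every "key = value" line in a sysctl conf file
# with the live value under /proc/sys (or another root given as $2).
check_sysctl_conf() (
  conf="$1"
  root="${2:-/proc/sys}"
  rc=0
  while IFS='=' read -r key want; do
    key=$(printf '%s' "$key" | tr -d '[:space:]')
    want=$(printf '%s' "$want" | tr -d '[:space:]')
    # Skip blank lines and comments.
    case "$key" in ''|'#'*) continue ;; esac
    # net.ipv4.ip_forward -> <root>/net/ipv4/ip_forward
    path="$root/$(printf '%s' "$key" | tr '.' '/')"
    if [ -r "$path" ]; then
      have=$(tr -d '[:space:]' < "$path")
    else
      have="missing"
    fi
    if [ "$have" = "$want" ]; then
      echo "OK       $key = $have"
    else
      echo "MISMATCH $key: want $want, have $have"
      rc=1
    fi
  done < "$conf"
  exit "$rc"
)

# Usage on a real host:
#   check_sysctl_conf /etc/sysctl.d/99-kubernetes-cri.conf
```

A `missing` result for the `net.bridge.*` keys usually means the br_netfilter module from step 3 is not loaded.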

6. On every machine, run the following commands to generate Containerd's default configuration file

$ mkdir -p /etc/containerd

$ containerd config default | tee /etc/containerd/config.toml

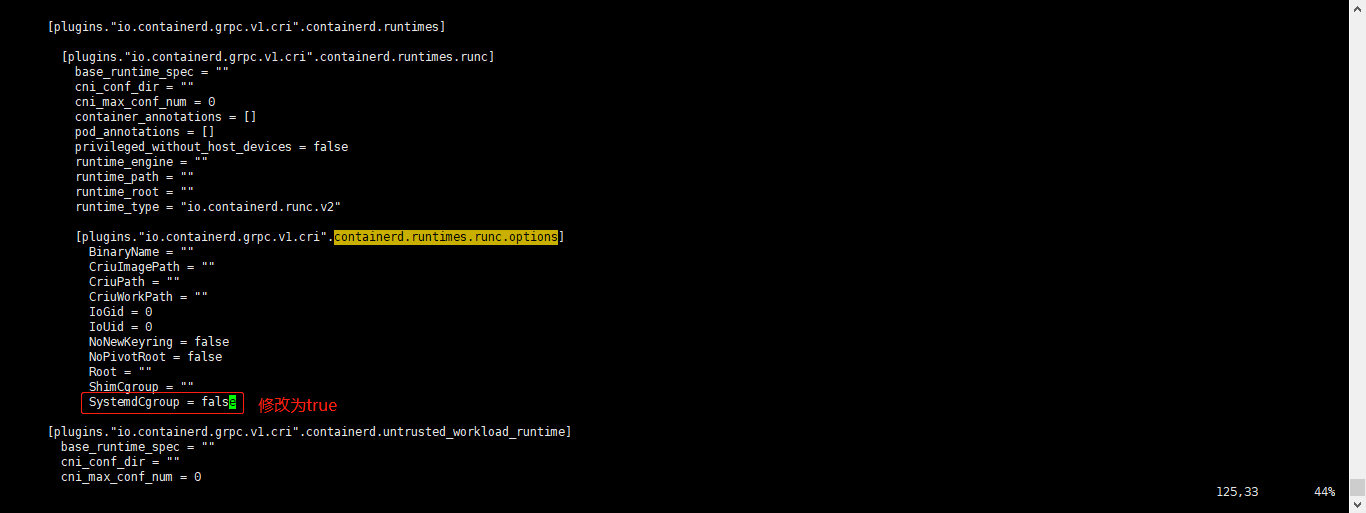

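7. On every machine, change Containerd's cgroup driver to systemd: find containerd.runtimes.runc.options and add SystemdCgroup = true (if the key already exists, edit it in place; adding a duplicate key causes an error)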

$ vim /etc/containerd/config.toml

...

...

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]

BinaryName = ""

CriuImagePath = ""

CriuPath = ""

CriuWorkPath = ""

IoGid = 0

IoUid = 0

NoNewKeyring = false

NoPivotRoot = false

Root = ""

ShimCgroup = ""

SystemdCgroup = true

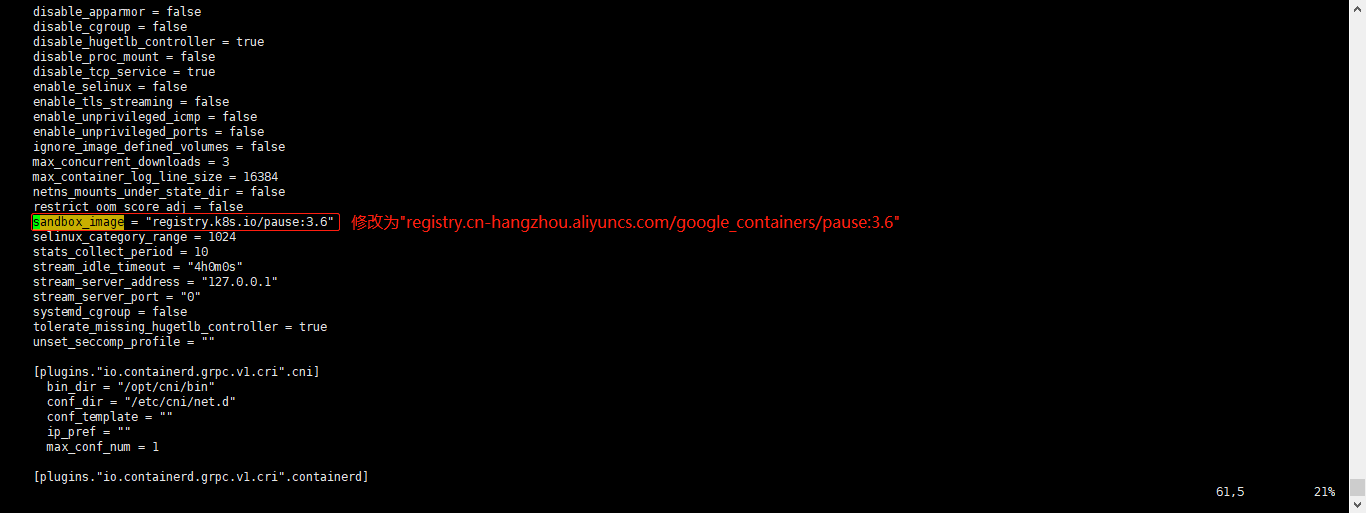
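For unattended installs, the manual vim edit in step 7 can be replaced by a sed command. The following is a minimal sketch that covers only the "key already exists" case described above; it does not add a missing key. `set_systemd_cgroup` is a hypothetical helper name, and `sed -i` is assumed to be GNU sed.

```shell
# Hypothetical helper: flip SystemdCgroup to true in a containerd config file.
# Assumes the key is already present; a missing key is reported, not added.
set_systemd_cgroup() {
  file="$1"
  # Fail loudly if the key is absent instead of silently doing nothing.
  grep -q '^[[:space:]]*SystemdCgroup[[:space:]]*=' "$file" || {
    echo "SystemdCgroup key not found in $file" >&2
    return 1
  }
  # Keep the original indentation and replace only the value.
  sed -i 's/^\([[:space:]]*\)SystemdCgroup[[:space:]]*=.*/\1SystemdCgroup = true/' "$file"
}

# Usage on a real host (back up the file first):
#   set_systemd_cgroup /etc/containerd/config.toml
```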

8. On every machine, change the Pause image in sandbox_image to an address that matches your version: registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6

$ vim /etc/containerd/config.toml

# Original content

sandbox_image = "registry.k8s.io/pause:3.6"

# Modified content

sandbox_image = "registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6"
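The same change can be scripted rather than made in vim. This is a sketch under the assumption that the file contains a single `sandbox_image = "..."` line; `set_sandbox_image` is a hypothetical helper name, and `sed -i` is assumed to be GNU sed.

```shell
# Hypothetical helper: point sandbox_image at a different pause image.
# Arguments: CONFIG_FILE IMAGE_REFERENCE
set_sandbox_image() {
  file="$1"
  image="$2"
  # '|' is the sed delimiter because image references contain '/'.
  sed -i "s|^\([[:space:]]*\)sandbox_image[[:space:]]*=.*|\1sandbox_image = \"$image\"|" "$file"
}

# Usage on a real host (back up the file first):
#   set_sandbox_image /etc/containerd/config.toml \
#     registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6
```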

9. On every machine, run the following commands to start Containerd and enable it at boot

$ systemctl daemon-reload

$ systemctl enable --now containerd

$ ls /run/containerd/containerd.sock

/run/containerd/containerd.sock

10. On every machine, run the following command to configure the runtime endpoint that the crictl client connects to

$ cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

11. On every machine, run the following command to verify the installation

$ ctr image ls

REF TYPE DIGEST SIZE PLATFORMS LABELS

1.2 Installing the Kubernetes Core Components and Etcd

1. On the Master01 node, download the Kubernetes package

$ wget https://dl.k8s.io/v1.23.17/kubernetes-server-linux-amd64.tar.gz

2. On the Master01 node, download the etcd package

$ wget https://github.com/etcd-io/etcd/releases/download/v3.4.1/etcd-v3.4.1-linux-amd64.tar.gz
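Before extracting the downloads, it can be worth checking them against a published digest. The sketch below is an optional addition, not part of the original procedure: `verify_sha512` is a hypothetical helper, and the expected digest has to be copied from the project's own release information (no digest is reproduced here).

```shell
# Hypothetical helper: compare a file's SHA-512 digest with an expected hex string.
# Arguments: FILE EXPECTED_HEX_DIGEST
verify_sha512() {
  file="$1"
  expected="$2"
  actual=$(sha512sum "$file" | awk '{print $1}')
  if [ "$actual" = "$expected" ]; then
    echo "OK: $file"
  else
    echo "CHECKSUM MISMATCH: $file" >&2
    return 1
  fi
}

# Usage (the digest placeholder must be replaced with the published value):
#   verify_sha512 kubernetes-server-linux-amd64.tar.gz "<published sha512>"
```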

3. On the Master01 node, extract the Kubernetes and etcd packages

$ tar -xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

$ tar -zxvf etcd-v3.4.1-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin etcd-v3.4.1-linux-amd64/etcd{,ctl}

4. On the Master01 node, check the kubelet and etcdctl versions

$ kubelet --version

Kubernetes v1.23.17

$ etcdctl version

etcdctl version: 3.4.1

API version: 3.5

5. On the Master01 node, send the components to the other nodes

$ MasterNodes='k8s-master02 k8s-master03'

$ WorkNodes='k8s-node01 k8s-node02'

$ for NODE in $MasterNodes; do echo $NODE; scp /usr/local/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy} $NODE:/usr/local/bin/; scp /usr/local/bin/etcd* $NODE:/usr/local/bin/; done

$ for NODE in $WorkNodes; do scp /usr/local/bin/kube{let,-proxy} $NODE:/usr/local/bin/ ; done

6. On the Master01 node, download the installation source files

$ git clone https://gitee.com/jeckjohn/k8s-ha-install.git

7. On all nodes, create the /opt/cni/bin directory

$ mkdir -p /opt/cni/bin

II. Generating Certificates

First, download the certificate generation tools (cfssl and cfssljson) on the Master01 node

$ wget "https://pkg.cfssl.org/R1.2/cfssl_linux-amd64" -O /usr/local/bin/cfssl

$ wget "https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64" -O /usr/local/bin/cfssljson

$ chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson

2.1 Generating the Etcd Certificates

1. On all Master nodes, create the etcd certificate directory

$ mkdir -p /etc/etcd/ssl

2. On all nodes, create the Kubernetes directories

$ mkdir -p /etc/kubernetes/pki

3. On the Master01 node, generate the etcd certificates

(1) On the Master01 node, switch to the 1.23.x branch

$ cd /root/k8s-ha-install && git checkout manual-installation-v1.23.x

(2) On the Master01 node, generate the etcd CA certificate

$ cd /root/k8s-ha-install/pki

$ cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /etc/etcd/ssl/etcd-ca

(3) On the Master01 node, generate the etcd certificate and key, signed by the etcd CA

$ cfssl gencert \

-ca=/etc/etcd/ssl/etcd-ca.pem \

-ca-key=/etc/etcd/ssl/etcd-ca-key.pem \

-config=ca-config.json \

-hostname=127.0.0.1,k8s-master01,k8s-master02,k8s-master03,192.168.1.31,192.168.1.32,192.168.1.33 \

-profile=kubernetes \

etcd-csr.json | cfssljson -bare /etc/etcd/ssl/etcd
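Every host name and IP address passed to `-hostname` should appear in the certificate as a Subject Alternative Name; a missing entry typically surfaces later as a TLS error between etcd members. The sketch below lists the SANs of a certificate so they can be compared with the `-hostname` list. `cert_sans` is a hypothetical helper name, and it relies on the text layout printed by `openssl x509 -text`.

```shell
# Hypothetical helper: print the Subject Alternative Names of a PEM
# certificate, one per line (for example "DNS:k8s-master01").
cert_sans() {
  openssl x509 -in "$1" -noout -text \
    | grep -A1 'Subject Alternative Name' \
    | tail -n 1 \
    | tr ',' '\n' \
    | sed 's/^[[:space:]]*//'
}

# Usage on the Master01 node:
#   cert_sans /etc/etcd/ssl/etcd.pem
```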

4. On the Master01 node, copy the certificates to the other Master nodes

$ MasterNodes='k8s-master02 k8s-master03'

$ WorkNodes='k8s-node01 k8s-node02'

$ for NODE in $MasterNodes; do

ssh $NODE "mkdir -p /etc/etcd/ssl"

for FILE in etcd-ca-key.pem etcd-ca.pem etcd-key.pem etcd.pem; do

scp /etc/etcd/ssl/${FILE} $NODE:/etc/etcd/ssl/${FILE}

done

done

2.2 Generating the Kubernetes Component Certificates

1. On the Master01 node, generate the Kubernetes CA certificate and the apiserver certificate

$ cd /root/k8s-ha-install/pki

$ cfssl gencert -initca ca-csr.json | cfssljson -bare /etc/kubernetes/pki/ca

$ cfssl gencert -ca=/etc/kubernetes/pki/ca.pem -ca-key=/etc/kubernetes/pki/ca-key.pem -config=ca-config.json -hostname=10.0.0.1,192.168.1.38,127.0.0.1,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.cluster,kubernetes.default.svc.cluster.local,192.168.1.31,192.168.1.32,192.168.1.33 -profile=kubernetes apiserver-csr.json | cfssljson -bare /etc/kubernetes/pki/apiserver

2. On the Master01 node, generate the apiserver aggregation (front-proxy) certificates

$ cd /root/k8s-ha-install/pki

$ cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-ca

$ cfssl gencert -ca=/etc/kubernetes/pki/front-proxy-ca.pem -ca-key=/etc/kubernetes/pki/front-proxy-ca-key.pem -config=ca-config.json -profile=kubernetes front-proxy-client-csr.json | cfssljson -bare /etc/kubernetes/pki/front-proxy-client

3. On the Master01 node, generate the certificates and kubeconfig files for controller-manager, scheduler, and admin

$ cd /root/k8s-ha-install/pki

$ cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

manager-csr.json | cfssljson -bare /etc/kubernetes/pki/controller-manager

$ kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.1.38:8443 \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

$ kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

$ kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/etc/kubernetes/pki/controller-manager.pem \

--client-key=/etc/kubernetes/pki/controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

$ kubectl config use-context system:kube-controller-manager@kubernetes \

--kubeconfig=/etc/kubernetes/controller-manager.kubeconfig

$ cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

scheduler-csr.json | cfssljson -bare /etc/kubernetes/pki/scheduler

$ kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/pki/ca.pem \

--embed-certs=true \

--server=https://192.168.1.38:8443 \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

$ kubectl config set-credentials system:kube-scheduler \

--client-certificate=/etc/kubernetes/pki/scheduler.pem \

--client-key=/etc/kubernetes/pki/scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

$ kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

$ kubectl config use-context system:kube-scheduler@kubernetes \

--kubeconfig=/etc/kubernetes/scheduler.kubeconfig

$ cfssl gencert \

-ca=/etc/kubernetes/pki/ca.pem \

-ca-key=/etc/kubernetes/pki/ca-key.pem \

-config=ca-config.json \

-profile=kubernetes \

admin-csr.json | cfssljson -bare /etc/kubernetes/pki/admin

$ kubectl config set-cluster kubernetes --certificate-authority=/etc/kubernetes/pki/ca.pem --embed-certs=true --server=https://192.168.1.38:8443 --kubeconfig=/etc/kubernetes/admin.kubeconfig

$ kubectl config set-credentials kubernetes-admin --client-certificate=/etc/kubernetes/pki/admin.pem --client-key=/etc/kubernetes/pki/admin-key.pem --embed-certs=true --kubeconfig=/etc/kubernetes/admin.kubeconfig

$ kubectl config set-context kubernetes-admin@kubernetes --cluster=kubernetes --user=kubernetes-admin --kubeconfig=/etc/kubernetes/admin.kubeconfig

$ kubectl config use-context kubernetes-admin@kubernetes --kubeconfig=/etc/kubernetes/admin.kubeconfig
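The three kubeconfig files above are built with the same four `kubectl config` calls, differing only in user name, certificate name, and output path. As a sketch of how the repetition could be folded into one function: `make_kubeconfig`, `APISERVER`, and `PKI_DIR` are hypothetical names introduced here, with defaults taken from the commands above.

```shell
# Hypothetical wrapper around the four kubectl config calls used above.
# Defaults mirror the API server address and PKI directory in this guide.
APISERVER="${APISERVER:-https://192.168.1.38:8443}"
PKI_DIR="${PKI_DIR:-/etc/kubernetes/pki}"

# Arguments: USER CERT_BASENAME KUBECONFIG_PATH
make_kubeconfig() {
  user="$1"; cert="$2"; out="$3"
  kubectl config set-cluster kubernetes \
    --certificate-authority="$PKI_DIR/ca.pem" \
    --embed-certs=true \
    --server="$APISERVER" \
    --kubeconfig="$out" &&
  kubectl config set-credentials "$user" \
    --client-certificate="$PKI_DIR/${cert}.pem" \
    --client-key="$PKI_DIR/${cert}-key.pem" \
    --embed-certs=true \
    --kubeconfig="$out" &&
  kubectl config set-context "${user}@kubernetes" \
    --cluster=kubernetes \
    --user="$user" \
    --kubeconfig="$out" &&
  kubectl config use-context "${user}@kubernetes" \
    --kubeconfig="$out"
}

# Usage, equivalent to the commands above:
#   make_kubeconfig system:kube-controller-manager controller-manager /etc/kubernetes/controller-manager.kubeconfig
#   make_kubeconfig system:kube-scheduler scheduler /etc/kubernetes/scheduler.kubeconfig
#   make_kubeconfig kubernetes-admin admin /etc/kubernetes/admin.kubeconfig
```

The certificates themselves still have to be generated with cfssl first, since `--embed-certs=true` reads them at the time the command runs.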

4. On the Master01 node, generate the ServiceAccount private and public keys

$ openssl genrsa -out /etc/kubernetes/pki/sa.key 2048

$ openssl rsa -in /etc/kubernetes/pki/sa.key -pubout -out /etc/kubernetes/pki/sa.pub
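A quick way to confirm that sa.pub really belongs to sa.key is to derive the public key again and compare it with the file on disk. The following is a minimal sketch; `sa_keypair_matches` is a hypothetical helper name.

```shell
# Hypothetical check: succeeds only if PUB holds the public half of KEY.
# Arguments: PRIVATE_KEY_FILE PUBLIC_KEY_FILE
sa_keypair_matches() {
  key="$1"; pub="$2"
  # Re-derive the public key from the private key (PEM on stdout).
  derived=$(openssl rsa -in "$key" -pubout 2>/dev/null) || return 1
  [ "$derived" = "$(cat "$pub")" ]
}

# Usage on the Master01 node:
#   sa_keypair_matches /etc/kubernetes/pki/sa.key /etc/kubernetes/pki/sa.pub && echo "key pair OK"
```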

5. On the Master01 node, send the certificates to the other Master nodes

$ for NODE in k8s-master02 k8s-master03; do

for FILE in $(ls /etc/kubernetes/pki | grep -v etcd); do

scp /etc/kubernetes/pki/${FILE} $NODE:/etc/kubernetes/pki/${FILE};

done;

for FILE in admin.kubeconfig controller-manager.kubeconfig scheduler.kubeconfig; do

scp /etc/kubernetes/${FILE} $NODE:/etc/kubernetes/${FILE};

done;

done

6. Verify the certificate files

$ ls /etc/kubernetes/pki/

admin.csr ca.csr front-proxy-ca.csr sa.key

admin-key.pem ca-key.pem front-proxy-ca-key.pem sa.pub

admin.pem ca.pem front-proxy-ca.pem scheduler.csr

apiserver.csr controller-manager.csr front-proxy-client.csr scheduler-key.pem

apiserver-key.pem controller-manager-key.pem front-proxy-client-key.pem scheduler.pem

apiserver.pem controller-manager.pem front-proxy-client.pem

$ ls /etc/kubernetes/pki/ | wc -l

23
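The count alone does not show which file is absent when it comes out below 23. The sketch below checks for each of the 23 names in the listing above and prints any that are missing; `check_pki_files` is a hypothetical helper name, and the directory argument defaults to /etc/kubernetes/pki.

```shell
# Hypothetical check: report files from the listing above that are missing in DIR.
# Exit status is 0 when all 23 files exist, 1 otherwise.
check_pki_files() (
  dir="${1:-/etc/kubernetes/pki}"
  missing=0
  # Seven cfssl outputs, each with a .csr, a -key.pem, and a .pem file.
  for name in admin apiserver ca controller-manager front-proxy-ca front-proxy-client scheduler; do
    for suffix in .csr -key.pem .pem; do
      [ -f "$dir/$name$suffix" ] || { echo "missing: $name$suffix"; missing=1; }
    done
  done
  # The ServiceAccount key pair generated with openssl.
  for f in sa.key sa.pub; do
    [ -f "$dir/$f" ] || { echo "missing: $f"; missing=1; }
  done
  exit "$missing"
)

# Usage on each Master node:
#   check_pki_files && echo "all 23 files present"
```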