1. Enable Prometheus metrics collection

1.1 Enable via Helm flags

Note: see the earlier chapters for how to deploy APISIX.

helm install apisix apisix/apisix \
  --set apisix.ssl.enabled=true \
  --set service.type=LoadBalancer \
  --set ingress-controller.enabled=true \
  --namespace ingress-apisix \
  --set dashboard.enabled=true \
  --set ingress-controller.config.apisix.serviceNamespace=ingress-apisix \
  --set timezone=Asia/Shanghai \
  --set apisix.prometheus.enabled=true

1.2 Edit values.yaml

[root@master01 ~]# cd /root/16/apisix/
[root@master01 apisix]# vim values.yaml
# Change line 472 to:
472 enabled: true

Full configuration file after the change:

[root@master01 apisix]# egrep -v "#|^$" values.yaml

global:
  imagePullSecrets: []
image:
  repository: registry.cn-hangzhou.aliyuncs.com/abroad_images/apisix
  pullPolicy: IfNotPresent
  tag: 3.8.0-debian
useDaemonSet: false
replicaCount: 1
priorityClassName: ""
podAnnotations: {}
podSecurityContext: {}
securityContext: {}
podDisruptionBudget:
  enabled: false
  minAvailable: 90%
  maxUnavailable: 1
resources: {}
hostNetwork: false
nodeSelector: {}
tolerations: []
affinity: {}
timezone: "Asia/Shanghai"
extraEnvVars: []
updateStrategy: {}
extraDeploy: []
extraVolumes: []
extraVolumeMounts: []
extraInitContainers: []
extraContainers: []
initContainer:
  image: registry.cn-hangzhou.aliyuncs.com/abroad_images/busybox
  tag: 1.28
autoscaling:
  enabled: false
  version: v2
  minReplicas: 1
  maxReplicas: 100
  targetCPUUtilizationPercentage: 80
  targetMemoryUtilizationPercentage: 80
nameOverride: ""
fullnameOverride: ""
serviceAccount:
  create: false
  annotations: {}
  name: ""
rbac:
  create: false
service:
  type: LoadBalancer
  externalTrafficPolicy: Cluster
  externalIPs: []
  http:
    enabled: true
    servicePort: 80
    containerPort: 9080
    additionalContainerPorts: []
  tls:
    servicePort: 443
  stream:
    enabled: false
    tcp: []
    udp: []
  labelsOverride: {}
ingress:
  enabled: false
  servicePort:
  annotations: {}
  hosts:
    - host: apisix.local
      paths: []
  tls: []
metrics:
  serviceMonitor:
    enabled: true
    namespace: "ingress-apisix"
    name: ""
    interval: 15s
    labels: {}
    annotations: {}
apisix:
  enableIPv6: true
  enableServerTokens: true
  setIDFromPodUID: false
  luaModuleHook:
    enabled: false
    luaPath: ""
    hookPoint: ""
    configMapRef:
      name: ""
      mounts:
        - key: ""
          path: ""
  ssl:
    enabled: true
    containerPort: 9443
    additionalContainerPorts: []
    existingCASecret: ""
    certCAFilename: ""
    http2:
      enabled: true
    sslProtocols: "TLSv1.2 TLSv1.3"
    fallbackSNI: ""
  router:
    http: radixtree_host_uri
  fullCustomConfig:
    enabled: false
    config: {}
  deployment:
    mode: traditional
    role: "traditional"
  admin:
    enabled: true
    type: ClusterIP
    externalIPs: []
    ip: 0.0.0.0
    port: 9180
    servicePort: 9180
    cors: true
    credentials:
      admin: <admin-api-key>
      viewer: <viewer-api-key>
      secretName: ""
    allow:
      ipList:
        - 127.0.0.1/24
    ingress:
      enabled: false
      annotations:
        {}
      hosts:
        - host: apisix-admin.local
          paths:
            - "/apisix"
      tls: []
  nginx:
    workerRlimitNofile: "20480"
    workerConnections: "10620"
    workerProcesses: auto
    enableCPUAffinity: true
    keepaliveTimeout: 60s
    envs: []
    logs:
      enableAccessLog: true
      accessLog: "/dev/stdout"
      accessLogFormat: '$remote_addr - $remote_user [$time_local] $http_host \"$request\" $status $body_bytes_sent $request_time \"$http_referer\" \"$http_user_agent\" $upstream_addr $upstream_status $upstream_response_time \"$upstream_scheme://$upstream_host$upstream_uri\"'
      accessLogFormatEscape: default
      errorLog: "/dev/stderr"
      errorLogLevel: "warn"
    configurationSnippet:
      main: |
      httpStart: |
      httpEnd: |
      httpSrv: |
      httpAdmin: |
      stream: |
    customLuaSharedDicts: []
  discovery:
    enabled: false
    registry: {}
  dns:
    resolvers:
      - 127.0.0.1
      - 172.20.0.10
      - 114.114.114.114
      - 223.5.5.5
      - 1.1.1.1
      - 8.8.8.8
    validity: 30
    timeout: 5
  vault:
    enabled: false
    host: ""
    timeout: 10
    token: ""
    prefix: ""
  prometheus:
    enabled: true
    path: /apisix/prometheus/metrics
    metricPrefix: apisix_
    containerPort: 9091
  plugins: []
  stream_plugins: []
  pluginAttrs: {}
  extPlugin:
    enabled: false
    cmd: ["/path/to/apisix-plugin-runner/runner", "run"]
  wasm:
    enabled: false
    plugins: []
  customPlugins:
    enabled: false
    luaPath: "/opts/custom_plugins/?.lua"
    plugins:
      - name: "plugin-name"
        attrs: {}
        configMap:
          name: "configmap-name"
          mounts:
            - key: "the-file-name"
              path: "mount-path"
externalEtcd:
  host:
    - http://etcd.host:2379
  user: root
  password: ""
  existingSecret: ""
  secretPasswordKey: "etcd-root-password"
etcd:
  enabled: true
  prefix: "/apisix"
  timeout: 30
  auth:
    rbac:
      create: false
      rootPassword: ""
    tls:
      enabled: false
      existingSecret: ""
      certFilename: ""
      certKeyFilename: ""
      verify: true
      sni: ""
  service:
    port: 2379
  replicaCount: 3
dashboard:
  enabled: true
  config:
    conf:
      etcd:
        endpoints:
          - apisix-etcd:2379
        prefix: "/apisix"
        username: ~
        password: ~
ingress-controller:
  enabled: true
  config:
    apisix:
      adminAPIVersion: "v3"
      serviceNamespace: "ingress-apisix"

Upgrade the release

[root@master01 apisix]# helm upgrade apisix /root/16/apisix \
  -n ingress-apisix \
  -f values.yaml

Verify

# The apisix pod restarts automatically (kgp appears to be a shell alias for kubectl get pods)
[root@master01 apisix]# kgp -n ingress-apisix | grep apisix-
apisix-944f5f799-8trxf                       1/1   Running   0               77s
apisix-dashboard-766d98954c-s7m6n            1/1   Running   3 (4h59m ago)   5h
apisix-etcd-0                                1/1   Running   0               4m31s
apisix-etcd-1                                1/1   Running   0               5m54s
apisix-etcd-2                                1/1   Running   0               7m4s
apisix-ingress-controller-559f594fc9-w8rhv   1/1   Running   0               5h

1.3 Test and verify

# Look up the apisix-prometheus-metrics Service
[root@master01 apisix]# kubectl get svc -ningress-apisix |grep prometheus
apisix-prometheus-metrics ClusterIP <metrics-service-ip> <none> 9091/TCP 2m15s

# Verify through the HTTP endpoint
[root@master01 apisix]# curl <metrics-service-ip>:9091/apisix/prometheus/metrics

# HELP apisix_etcd_modify_indexes Etcd modify index for APISIX keys

# TYPE apisix_etcd_modify_indexes gauge

apisix_etcd_modify_indexes{key="consumers"} 57

apisix_etcd_modify_indexes{key="global_rules"} 0

apisix_etcd_modify_indexes{key="max_modify_index"} 128

apisix_etcd_modify_indexes{key="prev_index"} 145

apisix_etcd_modify_indexes{key="protos"} 0

apisix_etcd_modify_indexes{key="routes"} 128

apisix_etcd_modify_indexes{key="services"} 0

apisix_etcd_modify_indexes{key="ssls"} 37

apisix_etcd_modify_indexes{key="stream_routes"} 0

apisix_etcd_modify_indexes{key="upstreams"} 91

apisix_etcd_modify_indexes{key="x_etcd_index"} 147

...

...
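When this check is scripted rather than read by eye, it can help to pull a single series out of the exposition text. The following is a minimal sketch, not part of the original procedure: `metric_value` is a hypothetical helper name, and it assumes each sample line has the form `name{labels} value` with no spaces inside the label set, as in the output above.

```shell
# Hypothetical helper: read Prometheus exposition text on stdin and print the
# value of one series. $1 is the full series name including labels, written
# exactly as it appears in the metrics output.
# Returns non-zero if the series is not present.
metric_value() {
  awk -v series="$1" '
    $1 == series { print $2; found = 1 }
    END { exit !found }
  '
}

# Example usage (address is the placeholder used in this section):
#   curl -s <metrics-service-ip>:9091/apisix/prometheus/metrics \
#     | metric_value 'apisix_etcd_modify_indexes{key="routes"}'
```

A non-zero exit status is a quick signal that the plugin is not exporting the series you expected.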

1.4 Expose the metrics Service through a route

# Define the resource
[root@master01 16]# vim metrics-ing.yaml
apiVersion: apisix.apache.org/v2
kind: ApisixRoute
metadata:
  name: metrics-route
  namespace: ingress-apisix
spec:
  http:
    - backends:
        - serviceName: apisix-prometheus-metrics
          servicePort: 9091
      match:
        hosts:
          - metrics.example.com
        paths:
          - /apisix/prometheus/metrics
      name: metrics-route

# Apply (kaf appears to be a shell alias for kubectl apply -f)
[root@master01 16]# kaf metrics-ing.yaml

# Inspect (kg appears to be a shell alias for kubectl get)
[root@master01 16]# kg -f metrics-ing.yaml
NAME            HOSTS                     URIS                             AGE
metrics-route   ["metrics.example.com"]   ["/apisix/prometheus/metrics"]   32s

Test and verify:

[root@master01 16]# curl metrics.example.com/apisix/prometheus/metrics

# HELP apisix_etcd_modify_indexes Etcd modify index for APISIX keys

# TYPE apisix_etcd_modify_indexes gauge

apisix_etcd_modify_indexes{key="consumers"} 57

apisix_etcd_modify_indexes{key="global_rules"} 0

apisix_etcd_modify_indexes{key="max_modify_index"} 149

apisix_etcd_modify_indexes{key="prev_index"} 145

apisix_etcd_modify_indexes{key="protos"} 0

apisix_etcd_modify_indexes{key="routes"} 149

apisix_etcd_modify_indexes{key="services"} 0

apisix_etcd_modify_indexes{key="ssls"} 37

apisix_etcd_modify_indexes{key="stream_routes"} 0

apisix_etcd_modify_indexes{key="upstreams"} 148

apisix_etcd_modify_indexes{key="x_etcd_index"} 149

...

...

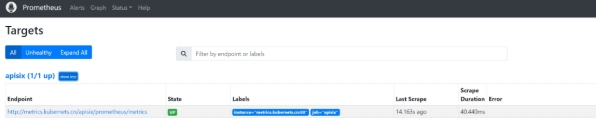

2. Configure Prometheus scraping

Add this URI to Prometheus as a scrape target so that it can pull the metrics. Example configuration:

# Add the following
########## apisix scrape job ##########
- job_name: "apisix"
  scrape_interval: 15s # This value is tied to the range used with the PromQL rate() function: the range should be at least twice this interval.
  metrics_path: "/apisix/prometheus/metrics"
  static_configs:
    - targets: [metrics.example.com]
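The relationship between the scrape interval and the `rate()` range can be made concrete with a small helper. This is a sketch only: `rate_expr` is a hypothetical function name, and `apisix_http_status` is used as an example counter on the assumption that your APISIX version exports it.

```shell
# Hypothetical helper: print a PromQL rate() expression whose range is a
# multiple of the scrape interval.
# $1: counter name, $2: scrape interval in seconds, $3: multiplier (default 2)
rate_expr() {
  local metric=$1
  local interval=$2
  local factor=${3:-2}
  printf 'rate(%s[%ds])\n' "$metric" "$(( interval * factor ))"
}

# With the 15s interval configured above, the smallest suggested range is 30s:
rate_expr apisix_http_status 15
```

A shorter range may contain fewer than two samples, in which case `rate()` returns no data; a larger multiplier gives more headroom for missed scrapes.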

# Full configuration file
[root@master01 ~]# cd /root/7
[root@master01 7]# cat prometheus-config.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitor
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      evaluation_interval: 15s
      external_labels:
        cluster: "kubernetes"
    ############ Alertmanager server address ###################
    alerting:
      alertmanagers:
        - static_configs:
            - targets: ["alertmanager:9093"]
    ############ Scrape jobs ###################
    scrape_configs:
      ########## prometheus ##########
      - job_name: prometheus
        static_configs:
          - targets: ['127.0.0.1:9090']
            labels:
              instance: prometheus
      ########## apisix ##########
      - job_name: "apisix"
        scrape_interval: 15s
        metrics_path: "/apisix/prometheus/metrics"
        static_configs:
          - targets: [metrics.example.com]
      ########## minio ##########
      - job_name: minio-job
        bearer_token: <prometheus-bearer-token>
        metrics_path: /minio/v2/metrics/cluster
        scheme: http
        static_configs:
          - targets: [s3.example.com]
      ########## kube-apiserver ##########
      - job_name: kube-apiserver
        kubernetes_sd_configs:
          - role: endpoints
        scheme: https
        tls_config:
          ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
          - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name]
            action: keep
            regex: default;kubernetes
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace
      ########## kube-controller-manager ##########
      - job_name: 'kube-controller-manager'
        # Use Kubernetes pod discovery
        kubernetes_sd_configs:
          - role: pod
        # Force HTTPS
        scheme: https
        # TLS settings (verification skipped for a test environment)
        tls_config:
          insecure_skip_verify: true
        # Authenticate with the ServiceAccount token
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
          # Keep only pods labeled component=kube-controller-manager
          - source_labels: [__meta_kubernetes_pod_label_component]
            regex: kube-controller-manager
            action: keep
          # Rewrite the target address to pod IP + port 10257
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: "${1}:10257"
          # Force HTTPS (redundant but explicit)
          - source_labels: []
            regex: .*
            target_label: __scheme__
            replacement: https
          # Attach metadata labels
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace
      ########## kube-scheduler ##########
      - job_name: 'kube-scheduler'
        kubernetes_sd_configs:
          - role: pod
        scheme: https
        tls_config:
          insecure_skip_verify: true
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
          - source_labels: [__meta_kubernetes_pod_label_component]
            regex: kube-scheduler
            action: keep
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: "${1}:10259"
          - source_labels: []
            regex: .*
            target_label: __scheme__
            replacement: https
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace
      ########## kube-state-metrics ##########
      - job_name: kube-state-metrics
        kubernetes_sd_configs:
          - role: endpoints
        relabel_configs:
          - source_labels: [__meta_kubernetes_service_name]
            regex: kube-state-metrics
            action: keep
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: ${1}:8080
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace
      ########## coredns ##########
      - job_name: coredns
        kubernetes_sd_configs:
          - role: endpoints
        relabel_configs:
          - source_labels:
              - __meta_kubernetes_service_label_k8s_app
            regex: kube-dns
            action: keep
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: ${1}:9153
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace
      ########## etcd ##########
      - job_name: etcd
        kubernetes_sd_configs:
          - role: pod
        relabel_configs:
          - source_labels:
              - __meta_kubernetes_pod_label_component
            regex: etcd
            action: keep
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: ${1}:2381
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace
      ########## kubelet ##########
      - job_name: kubelet
        metrics_path: /metrics/cadvisor
        scheme: https
        tls_config:
          insecure_skip_verify: true
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        kubernetes_sd_configs:
          - role: node
        relabel_configs:
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace
      ########## k8s nodes ##########
      - job_name: k8s-nodes
        kubernetes_sd_configs:
          - role: node
        relabel_configs:
          - source_labels: [__address__]
            regex: '(.*):10250'
            replacement: '${1}:9100'
            target_label: __address__
            action: replace
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace
      ########## DNS probes ##########
      - job_name: "kubernetes-dns"
        metrics_path: /probe # /probe, not /metrics
        params:
          module: [dns_tcp] # use the DNS TCP module
        static_configs:
          - targets:
              - kube-dns.kube-system:53 # do not omit the port
              - 8.8.4.4:53
              - 8.8.8.8:53
              - 223.5.5.5:53
        relabel_configs:
          - source_labels: [__address__]
            target_label: __param_target
          - source_labels: [__param_target]
            target_label: instance
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115 # exporter address; must match the blackbox-exporter Service definition
      ########## ICMP probes ##########
      - job_name: icmp-status
        metrics_path: /probe
        params:
          module: [icmp]
        static_configs:
          - targets:
              - <node-ip-1>
            labels:
              group: icmp
        relabel_configs:
          - source_labels: [__address__]
            target_label: __param_target
          - source_labels: [__param_target]
            target_label: instance
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115
      ########## HTTP probes ##########
      - job_name: 'kubernetes-services'
        metrics_path: /probe
        params:
          module: ## use the http_get_2xx and http_get_3xx modules
            - "http_get_2xx"
            - "http_get_3xx"
        kubernetes_sd_configs: ## Kubernetes dynamic discovery, Service role
          - role: service
        relabel_configs: ## probe only Services annotated with prometheus.io/http_probe: true
          - action: keep
            source_labels: [__meta_kubernetes_service_annotation_prometheus_io_http_probe]
            regex: "true"
          - action: replace
            source_labels:
              - "__meta_kubernetes_service_name"
              - "__meta_kubernetes_namespace"
              - "__meta_kubernetes_service_annotation_prometheus_io_http_probe_port"
              - "__meta_kubernetes_service_annotation_prometheus_io_http_probe_path"
            target_label: __param_target
            regex: (.+);(.+);(.+);(.+)
            replacement: $1.$2:$3$4
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115 ## Service address of the Blackbox Exporter
          - source_labels: [__param_target]
            target_label: instance
          - action: labelmap
            regex: __meta_kubernetes_service_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_service_name]
            target_label: kubernetes_name
      ########## TCP probes ##########
      - job_name: "service-tcp-probe"
        scrape_interval: 1m
        metrics_path: /probe
        # Use the tcp_connect probe from the blackbox exporter configuration
        params:
          module: [tcp_connect]
        kubernetes_sd_configs:
          - role: service
        relabel_configs:
          # Keep Services annotated with prometheus.io/scrape: "true" and prometheus.io/tcp-probe: "true"
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape, __meta_kubernetes_service_annotation_prometheus_io_tcp_probe]
            action: keep
            regex: true;true
          # Copy __meta_kubernetes_service_name into service_name
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            regex: (.*)
            target_label: service_name
          # Copy __meta_kubernetes_namespace into namespace
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            regex: (.*)
            target_label: namespace
          # Set the probe target (and instance) to `clusterIP:port`
          - source_labels: [__meta_kubernetes_service_cluster_ip, __meta_kubernetes_service_annotation_prometheus_io_http_probe_port]
            action: replace
            regex: (.*);(.*)
            target_label: __param_target
            replacement: $1:$2
          - source_labels: [__param_target]
            target_label: instance
          # Point __address__ at `blackbox-exporter.monitor:9115`
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115
      ########## Ingress probes ##########
      - job_name: 'blackbox-k8s-ingresses'
        scrape_interval: 30s
        scrape_timeout: 10s
        metrics_path: /probe
        params:
          module: [http_get_2xx] # use the HTTP module defined in the exporter
        kubernetes_sd_configs:
          - role: ingress # Ingress role discovery
        relabel_configs:
          # Discover only Ingresses annotated with prometheus.io/http_probe=true
          - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_http_probe]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path]
            regex: (.+);(.+);(.+)
            replacement: ${1}://${2}${3}
            target_label: __param_target
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115
          - source_labels: [__param_target]
            target_label: instance
          - action: labelmap
            regex: __meta_kubernetes_ingress_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_ingress_name]
            target_label: kubernetes_name
      ########## External domain probes ##########
      - job_name: "blackbox-external-website"
        scrape_interval: 30s
        scrape_timeout: 15s
        metrics_path: /probe
        params:
          module: [http_get_2xx]
        static_configs:
          - targets:
              - https://www.baidu.com # replace with your company's public-facing domains
              - https://www.jd.com
        relabel_configs:
          - source_labels: [__address__]
            target_label: __param_target
          - source_labels: [__param_target]
            target_label: instance
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115
      ########## Cloud ECS hosts ##########
      - job_name: 'other-ECS'
        static_configs:
          - targets: ['101.201.68.158:9100']
            labels:
              hostname: 'test-node-exporter'
      ########## Process exporter ##########
      - job_name: 'process-exporter'
        static_configs:
          - targets: ['<node-ip-2>:9256']
      ########## MySQL ##########
      - job_name: 'mysql-exporter'
        static_configs:
          - targets: ['<node-ip-2>:9104']
      ########## Consul ##########
      - job_name: consul
        honor_labels: true
        metrics_path: /metrics
        scheme: http
        consul_sd_configs: # Consul-based service discovery
          - server: <node-ip-2>:18500 # Consul listen address
            services: [] # match every service registered in Consul
        relabel_configs: # everything below rewrites labels
          - source_labels: ['__meta_consul_tags'] # copy __meta_consul_tags into servername
            target_label: 'servername'
          - source_labels: ['__meta_consul_dc'] # copy __meta_consul_dc into idc
            target_label: 'idc'
          - source_labels: ['__meta_consul_service']
            regex: "consul" # match the service named "consul"
            action: drop # and drop it
    ############ Alert rule file locations ###################
    rule_files:
      - /etc/prometheus/rules/*.rules

# Apply
[root@master01 7]# kaf prometheus-config.yaml

Hot-reload Prometheus:

[root@master01 7]# curl -XPOST prometheus.example.com/-/reload
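The `/-/reload` endpoint only works when Prometheus was started with `--web.enable-lifecycle`, so it is worth checking the HTTP status rather than assuming the reload happened. The wrapper below is a sketch under that assumption; `reload_prometheus` is a hypothetical name, and the URL is the placeholder used in this document.

```shell
# Hypothetical wrapper: POST to the Prometheus reload endpoint and succeed
# only if the server answers 200.
# $1: base URL of Prometheus, for example http://prometheus.example.com
reload_prometheus() {
  local base_url=$1
  local code
  # -o /dev/null discards the body; -w prints only the HTTP status code.
  code=$(curl -s -o /dev/null -w '%{http_code}' -X POST "${base_url}/-/reload") || return 1
  [ "$code" = "200" ]
}

# Example usage:
#   reload_prometheus http://prometheus.example.com && echo "reloaded"
```

Note that a ConfigMap update can take a short while to reach the mounted file inside the pod, so a reload issued immediately after applying may still read the old configuration.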

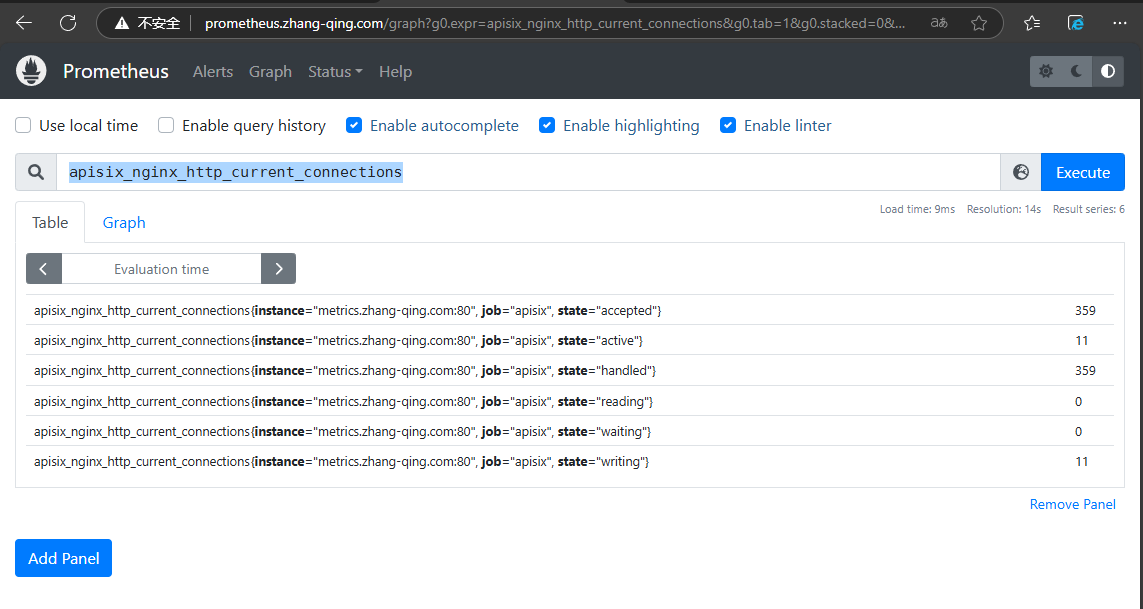

Query a metric:

# Enter the metric name, then click Execute

apisix_nginx_http_current_connections

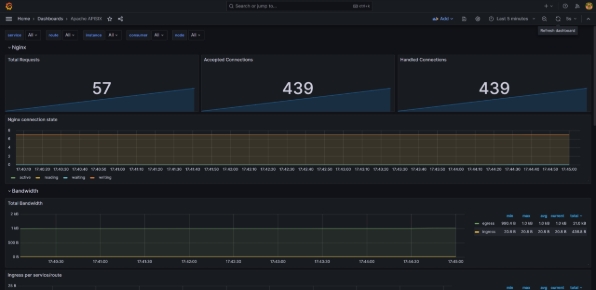

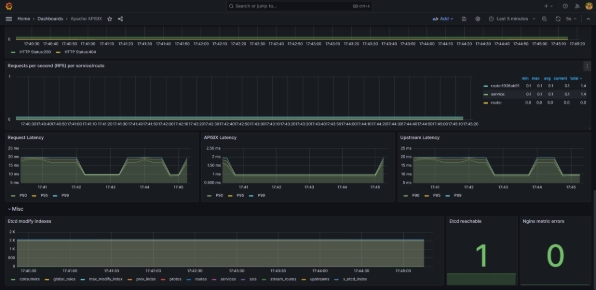

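3. Visualize the metrics in Grafana

Metrics exported by the prometheus plugin can be charted in Grafana.

To set this up, download the APISIX Grafana dashboard metadata and import it into Grafana.

The metadata can also be downloaded from the official Grafana dashboards site.

The dashboard template used here has ID 11719.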

4. APISIX Dashboard web UI

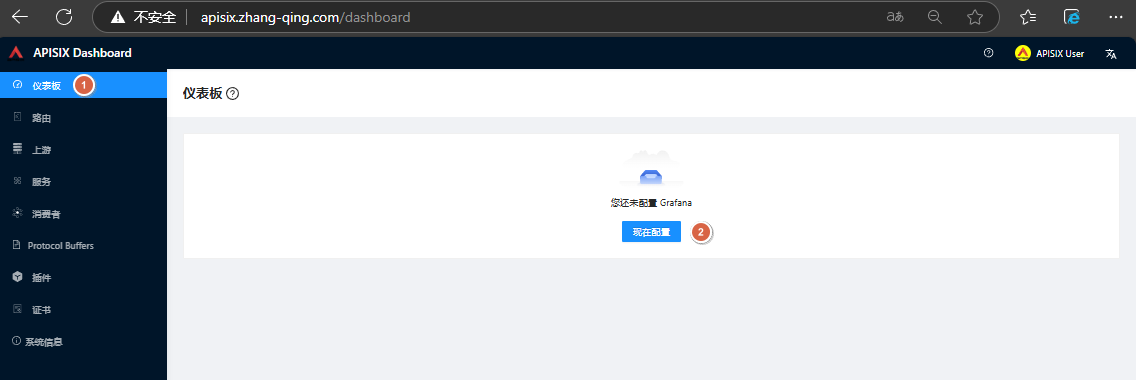

Open http://apisix.example.com/ in a browser; the username and password are both admin. Click [Dashboard] and then [Configure now].

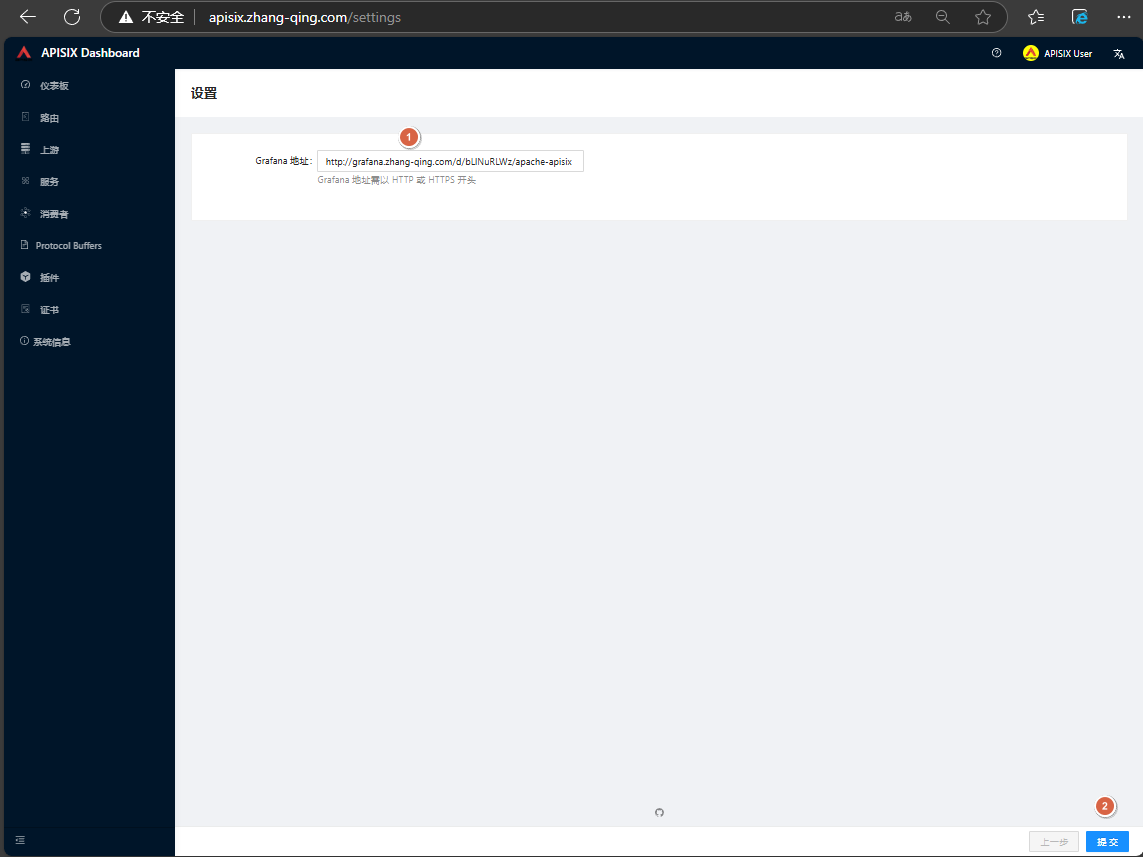

Enter the Grafana address http://grafana.example.com/d/bLlNuRLWz/apache-apisix and click [Submit].

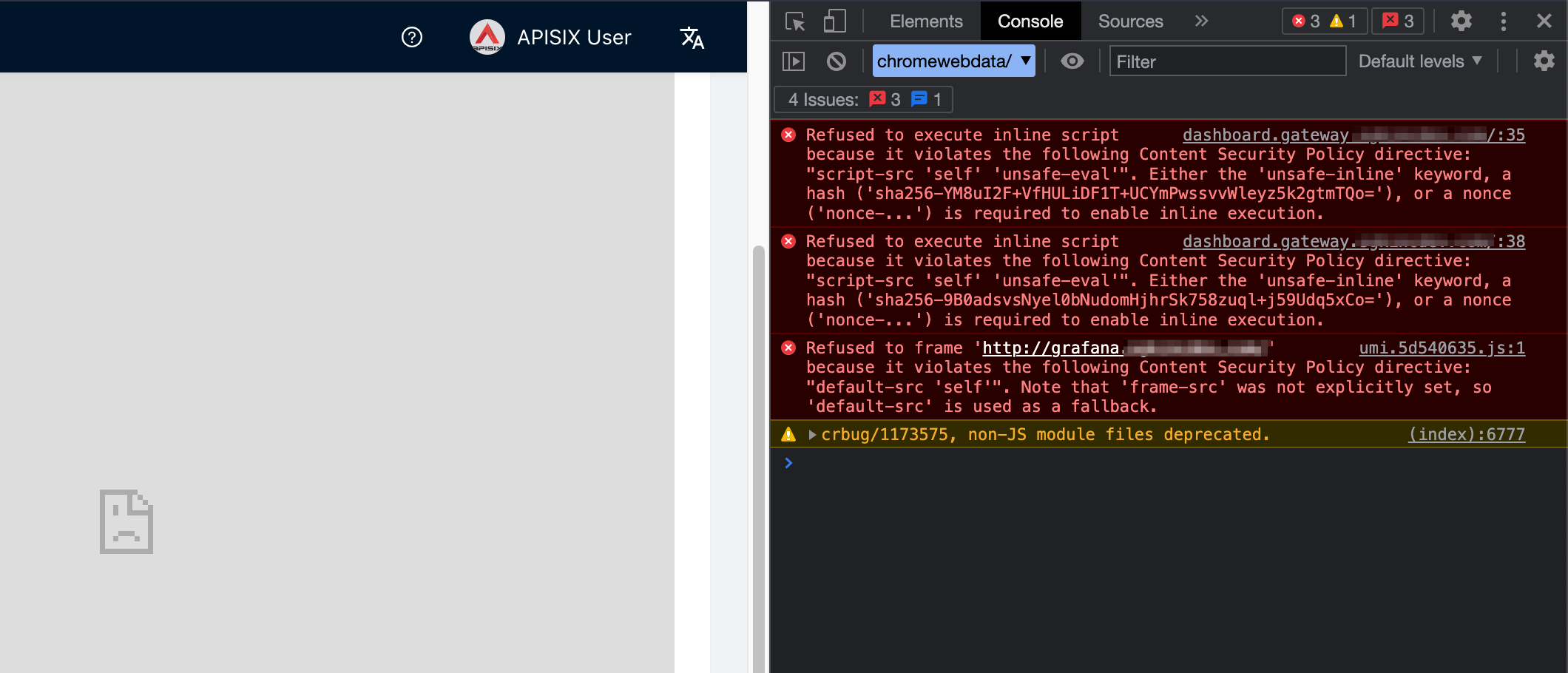

At this point the dashboard page is blank, because Grafana does not allow itself to be embedded in another page by default.

Edit grafana-config.yaml:

[root@master01 7]# vim grafana-config.yaml
# Add the following
[security]
allow_embedding = true

# Full configuration file
[root@master01 7]# cat grafana-config.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: grafana-config
  namespace: monitor
data:
  grafana.ini: |
    [server]
    root_url = http://grafana.example.com
    [smtp]
    enabled = true
    # Corporate mailboxes use smtp.exmail.qq.com:465; personal mailboxes use smtp.qq.com:465
    host = smtp.qq.com:465
    user = 1904763431@qq.com
    password = xdjdwczivdfpcbhj
    skip_verify = true
    from_address = 1904763431@qq.com
    [alerting]
    enabled = true
    execute_alerts = true
    [security]
    allow_embedding = true

Restart Grafana:

[root@master01 16]# kubectl rollout restart deploy grafana-core -n monitor

# Check
[root@master01 7]# kgp -n monitor | grep grafana-core

grafana-core-54c48855c9-vvbp5 1/1 Running 0 11m
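To confirm that `allow_embedding` took effect without opening the browser, one option is to inspect Grafana's response headers: with embedding disabled, Grafana sends `X-Frame-Options: deny`. The helper below is a sketch; `allows_embedding` is a hypothetical name, and it only looks at that one header.

```shell
# Hypothetical helper: read HTTP response headers on stdin and succeed only if
# no X-Frame-Options header forbids framing (deny or sameorigin).
allows_embedding() {
  ! grep -i '^x-frame-options:' | grep -iqE 'deny|sameorigin'
}

# Example usage (URL is the placeholder used in this document):
#   curl -sI http://grafana.example.com/login | allows_embedding \
#     && echo "embedding allowed"
```

If the header is still present after the restart, check that the ConfigMap was actually re-applied before the Deployment was restarted.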

Edit the apisix-dashboard ConfigMap:

[root@master01 16]# kubectl edit cm apisix-dashboard -ningress-apisix
apiVersion: v1
data:
  conf.yaml: |-
    conf:
      listen:
        host: 0.0.0.0
        port: 9000
      etcd:
        prefix: "/apisix"
        endpoints:
          - apisix-etcd:2379
      ## Add the following section
      security:
        access_control_allow_credentials: true # support using custom cors configuration
        access_control_allow_headers: "Authorization"
        access_control-allow_methods: "*"
        x_frame_options: "allow-from *"
        content_security_policy: "frame-src *"
...
...

Restart apisix-dashboard:

[root@master01 16]# kubectl rollout restart deploy apisix-dashboard -ningress-apisix

# Check
[root@master01 16]# kgp -ningress-apisix | grep apisix-dashboard
apisix-dashboard-5997fcf75-m6cnb 1/1 Running 0 2m18s

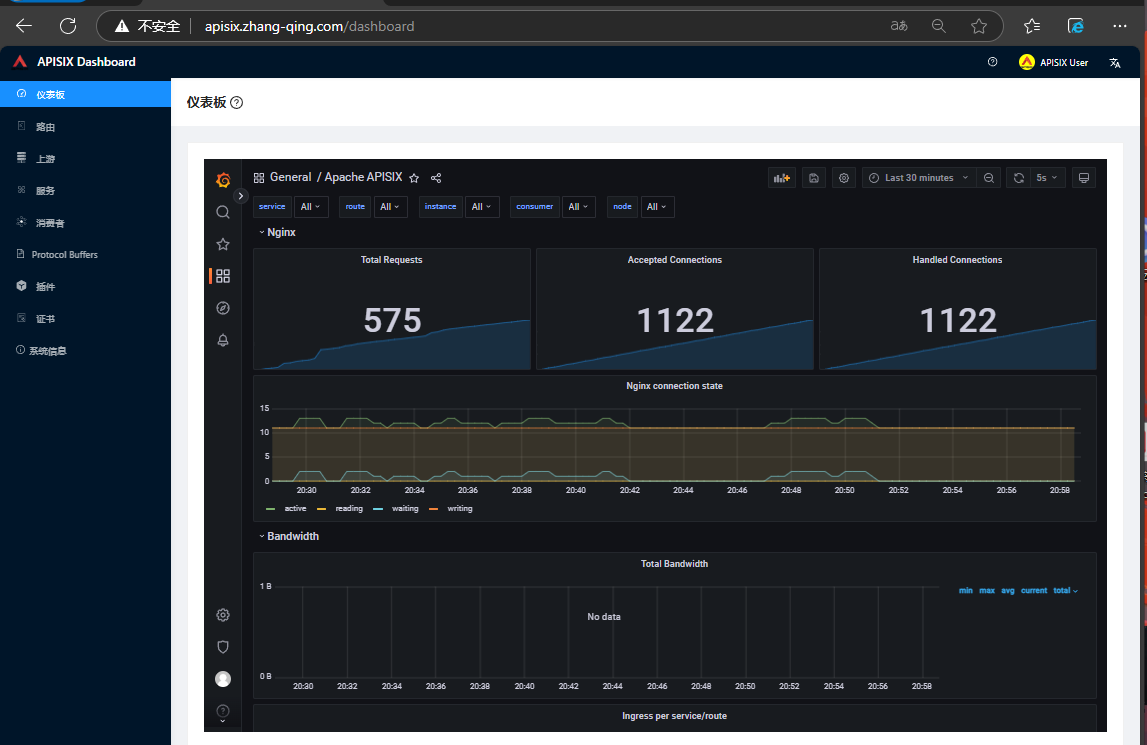

Refresh the page and test again; the dashboard now renders correctly.

Other problems you may encounter

Adding the Grafana address on the home page fails:

Fix: https://github.com/apache/apisix-dashboard/issues/2586

The fix is the same change as in the previous step: add the security section shown above to the apisix-dashboard ConfigMap (kubectl edit cm apisix-dashboard -ningress-apisix), then restart the apisix-dashboard Deployment (kubectl rollout restart deploy apisix-dashboard -ningress-apisix) and confirm that the new pod is Running.

Test and verify:

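5. Common metrics in Apache APISIX (appendix)

Every organization tracks different metrics. The list below covers some key metrics commonly discussed for Apache APISIX, which together give a rich picture for monitoring and analysis. Exact metric names and labels depend on the APISIX version, so confirm them against the output of /apisix/prometheus/metrics before building dashboards or alerts; recent releases, for example, expose per-status request counts as apisix_http_status and latency as the apisix_http_latency histogram.

HTTP request and response metrics

- apisix_http_request_total: total number of HTTP requests passing through APISIX. Use it to watch overall traffic.
- apisix_http_request_duration_seconds: HTTP request processing time, which helps locate performance bottlenecks.
- apisix_http_request_size_bytes: HTTP request size, for analyzing request data volume.
- apisix_http_response_size_bytes: HTTP response size, for monitoring response data volume.

Upstream metrics

- apisix_upstream_latency: response latency of upstream services.
- apisix_upstream_health: health status of upstream services.

System performance metrics

- apisix_node_cpu_usage: CPU usage of the APISIX node.
- apisix_node_memory_usage: memory usage.

Traffic metrics

- apisix_bandwidth: inbound and outbound bandwidth usage.

Error and exception metrics

- apisix_http_status_code: distribution of HTTP response status codes, especially 4xx and 5xx errors, which is important for spotting potential problems.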
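As a quick way to look at the status-code distribution without Grafana, the exposition text can be summarized on the command line. The sketch below assumes the `apisix_http_status` counter with a `code` label, which is what recent APISIX releases export; `status_class_totals` is a hypothetical helper name, and it assumes label values contain no spaces.

```shell
# Hypothetical helper: read Prometheus exposition text on stdin and print the
# total request count per status class (2xx, 4xx, 5xx, ...), sorted by class.
status_class_totals() {
  awk '
    index($0, "apisix_http_status{") == 1 {
      # Extract the numeric value of the code="NNN" label.
      if (match($0, /code="[0-9]+"/)) {
        code = substr($0, RSTART + 6, RLENGTH - 7)
        class = substr(code, 1, 1) "xx"
        total[class] += $NF   # the sample value is the last field
      }
    }
    END { for (c in total) printf "%s %d\n", c, total[c] }
  ' | sort
}

# Example usage:
#   curl -s metrics.example.com/apisix/prometheus/metrics | status_class_totals
```

These are cumulative counters since the last APISIX restart; for a rate over time, query the same metric through Prometheus with `rate()`.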

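Other scenario-specific metrics

1. Cache metrics (if a caching plugin is in use):

- Cache hit ratio
- Cache size

2. Metrics from extension plugins:

- Depending on which APISIX plugins are configured, plugin-specific metrics may be available, such as the number of requests rejected by a rate-limiting plugin.

Prometheus alert example

Alert rules can be built on many kinds of conditions. For example, Prometheus can be configured to send an alert when the average of apisix_http_request_duration_seconds exceeds a preset threshold.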

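# prometheus.yml: where to send alerts
alerting:
  alertmanagers:
    - static_configs:
        - targets:
            - localhost:9093

# Rule file (loaded through rule_files); rules must sit inside a group.
# The group name "apisix" is illustrative.
groups:
  - name: apisix
    rules:
      - alert: HighRequestLatency
        expr: avg_over_time(apisix_http_request_duration_seconds[2m]) > 0.5
        for: 1m
        labels:
          severity: "critical"
        annotations:
          summary: "High request latency on APISIX"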

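More numerous and more detailed Prometheus metrics add dimensions to monitoring and alerting and make them finer-grained, but collecting them consumes compute resources: the more metrics, the higher the cost. Prometheus also needs more bandwidth and time to scrape them. This can put pressure on the API gateway or other business systems and, in extreme cases, can interfere with normal request handling. Each organization therefore needs to find a balance that fits its business needs and available resources.

Since version 3.0, Apache APISIX has significantly optimized the Prometheus plugin by introducing a separate process that handles metric aggregation and export. This keeps heavy Prometheus metric collection from affecting business traffic.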
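When looking for that balance, it helps to know which metric families contribute the most series, since series count drives both scrape size and storage cost. The following sketch counts series per family from the exposition text; `series_per_family` is a hypothetical helper name, not an APISIX or Prometheus tool.

```shell
# Hypothetical helper: read Prometheus exposition text on stdin and print
# "<series count> <metric family>" lines, largest family first.
series_per_family() {
  awk '
    /^#/ || NF == 0 { next }          # skip HELP/TYPE comments and blank lines
    {
      name = $1
      brace = index(name, "{")        # strip the label set, if any
      if (brace > 0) name = substr(name, 1, brace - 1)
      count[name]++
    }
    END { for (n in count) printf "%d %s\n", count[n], n }
  ' | sort -k1,1nr -k2,2
}

# Example usage:
#   curl -s metrics.example.com/apisix/prometheus/metrics | series_per_family | head
```

Families near the top of the list are the first candidates for trimming labels or disabling, if the scrape is becoming expensive.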