I. Environment

Each server needs at least 2 CPU cores and 4 GB of memory available.

[root@k8s-master01 efk-7.10.2]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready <none> 123d v1.23.17

k8s-master02 Ready <none> 123d v1.23.17

k8s-master03 Ready <none> 123d v1.23.17

k8s-node01 Ready <none> 123d v1.23.17

k8s-node02 Ready <none> 123d v1.23.17

II. Deployment steps

1. Download the deployment files

$ git clone https://gitee.com/jeckjohn/k8s.git

$ cd k8s/efk-7.10.2

2. Create the namespace used by EFK

[root@k8s-master01 efk-7.10.2]# kubectl create -f create-logging-namespace.yaml

3. Create the Elasticsearch cluster

[root@k8s-master01 efk-7.10.2]# kubectl create -f es-service.yaml

[root@k8s-master01 efk-7.10.2]# kubectl create -f es-statefulset.yaml

4. Create Kibana

[root@k8s-master01 efk-7.10.2]# kubectl create -f kibana-deployment.yaml -f kibana-service.yaml

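5. The Fluentd DaemonSet manifest (fluentd-es-ds.yaml) contains a nodeSelector that restricts log collection to the nodes carrying a given label. To collect logs from every node, comment out the two lines shown below.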

[root@k8s-master01 efk-7.10.2]# grep "nodeSelector" fluentd-es-ds.yaml -A 3

nodeSelector:

fluentd: "true"

6. Next, label each node whose logs should be collected, using k8s-node01 as the example:

[root@k8s-master01 efk-7.10.2]# kubectl label node k8s-node01 fluentd=true

[root@k8s-master01 efk-7.10.2]# kubectl get node -l fluentd=true --show-labels

NAME STATUS ROLES AGE VERSION LABELS

k8s-node01 Ready <none> 123d v1.23.17 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,fluentd=true,kubernetes.io/arch=amd64,kubernetes.io/hostname=k8s-node01,kubernetes.io/os=linux,node.kubernetes.io/node=,ssd=true

7. Create Fluentd

[root@k8s-master01 efk-7.10.2]# kubectl create -f fluentd-es-ds.yaml -f fluentd-es-configmap.yaml
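Once all three components have been created, it can help to block until they have actually rolled out instead of polling by hand. The sketch below uses `kubectl rollout status` for that. The workload names (`elasticsearch-logging`, `kibana-logging`, `fluentd-es-v3.1.1`) are assumptions inferred from the Pod names in this walkthrough, and `wait_for_efk` and the `KUBECTL` override are hypothetical helpers, not part of the repository.

```shell
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the commands.
KUBECTL="${KUBECTL:-kubectl}"

# Wait for the three EFK workloads in the logging namespace to finish rolling out.
# $1 is an optional timeout per workload (default 300s).
# Workload names are assumed from the Pod names; adjust them to your manifests.
wait_for_efk() {
  timeout="${1:-300s}"
  "$KUBECTL" rollout status statefulset/elasticsearch-logging -n logging --timeout="$timeout" &&
  "$KUBECTL" rollout status deployment/kibana-logging -n logging --timeout="$timeout" &&
  "$KUBECTL" rollout status daemonset/fluentd-es-v3.1.1 -n logging --timeout="$timeout"
}
```

Calling `wait_for_efk 120s` returns non-zero as soon as one of the workloads fails to become ready within the timeout.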

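8. One field in the Fluentd ConfigMap deserves attention: fluentd-es-configmap.yaml ends with an output.conf section. If the log backend you deploy is something other than Elasticsearch, modify this section accordingly.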

output.conf: |-

<match **>

@id elasticsearch

@type elasticsearch

...

...

9. Confirm that all of the created Pods have started successfully

[root@k8s-master01 efk-7.10.2]# kubectl get po -n logging

NAME READY STATUS RESTARTS AGE

elasticsearch-logging-0 1/1 Running 0 23m

fluentd-es-v3.1.1-wjkqn 1/1 Running 0 6m51s

kibana-logging-7bf48fb7b4-4zsjr 1/1 Running 0 14m
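The check above can also be scripted. The following is a minimal sketch, assuming the default table layout of `kubectl get po` (header on the first line, READY in column 2, STATUS in column 3); `pods_not_ready` is a hypothetical helper name.

```shell
# Read `kubectl get po` table output on stdin and print every Pod that is
# not in the Running state or whose READY count is incomplete (e.g. 0/1).
# Exit status is 1 if at least one such Pod is found, 0 otherwise.
pods_not_ready() {
  awk 'NR > 1 && NF >= 3 {
         split($2, r, "/")
         if ($3 != "Running" || r[1] != r[2]) { print $1; bad = 1 }
       }
       END { exit (bad ? 1 : 0) }'
}
```

A typical call would be `kubectl get po -n logging | pods_not_ready`, which prints nothing and returns 0 when every Pod is healthy.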

10. Look up the port that Kibana exposes, then access Kibana

[root@k8s-master01 efk-7.10.2]# kubectl get svc -n logging

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

elasticsearch-logging ClusterIP None <none> 9200/TCP,9300/TCP 35s

kibana-logging NodePort 10.0.102.81 <none> 5601:31853/TCP 24s
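In the PORT(S) column, `5601:31853/TCP` means that service port 5601 is published on node port 31853. As a small sketch, the helpers below (hypothetical names `node_port` and `kibana_url`) pull the node port out of that value and build the Kibana address, using the node IP 192.168.1.31 and the `/kibana/` path from this walkthrough.

```shell
# Extract the node port from a PORT(S) value such as "5601:31853/TCP".
node_port() {
  p="${1#*:}"                # drop the service port and the colon
  printf '%s\n' "${p%%/*}"   # drop the protocol suffix
}

# Build the Kibana URL from a node IP and a PORT(S) value.
kibana_url() {
  printf 'http://%s:%s/kibana/\n' "$1" "$(node_port "$2")"
}

kibana_url 192.168.1.31 "5601:31853/TCP"
# prints http://192.168.1.31:31853/kibana/
```

On a live cluster the node port can also be read directly with `kubectl get svc kibana-logging -n logging -o jsonpath='{.spec.ports[0].nodePort}'`.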

III. Using Kibana

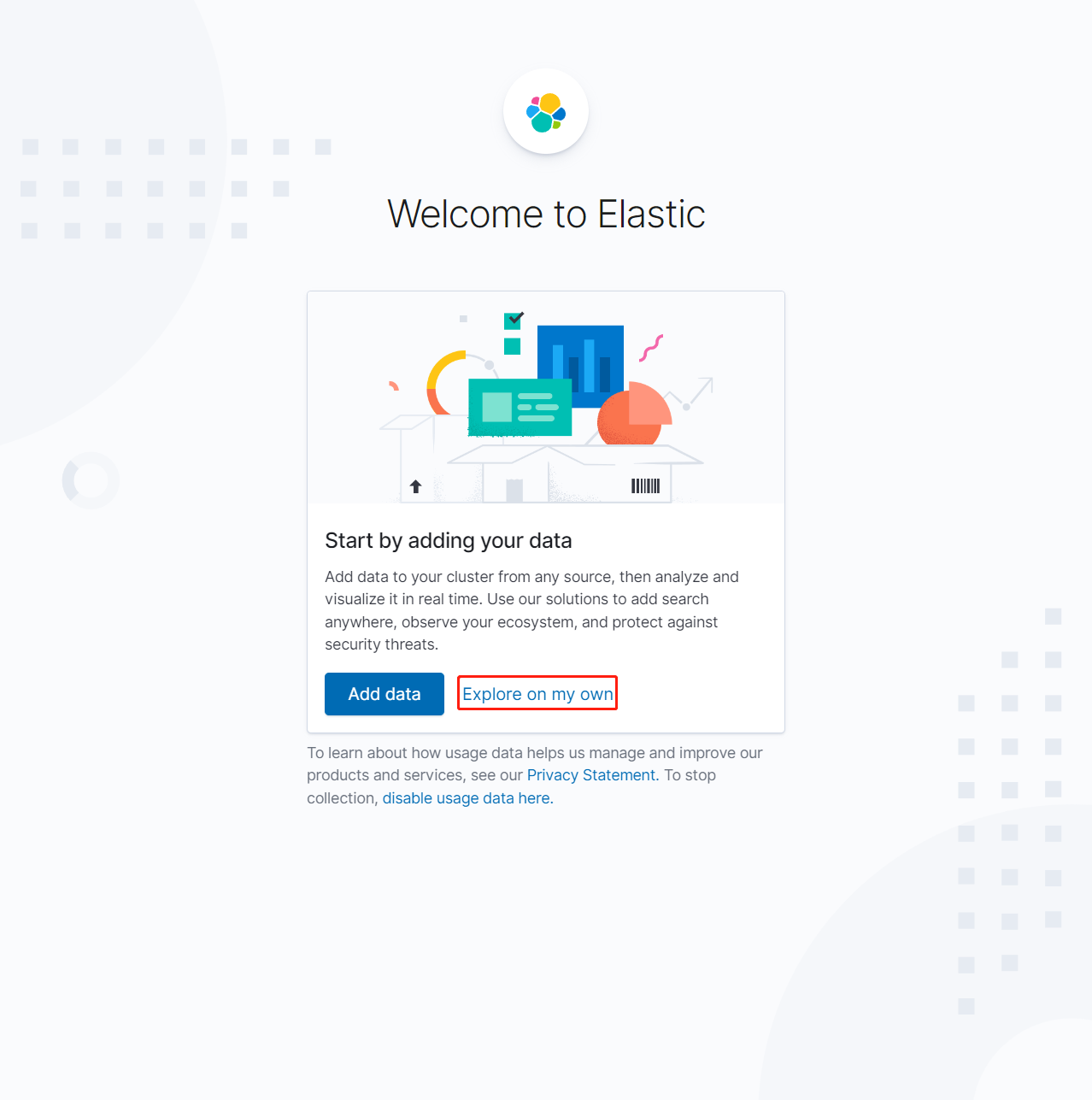

1. Kibana is reachable on any node IP at port 31853 under the /kibana/ path; this example uses http://192.168.1.31:31853/kibana/. Click [Explore on my own].

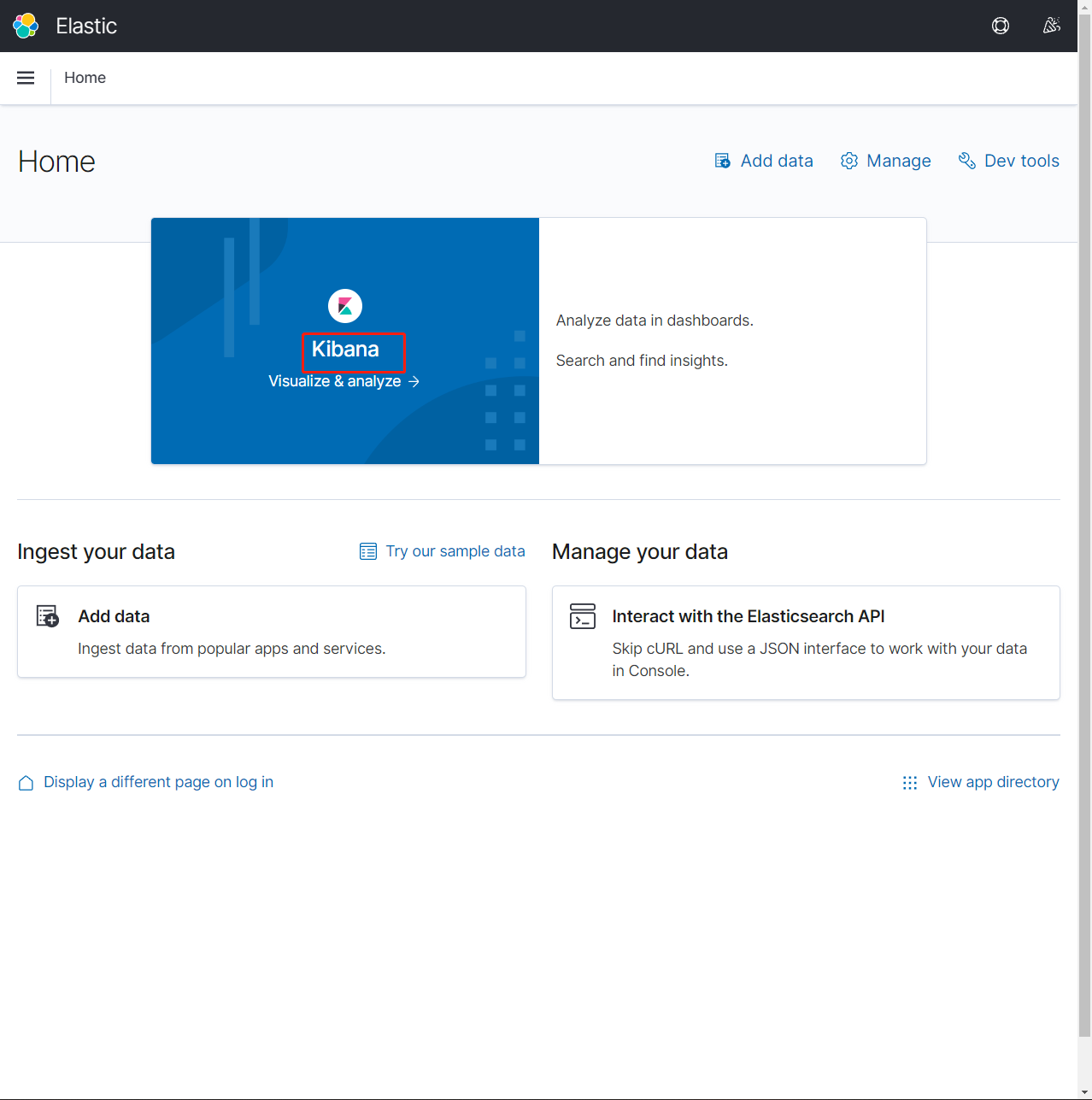

2. Click [Kibana]

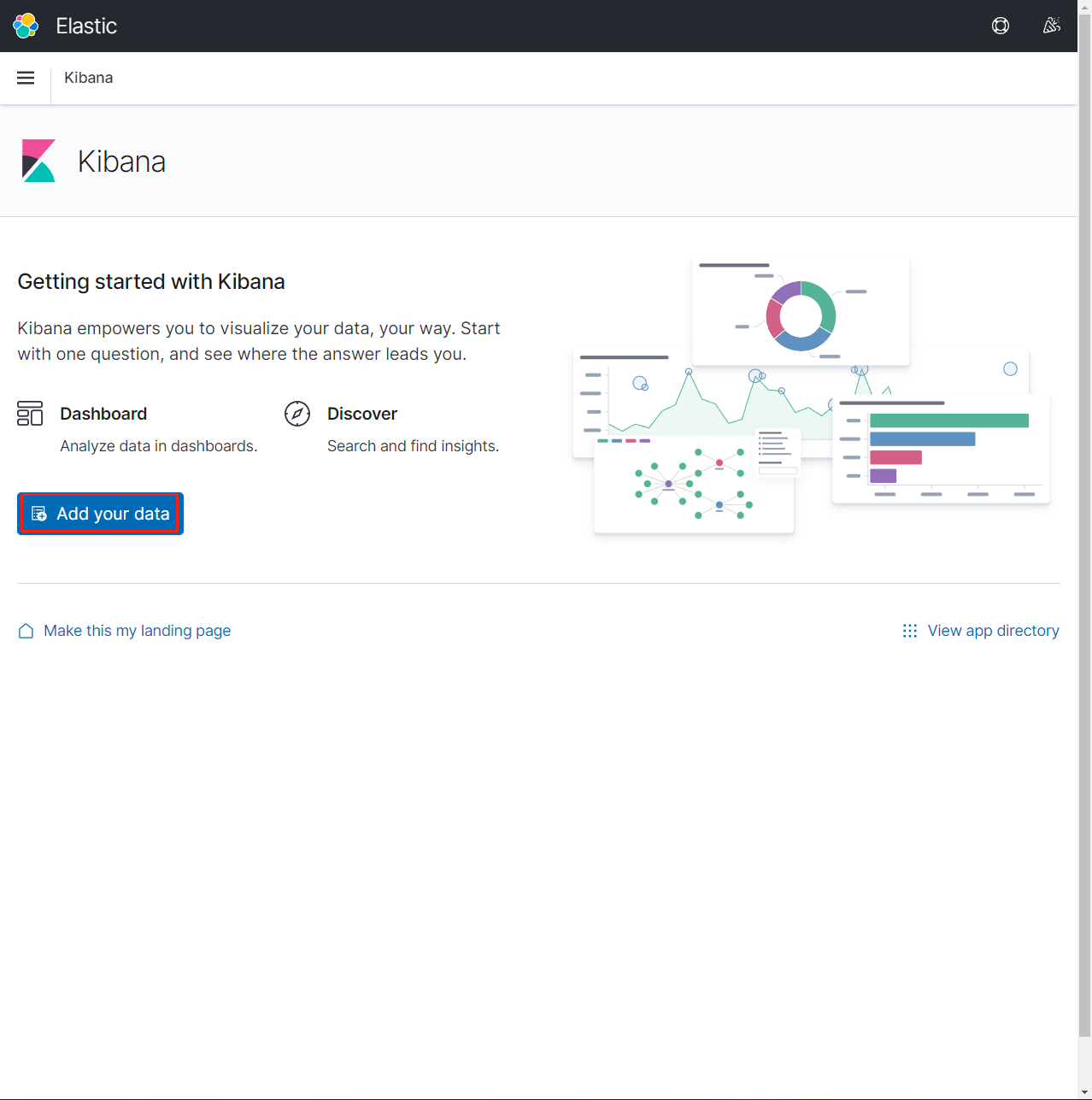

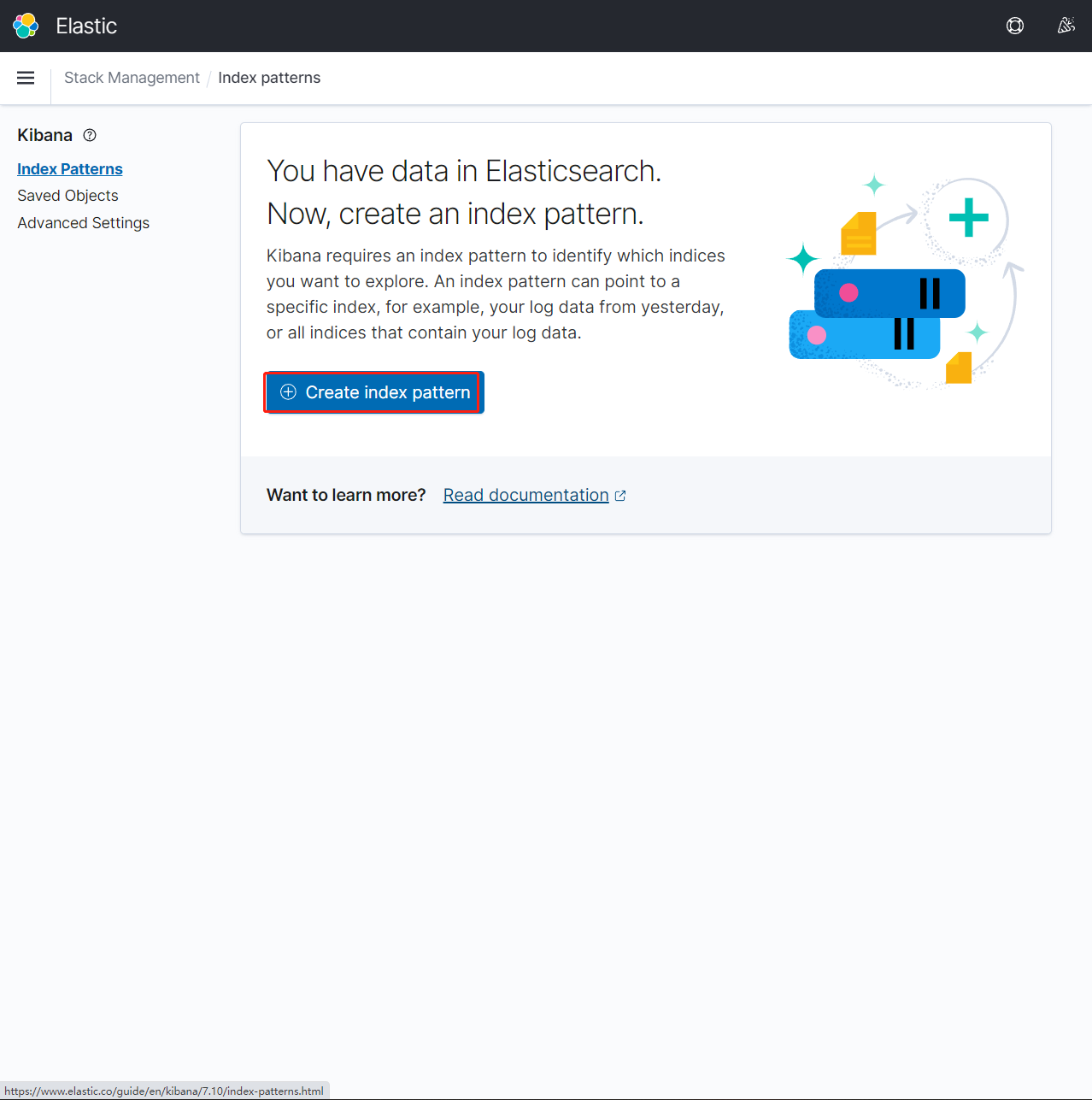

3. Click [Add your data], then [Create index pattern]

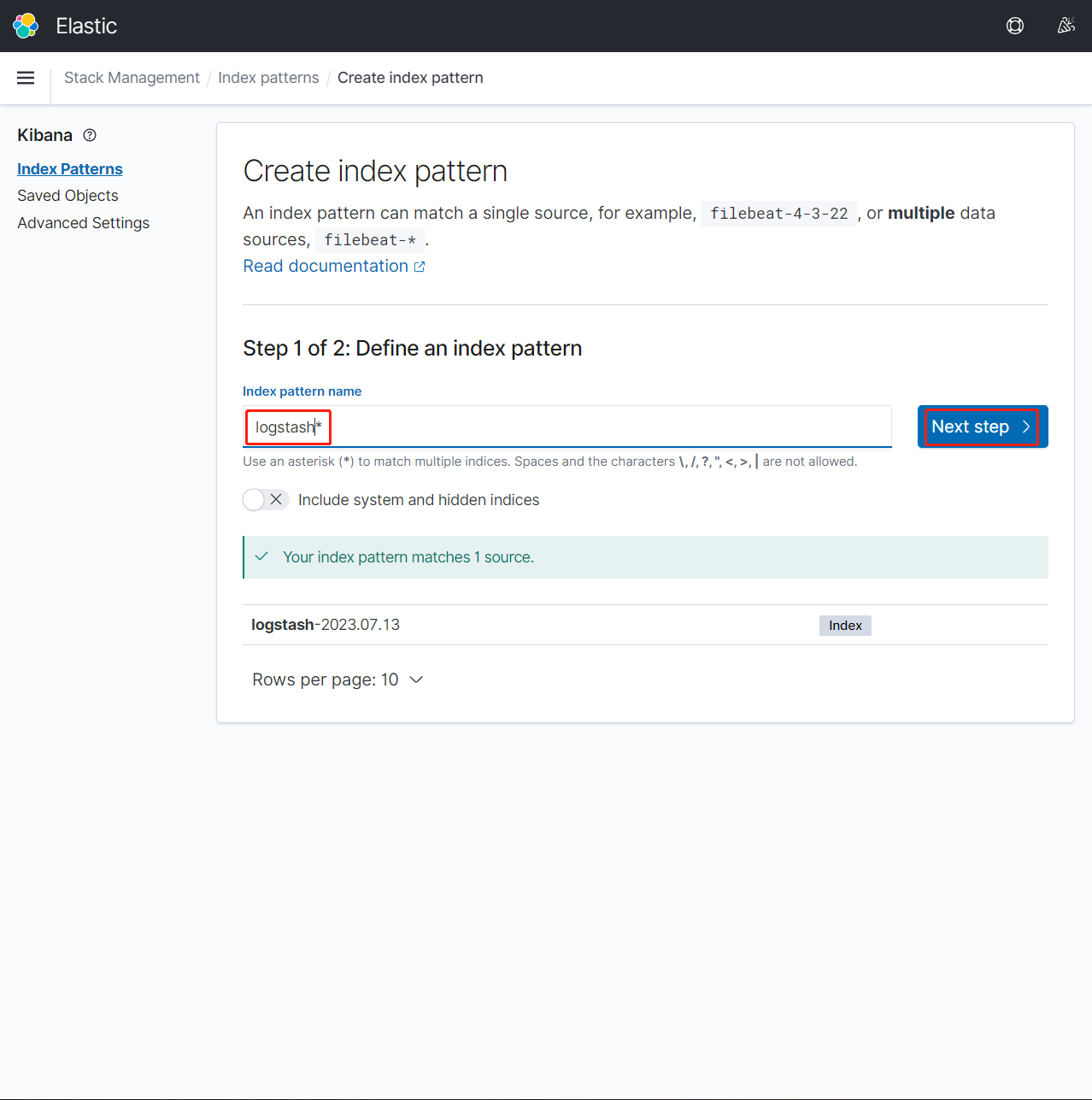

4. In Index pattern name, enter the pattern [logstash*], then click [Next step]. Continue only if the prompt [Your index pattern matches 1 source.] appears after you type the pattern.

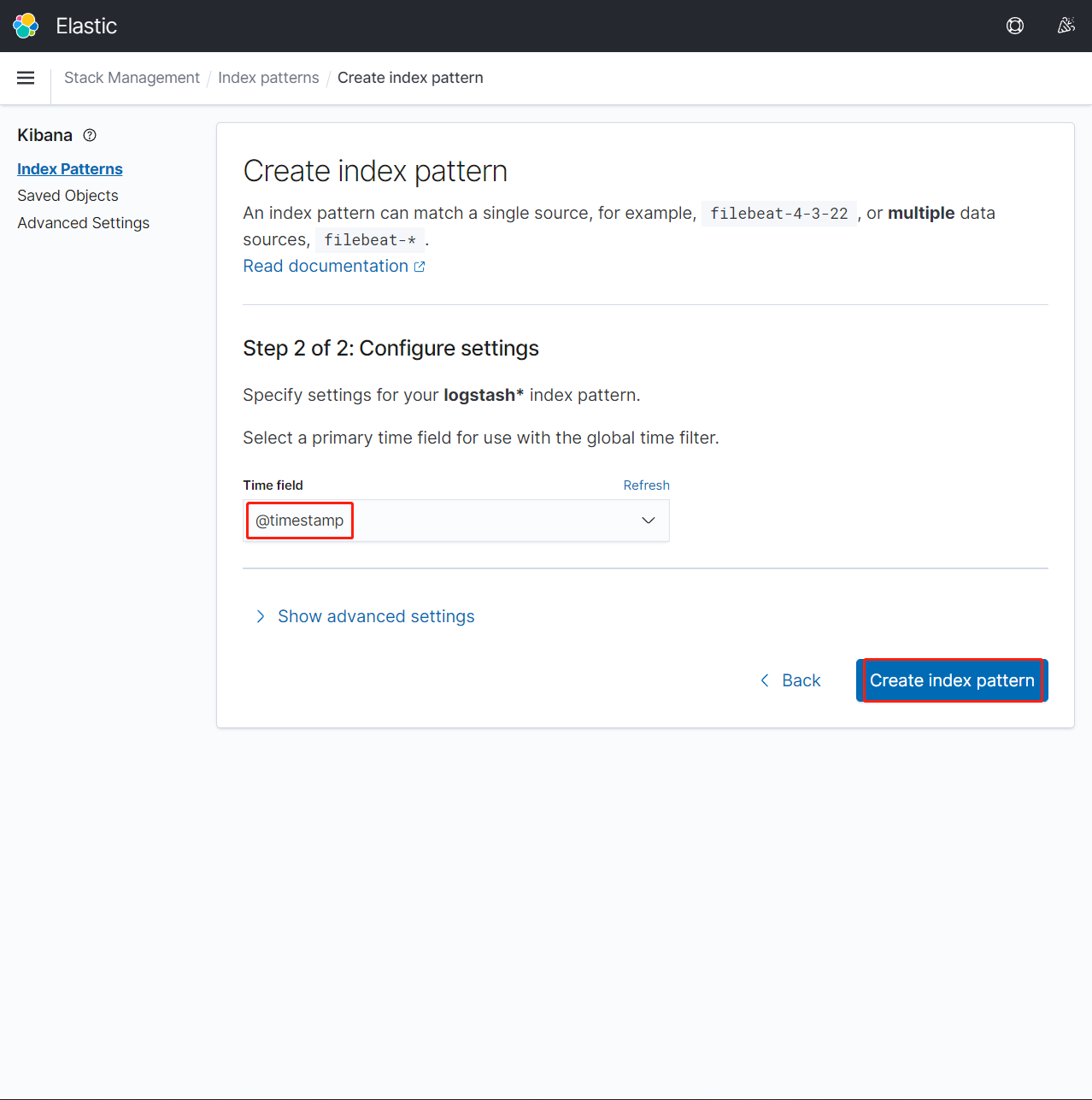

5. For Time field, select [@timestamp], then click [Create index pattern]

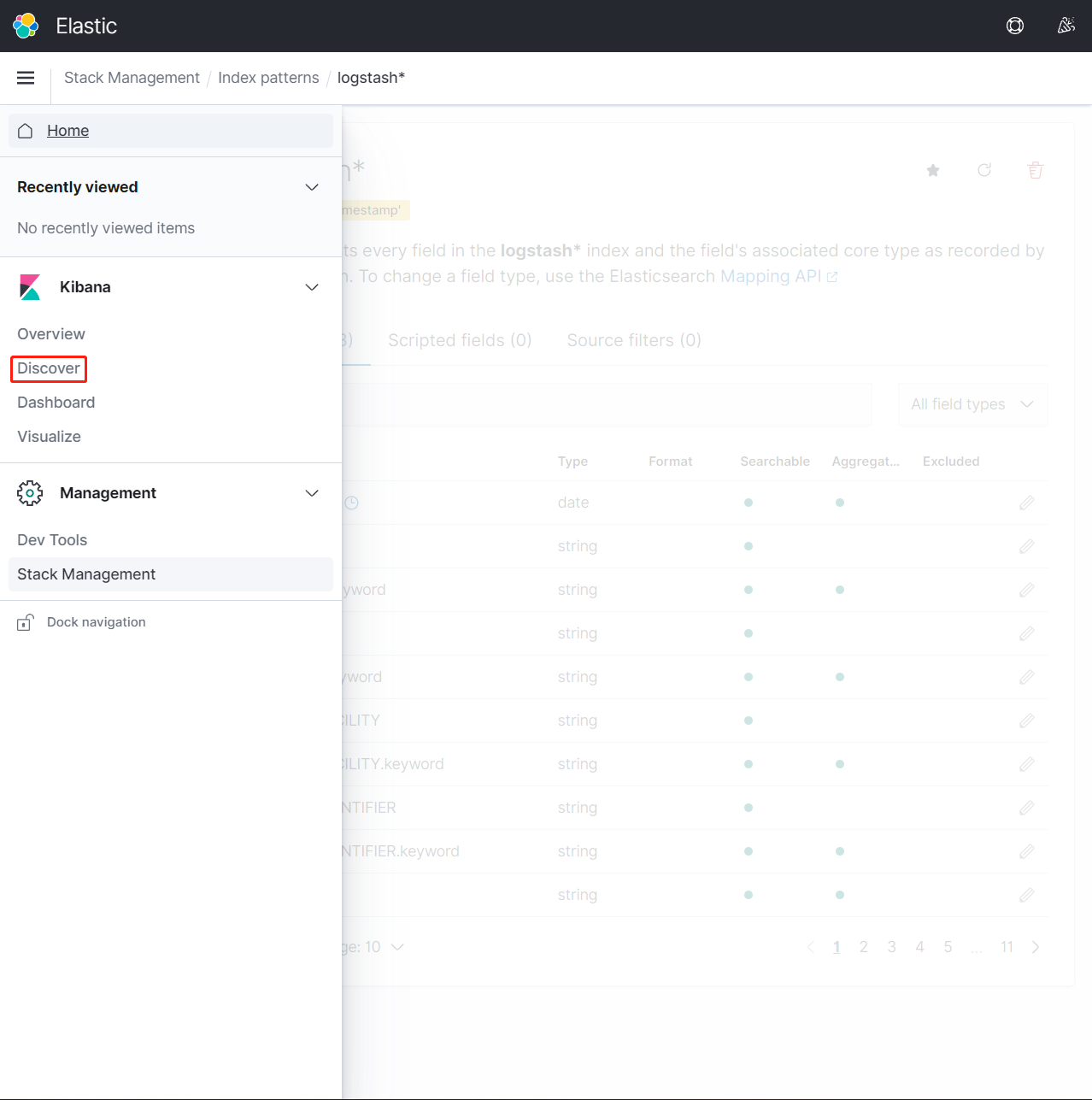

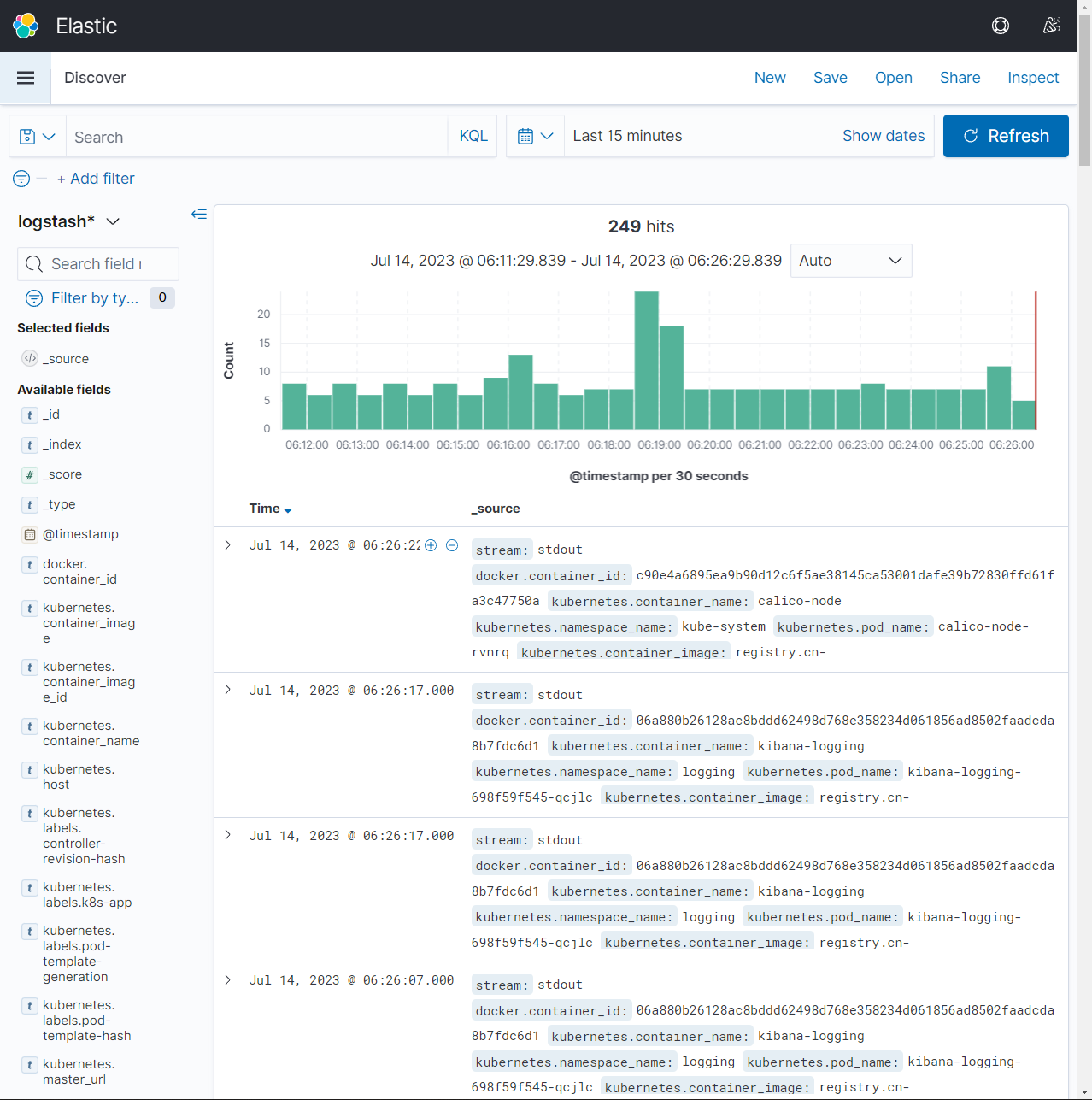

6. Open Discover from the menu bar to see the collected logs

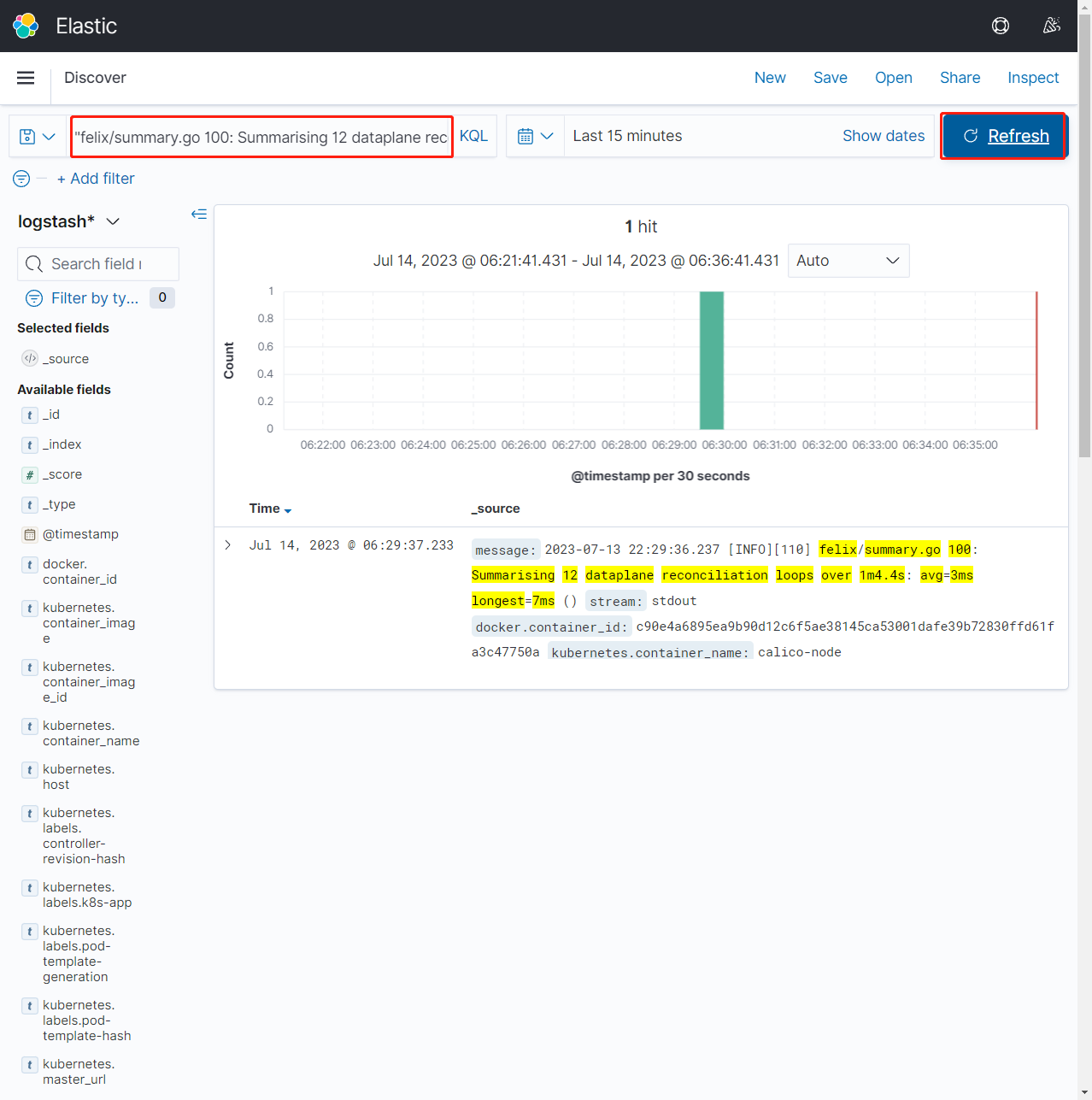

7. From the command line, view the logs of calico-node-rvnrq to verify that they are being collected

[root@k8s-master01 efk-7.10.2]# kubectl get po -n kube-system -owide | grep node01

calico-node-rvnrq 1/1 Running 5 (3d11h ago) 124d 192.168.1.34 k8s-node01 <none> <none>

metrics-server-6bf7dcd649-v7gqt 1/1 Running 9 (3d11h ago) 124d 172.17.125.27 k8s-node01 <none> <none>

[root@k8s-master01 efk-7.10.2]# kubectl logs calico-node-rvnrq -n kube-system --tail 5

2023-07-13 22:27:40.836 [INFO][109] monitor-addresses/autodetection_methods.go 103: Using autodetected IPv4 address on interface ens33: 192.168.1.34/24

2023-07-13 22:28:31.850 [INFO][110] felix/summary.go 100: Summarising 12 dataplane reconciliation loops over 1m7.4s: avg=3ms longest=8ms ()

2023-07-13 22:28:40.838 [INFO][109] monitor-addresses/autodetection_methods.go 103: Using autodetected IPv4 address on interface ens33: 192.168.1.34/24

2023-07-13 22:29:36.237 [INFO][110] felix/summary.go 100: Summarising 12 dataplane reconciliation loops over 1m4.4s: avg=3ms longest=7ms ()

2023-07-13 22:29:40.839 [INFO][109] monitor-addresses/autodetection_methods.go 103: Using autodetected IPv4 address on interface ens33: 192.168.1.34/24

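8. Copy the text below into the Search box and click [Update]. The matching entry shows up in the Kibana console, which confirms that the log has been collected. Note that the text must be wrapped in double quotes ("") when pasted into the Search box.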

felix/summary.go 100: Summarising 12 dataplane reconciliation loops over 1m4.4s: avg=3ms longest=7ms
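Preparing that phrase by hand is easy to get wrong, so here is a small sketch that turns a raw calico-node log line into a quoted search phrase. It assumes the line layout shown in the `kubectl logs` output above (date, time, `[LEVEL][pid]`, then the message) and a message without embedded double quotes; `to_search_phrase` is a hypothetical helper name.

```shell
# Read log lines on stdin, strip the "date time [LEVEL][pid] " prefix and a
# trailing " ()", then wrap what is left in double quotes for the Search box.
to_search_phrase() {
  sed -E 's/^[0-9-]+ [0-9:.]+ \[[A-Z]+\]\[[0-9]+\] //; s/ \(\)$//; s/.*/"&"/'
}

printf '%s\n' '2023-07-13 22:29:36.237 [INFO][110] felix/summary.go 100: Summarising 12 dataplane reconciliation loops over 1m4.4s: avg=3ms longest=7ms ()' |
  to_search_phrase
# prints "felix/summary.go 100: Summarising 12 dataplane reconciliation loops over 1m4.4s: avg=3ms longest=7ms"
```

Piping `kubectl logs calico-node-rvnrq -n kube-system --tail 5` through the function yields one ready-to-paste phrase per log line.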

IV. Cleaning up

Run the following commands to clean up the environment:

[root@k8s-master01 ~]# cd /root/k8s/efk-7.10.2

[root@k8s-master01 efk-7.10.2]# kubectl delete -f fluentd-es-ds.yaml -f fluentd-es-configmap.yaml

[root@k8s-master01 efk-7.10.2]# kubectl delete -f kibana-deployment.yaml -f kibana-service.yaml

[root@k8s-master01 efk-7.10.2]# kubectl delete -f es-service.yaml

[root@k8s-master01 efk-7.10.2]# kubectl delete -f es-statefulset.yaml

[root@k8s-master01 efk-7.10.2]# kubectl delete -f create-logging-namespace.yaml

[root@k8s-master01 efk-7.10.2]# kubectl label node k8s-node01 fluentd-
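The same cleanup can be wrapped in one function so that it is safe to run more than once. This is a sketch under a few assumptions: it is run from the efk-7.10.2 directory, `efk_cleanup` and the `KUBECTL` override are hypothetical helpers, and `--ignore-not-found` is added so that already-deleted resources do not abort the run.

```shell
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the commands.
KUBECTL="${KUBECTL:-kubectl}"

# Delete the EFK manifests in the same order as the manual steps, with the
# namespace last, then remove the fluentd label from the node given as $1
# (default: k8s-node01).
efk_cleanup() {
  for f in fluentd-es-ds.yaml fluentd-es-configmap.yaml \
           kibana-deployment.yaml kibana-service.yaml \
           es-service.yaml es-statefulset.yaml \
           create-logging-namespace.yaml; do
    "$KUBECTL" delete -f "$f" --ignore-not-found || return 1
  done
  # A trailing "-" after the key removes the label from the node.
  "$KUBECTL" label node "${1:-k8s-node01}" fluentd-
}
```

Running `KUBECTL=echo; efk_cleanup` first prints the eight commands without touching the cluster, which is a cheap way to review them before the real run.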