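This section covers KEDA's online installation with Helm, offline installation, and uninstallation, preparing the runtime environment for the autoscaling exercises that follow. Using periodic scaling as the first example, it shows how KEDA scales a workload on a time-based schedule. A RabbitMQ queue scenario then demonstrates trigger configuration, test resource preparation, and scaling verification. A MySQL data-driven scenario shows how KEDA scales workloads based on database metrics. Finally, a ScaledJob scenario completes the job-style processing exercise, together with the cleanup procedure for the whole lab environment.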

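1. KEDA Installation and Usage

Official documentation: https://keda.sh/docs/2.16/deploy/

Note: KEDA requires a Kubernetes cluster running version 1.27 or later.

1.1 Installing KEDA

1.1.1 Online Installation with Helm

1. Add the KEDA Helm repository

# Add the Helm repository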

[root@k8s-master01 ~]# helm repo add kedacore https://kedacore.github.io/charts

# Update the Helm repositories

[root@k8s-master01 ~]# helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "ot-helm" chart repository

...Successfully got an update from the "kedacore" chart repository

Update Complete. ⎈Happy Helming!⎈

2. Install KEDA

[root@k8s-master01 ~]# helm install keda kedacore/keda --namespace keda --create-namespace

...

...

Learn more about KEDA:

- Documentation: https://keda.sh/

- Support: https://keda.sh/support/

- File an issue: https://github.com/kedacore/keda/issues/new/choose

3. Check the status of the KEDA pods

[root@k8s-master01 ~]# kgp -n keda
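The short commands used throughout this walkthrough (`k`, `kg`, `kgp`, `kaf`) are shell aliases that are never defined in the text. A plausible set of definitions, inferred only from how the commands are used here and therefore an assumption, might look like this:

```shell
# Assumed alias definitions (inferred from usage; not shown in the original).
alias k='kubectl'
alias kg='kubectl get'
alias kgp='kubectl get pods'
alias kaf='kubectl apply -f'
```

Placing these lines in `~/.bashrc` would make them available in every new interactive shell.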

1.1.2 Offline Installation with Helm

1. Add the KEDA Helm repository

# Add the Helm repository

[root@k8s-master01 ~]# helm repo add kedacore https://kedacore.github.io/charts

# Update the Helm repositories

[root@k8s-master01 ~]# helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "ot-helm" chart repository

...Successfully got an update from the "kedacore" chart repository

Update Complete. ⎈Happy Helming!⎈

2. Download and unpack the KEDA chart

# Download the KEDA chart

[root@k8s-master01 ~]# helm pull kedacore/keda --version 2.16.1

# Unpack the KEDA chart

[root@k8s-master01 ~]# tar xf keda-2.16.1.tgz

3. Create the namespace

[root@k8s-master01 ~]# kubectl create ns keda

4. Edit values.yaml

[root@k8s-master01 ~]# vim /root/keda/values.yaml

...

...

# Change line 13

13 registry: registry.cn-hangzhou.aliyuncs.com

# Change line 15

15 repository: github_images1024/keda

# Change line 17

17 tag: "2.16.1"

# Change line 20

20 registry: registry.cn-hangzhou.aliyuncs.com

# Change line 22

22 repository: github_images1024/keda-metrics-apiserver

# Change line 24

24 tag: "2.16.1"

# Change line 27

27 registry: registry.cn-hangzhou.aliyuncs.com

# Change line 29

29 repository: github_images1024/keda-admission-webhooks

# Change line 31

31 tag: "2.16.1"
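Editing by line number is fragile, because line positions can shift between chart versions. As an alternative sketch, the same image settings can be kept in a small override file and passed to Helm with `-f`. The file name `keda-image-overrides.yaml` is a hypothetical choice; the key names follow the chart's values.yaml and the values are the ones listed above.

```shell
# Write the image overrides to a separate file (the file name is arbitrary).
cat > keda-image-overrides.yaml <<'EOF'
image:
  keda:
    registry: registry.cn-hangzhou.aliyuncs.com
    repository: github_images1024/keda
    tag: "2.16.1"
  metricsApiServer:
    registry: registry.cn-hangzhou.aliyuncs.com
    repository: github_images1024/keda-metrics-apiserver
    tag: "2.16.1"
  webhooks:
    registry: registry.cn-hangzhou.aliyuncs.com
    repository: github_images1024/keda-admission-webhooks
    tag: "2.16.1"
EOF

# Then, from the unpacked chart directory, install with the override file:
#   helm install keda . -n keda -f <path-to>/keda-image-overrides.yaml
```

With this approach the chart's own values.yaml stays untouched, which makes later chart upgrades easier to compare.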

Complete values.yaml file

[root@k8s-master01 ~]# egrep -v "^#|^$|#" /root/keda/values.yaml

global:
  image:
    registry: null
image:
  keda:
    registry: registry.cn-hangzhou.aliyuncs.com
    repository: github_images1024/keda
    tag: "2.16.1"
  metricsApiServer:
    registry: registry.cn-hangzhou.aliyuncs.com
    repository: github_images1024/keda-metrics-apiserver
    tag: "2.16.1"
  webhooks:
    registry: registry.cn-hangzhou.aliyuncs.com
    repository: github_images1024/keda-admission-webhooks
    tag: "2.16.1"
  pullPolicy: Always
clusterName: kubernetes-default
clusterDomain: cluster.local
crds:
  install: true
  additionalAnnotations:
    {}
watchNamespace: ""
imagePullSecrets: []
networkPolicy:
  enabled: false
  flavor: "cilium"
  cilium:
    operator:
      extraEgressRules: []
operator:
  name: keda-operator
  revisionHistoryLimit: 10
  replicaCount: 1
  disableCompression: true
  affinity: {}
  extraContainers: []
  extraInitContainers: []
  livenessProbe:
    initialDelaySeconds: 25
    periodSeconds: 10
    timeoutSeconds: 1
    failureThreshold: 3
    successThreshold: 1
  readinessProbe:
    initialDelaySeconds: 20
    periodSeconds: 3
    timeoutSeconds: 1
    failureThreshold: 3
    successThreshold: 1
metricsServer:
  revisionHistoryLimit: 10
  replicaCount: 1
  disableCompression: true
  dnsPolicy: ClusterFirst
  useHostNetwork: false
  affinity: {}
  livenessProbe:
    initialDelaySeconds: 5
    periodSeconds: 10
    timeoutSeconds: 1
    failureThreshold: 3
    successThreshold: 1
  readinessProbe:
    initialDelaySeconds: 5
    periodSeconds: 3
    timeoutSeconds: 1
    failureThreshold: 3
    successThreshold: 1
webhooks:
  enabled: true
  port: ""
  healthProbePort: 8081
  livenessProbe:
    initialDelaySeconds: 25
    periodSeconds: 10
    timeoutSeconds: 1
    failureThreshold: 3
    successThreshold: 1
  readinessProbe:
    initialDelaySeconds: 20
    periodSeconds: 3
    timeoutSeconds: 1
    failureThreshold: 3
    successThreshold: 1
  useHostNetwork: false
  name: keda-admission-webhooks
  revisionHistoryLimit: 10
  replicaCount: 1
  affinity: {}
  failurePolicy: Ignore
upgradeStrategy:
  operator: {}
  metricsApiServer: {}
  webhooks: {}
podDisruptionBudget:
  operator: {}
  metricServer: {}
  webhooks: {}
additionalLabels:
  {}
additionalAnnotations:
  {}
podAnnotations:
  keda: {}
  metricsAdapter: {}
  webhooks: {}
podLabels:
  keda: {}
  metricsAdapter: {}
  webhooks: {}
rbac:
  create: true
  aggregateToDefaultRoles: false
  enabledCustomScaledRefKinds: true
  controlPlaneServiceAccountsNamespace: kube-system
  scaledRefKinds:
    - apiGroup: "*"
      kind: "*"
serviceAccount:
  operator:
    create: true
    name: keda-operator
    automountServiceAccountToken: true
    annotations: {}
  metricServer:
    create: true
    name: keda-metrics-server
    automountServiceAccountToken: true
    annotations: {}
  webhooks:
    create: true
    name: keda-webhook
    automountServiceAccountToken: true
    annotations: {}
podIdentity:
  azureWorkload:
    enabled: false
    clientId: ""
    tenantId: ""
    tokenExpiration: 3600
  aws:
    irsa:
      enabled: false
      audience: "sts.amazonaws.com"
      roleArn: ""
      stsRegionalEndpoints: "true"
      tokenExpiration: 86400
  gcp:
    enabled: false
    gcpIAMServiceAccount: ""
grpcTLSCertsSecret: ""
hashiCorpVaultTLS: ""
logging:
  operator:
    level: info
    format: console
    timeEncoding: rfc3339
    stackTracesEnabled: false
  metricServer:
    level: 0
    stderrthreshold: ERROR
  webhooks:
    level: info
    format: console
    timeEncoding: rfc3339
securityContext:
  operator:
    capabilities:
      drop:
      - ALL
    allowPrivilegeEscalation: false
    readOnlyRootFilesystem: true
    seccompProfile:
      type: RuntimeDefault
  metricServer:
    capabilities:
      drop:
      - ALL
    allowPrivilegeEscalation: false
    readOnlyRootFilesystem: true
    seccompProfile:
      type: RuntimeDefault
  webhooks:
    capabilities:
      drop:
      - ALL
    allowPrivilegeEscalation: false
    readOnlyRootFilesystem: true
    seccompProfile:
      type: RuntimeDefault
podSecurityContext:
  operator:
    runAsNonRoot: true
  metricServer:
    runAsNonRoot: true
  webhooks:
    runAsNonRoot: true
service:
  type: ClusterIP
  portHttps: 443
  portHttpsTarget: 6443
  annotations: {}
resources:
  operator:
    limits:
      cpu: 1
      memory: 1000Mi
    requests:
      cpu: 100m
      memory: 100Mi
  metricServer:
    limits:
      cpu: 1
      memory: 1000Mi
    requests:
      cpu: 100m
      memory: 100Mi
  webhooks:
    limits:
      cpu: 1
      memory: 1000Mi
    requests:
      cpu: 100m
      memory: 100Mi
nodeSelector: {}
tolerations: []
topologySpreadConstraints:
  operator: []
  metricsServer: []
  webhooks: []
affinity: {}
priorityClassName: ""
http:
  timeout: 3000
  keepAlive:
    enabled: true
  minTlsVersion: TLS12
profiling:
  operator:
    enabled: false
    port: 8082
  metricsServer:
    enabled: false
    port: 8083
  webhooks:
    enabled: false
    port: 8084
extraArgs:
  keda: {}
  metricsAdapter: {}
env: []
volumes:
  keda:
    extraVolumes: []
    extraVolumeMounts: []
  metricsApiServer:
    extraVolumes: []
    extraVolumeMounts: []
  webhooks:
    extraVolumes: []
    extraVolumeMounts: []
prometheus:
  metricServer:
    enabled: false
    port: 8080
    portName: metrics
    serviceMonitor:
      enabled: false
      jobLabel: ""
      targetLabels: []
      podTargetLabels: []
      port: metrics
      targetPort: ""
      interval: ""
      scrapeTimeout: ""
      relabellings: []
      relabelings: []
      metricRelabelings: []
      additionalLabels: {}
      scheme: http
      tlsConfig: {}
    podMonitor:
      enabled: false
      interval: ""
      scrapeTimeout: ""
      namespace: ""
      additionalLabels: {}
      relabelings: []
      metricRelabelings: []
  operator:
    enabled: false
    port: 8080
    serviceMonitor:
      enabled: false
      jobLabel: ""
      targetLabels: []
      podTargetLabels: []
      port: metrics
      targetPort: ""
      interval: ""
      scrapeTimeout: ""
      relabellings: []
      relabelings: []
      metricRelabelings: []
      additionalLabels: {}
      scheme: http
      tlsConfig: {}
    podMonitor:
      enabled: false
      interval: ""
      scrapeTimeout: ""
      namespace: ""
      additionalLabels: {}
      relabelings: []
      metricRelabelings: []
    prometheusRules:
      enabled: false
      namespace: ""
      additionalLabels: {}
      alerts:
        []
  webhooks:
    enabled: false
    port: 8080
    serviceMonitor:
      enabled: false
      jobLabel: ""
      targetLabels: []
      podTargetLabels: []
      port: metrics
      targetPort: ""
      interval: ""
      scrapeTimeout: ""
      relabellings: []
      relabelings: []
      metricRelabelings: []
      additionalLabels: {}
      scheme: http
      tlsConfig: {}
    prometheusRules:
      enabled: false
      namespace: ""
      additionalLabels: {}
      alerts: []
opentelemetry:
  collector:
    uri: ""
  operator:
    enabled: false
certificates:
  autoGenerated: true
  secretName: kedaorg-certs
  mountPath: /certs
  certManager:
    enabled: false
    generateCA: true
    caSecretName: "kedaorg-ca"
    secretTemplate: {}
    issuer:
      generate: true
      name: foo-org-ca
      kind: ClusterIssuer
      group: cert-manager.io
  operator:
permissions:
  metricServer:
    restrict:
      secret: false
  operator:
    restrict:
      secret: false
      namesAllowList: []
extraObjects: []
asciiArt: true
customManagedBy: ""

5. Install KEDA

[root@k8s-master01 keda]# helm install keda . -n keda

Check the resources

# List the pods

[root@k8s-master01 keda]# kgp -n keda

NAME READY STATUS RESTARTS AGE

keda-admission-webhooks-9664c747b-rxk88 1/1 Running 0 46s

keda-operator-848cf7589c-swfzd 1/1 Running 1 (42s ago) 46s

keda-operator-metrics-apiserver-7bc7d5755b-zdkbm 1/1 Running 0 46s

# List the CRDs

[root@k8s-master01 ~]# kg crd | grep keda

cloudeventsources.eventing.keda.sh 2025-04-03T03:49:23Z

clustercloudeventsources.eventing.keda.sh 2025-04-03T03:49:23Z

clustertriggerauthentications.keda.sh 2025-04-03T03:49:23Z

scaledjobs.keda.sh 2025-04-03T03:49:23Z

scaledobjects.keda.sh 2025-04-03T03:49:23Z

triggerauthentications.keda.sh 2025-04-03T03:49:23Z

# List the API resources

[root@k8s-master01 ~]# kubectl api-resources | grep keda

cloudeventsources eventing.keda.sh/v1alpha1 true CloudEventSource

clustercloudeventsources eventing.keda.sh/v1alpha1 false ClusterCloudEventSource

clustertriggerauthentications cta,clustertriggerauth keda.sh/v1alpha1 false ClusterTriggerAuthentication

scaledjobs sj keda.sh/v1alpha1 true ScaledJob

scaledobjects so keda.sh/v1alpha1 true ScaledObject

triggerauthentications ta,triggerauth keda.sh/v1alpha1 true TriggerAuthentication

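1.2 Uninstalling KEDA

To remove KEDA from the cluster, first delete all the ScaledObject and ScaledJob resources you have created. Once that is done, uninstall the Helm chart:

# Delete the related resources

kubectl delete $(kubectl get scaledobjects.keda.sh,scaledjobs.keda.sh -A \
  -o jsonpath='{"-n "}{.items[*].metadata.namespace}{" "}{.items[*].kind}{"/"}{.items[*].metadata.name}{"\n"}')

# Uninstall KEDA

helm uninstall keda -n keda

Note: if you uninstall the Helm chart without first deleting the ScaledObject or ScaledJob resources you created, they become orphaned. In that case, patch the resources to remove their finalizers; once the finalizers are gone, the resources should be deleted automatically: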

for i in $(kubectl get scaledobjects -A \
  -o jsonpath='{"-n "}{.items[*].metadata.namespace}{" "}{.items[*].kind}{"/"}{.items[*].metadata.name}{"\n"}');
do kubectl patch $i -p '{"metadata":{"finalizers":null}}' --type=merge
done

for i in $(kubectl get scaledjobs -A \
  -o jsonpath='{"-n "}{.items[*].metadata.namespace}{" "}{.items[*].kind}{"/"}{.items[*].metadata.name}{"\n"}');
do kubectl patch $i -p '{"metadata":{"finalizers":null}}' --type=merge

done
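The commands above use `.items[*]`, which joins the values of all matching items into one space-separated string, and they rely on unquoted word splitting, so they can misbehave as soon as more than one resource exists. A more defensive sketch is shown below: it lists one resource per line and builds one command per resource. The function names `list_keda_resources` and `build_commands`, and the action names `delete` and `unfinalize`, are hypothetical helpers for this illustration, not part of KEDA or kubectl.

```shell
# Print every ScaledObject and ScaledJob as "<namespace> <Kind>/<name>", one per line.
list_keda_resources() {
  kubectl get scaledobjects.keda.sh,scaledjobs.keda.sh -A \
    -o jsonpath='{range .items[*]}{.metadata.namespace}{" "}{.kind}{"/"}{.metadata.name}{"\n"}{end}'
}

# Read those lines on stdin and print one kubectl command per resource.
# $1 is "delete" or "unfinalize" (hypothetical action names for this sketch).
build_commands() {
  while read -r ns res; do
    [ -n "$ns" ] || continue
    case "$1" in
      delete)
        printf 'kubectl delete -n %s %s\n' "$ns" "$res" ;;
      unfinalize)
        printf 'kubectl patch -n %s %s --type=merge -p %s\n' \
          "$ns" "$res" "'{\"metadata\":{\"finalizers\":null}}'" ;;
    esac
  done
}

# Review the generated commands first, then pipe them to sh to run them:
#   list_keda_resources | build_commands delete
#   list_keda_resources | build_commands delete | sh
```

Printing the commands before executing them gives a chance to check which resources will be touched, which matters for a cluster-wide (`-A`) operation.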


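2. KEDA Autoscaling in Practice

2.1 Scheduled (Cron-Based) Scaling

Official documentation: https://keda.sh/docs/2.16/scalers/cron/

KEDA can scale a service on a recurring schedule, including scaling it down to zero. Suppose a service is busy only between 7 and 9 every morning: KEDA can scale it out during that window and scale it back in for the rest of the day to save resources.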

2.1.1 Create Test Resources

1. Define a YAML file

## Generate the template file

[root@k8s-master01 ~]# kubectl create deploy nginx-server --image=registry.cn-hangzhou.aliyuncs.com/zq-demo/nginx:1.14.2 --dry-run=client -oyaml > nginx-server.yaml

## Edit the template file

[root@k8s-master01 ~]# vim nginx-server.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx-server
  name: nginx-server
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-server
  strategy: {}
  template:
    metadata:
      labels:
        app: nginx-server
    spec:
      containers:
      - image: registry.cn-hangzhou.aliyuncs.com/zq-demo/nginx:1.14.2
        name: nginx
        resources:
          requests:
            cpu: 10m
            memory: 128Mi

2. Create the Deployment

[root@k8s-master01 ~]# k create -f nginx-server.yaml

3. Check the pod

[root@k8s-master01 ~]# k get po

NAME READY STATUS RESTARTS AGE

nginx-server-5f44c4b986-4rvjd 1/1 Running 0 14s

2.1.2 Hands-On

1. Create a ScaledObject with a cron trigger

# Write the YAML file

[root@k8s-master01 ~]# vim cron.yaml

apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: cron-scaledobject
  namespace: default
spec:
  scaleTargetRef:
    name: nginx-server
  minReplicaCount: 0
  cooldownPeriod: 300
  triggers:
  - type: cron
    metadata:
      timezone: Asia/Shanghai
      start: 35 12 * * *
      end: 40 12 * * *
      desiredReplicas: "10"

# Apply the YAML file

[root@k8s-master01 ~]# kaf cron.yaml
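For the 07:00 to 09:00 morning peak used as the motivating example at the start of this section, only the `start` and `end` expressions need to change. The sketch below writes such a variant to a hypothetical file `cron-peak.yaml`; the object name `cron-peak-scaledobject` is likewise an assumption, and the remaining fields are copied from the manifest above.

```shell
# Write a cron-trigger ScaledObject for a daily 07:00-09:00 window.
cat > cron-peak.yaml <<'EOF'
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: cron-peak-scaledobject
  namespace: default
spec:
  scaleTargetRef:
    name: nginx-server
  minReplicaCount: 0
  cooldownPeriod: 300
  triggers:
  - type: cron
    metadata:
      timezone: Asia/Shanghai
      # Scale out at 07:00 and back in at 09:00 every day.
      start: 0 7 * * *
      end: 0 9 * * *
      desiredReplicas: "10"
EOF

# Apply it in place of cron.yaml, since a Deployment should be
# targeted by only one ScaledObject at a time:
#   kubectl apply -f cron-peak.yaml
```

The 12:35 to 12:40 window in the walkthrough is simply a short interval that is convenient to observe during a lab session.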

2. Check the resources

# The Deployment created earlier is automatically scaled to 0 replicas

[root@k8s-master01 ~]# kg deploy

NAME READY UP-TO-DATE AVAILABLE AGE

nginx-server 0/0 0 0 9m43s

# List the ScaledObject

[root@k8s-master01 ~]# kg so

NAME SCALETARGETKIND SCALETARGETNAME MIN MAX READY ACTIVE FALLBACK PAUSED TRIGGERS AUTHENTICATIONS AGE

cron-scaledobject apps/v1.Deployment nginx-server 0 True True False Unknown 4m43s

# List the HPA

[root@k8s-master01 ~]# kg hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

keda-hpa-cron-scaledobject Deployment/nginx-server 1/1 (avg) 1 100 10 5m24s

# After 12:35 the scale-out takes place; the Deployment has been scaled to 10 replicas

[root@k8s-master01 ~]# kgp

NAME READY STATUS RESTARTS AGE

nginx-server-5f44c4b986-4pnmv 1/1 Running 0 77s

nginx-server-5f44c4b986-5rlc5 1/1 Running 0 77s

nginx-server-5f44c4b986-729fm 1/1 Running 0 93s

nginx-server-5f44c4b986-76ls4 1/1 Running 0 77s

nginx-server-5f44c4b986-87j9m 1/1 Running 0 92s

nginx-server-5f44c4b986-9bww2 1/1 Running 0 92s

nginx-server-5f44c4b986-gz7pj 1/1 Running 0 62s

nginx-server-5f44c4b986-hg76z 1/1 Running 0 77s

nginx-server-5f44c4b986-jlwjz 1/1 Running 0 92s

nginx-server-5f44c4b986-nkmw6 1/1 Running 0 62s

2.1.3 Cleanup

k delete -f nginx-server.yaml -f cron.yaml

2.2 Autoscaling Based on a RabbitMQ Message Queue

Official documentation: https://keda.sh/docs/2.16/scalers/rabbitmq-queue/

KEDA supports autoscaling driven by message queues, for example RabbitMQ, Kafka, or Redis queues, so that backlogs are processed faster.

2.2.1 Create Test Resources

1. Define YAML files to create a RabbitMQ instance

# Generate the Deployment template file

[root@k8s-master01 ~]# kubectl create deploy rabbitmq --image=registry.cn-beijing.aliyuncs.com/dotbalo/rabbitmq:4.0.5-management-alpine --dry-run=client -oyaml > rabbitmq-deploy.yaml

# Edit the Deployment template file

[root@k8s-master01 ~]# vim rabbitmq-deploy.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  annotations: {}
  labels:
    app: rabbitmq
  name: rabbitmq
spec:
  replicas: 1
  selector:
    matchLabels:
      app: rabbitmq
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 0
    type: RollingUpdate
  template:
    metadata:
      labels:
        app: rabbitmq
    spec:
      containers:
      - env:
        - name: TZ
          value: Asia/Shanghai
        - name: LANG
          value: C.UTF-8
        - name: RABBITMQ_DEFAULT_USER
          value: user
        - name: RABBITMQ_DEFAULT_PASS
          value: password
        image: registry.cn-beijing.aliyuncs.com/dotbalo/rabbitmq:4.0.5-management-alpine
        name: rabbitmq
        imagePullPolicy: IfNotPresent
        livenessProbe:
          failureThreshold: 2
          initialDelaySeconds: 30
          periodSeconds: 10
          successThreshold: 1
          tcpSocket:
            port: 5672
          timeoutSeconds: 2
        ports:
        - containerPort: 5672
          name: web
          protocol: TCP
        readinessProbe:
          failureThreshold: 2
          initialDelaySeconds: 30
          periodSeconds: 10
          successThreshold: 1
          tcpSocket:
            port: 5672
          timeoutSeconds: 2

# Define a Service file

[root@k8s-master01 ~]# vim rabbitmq-svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: rabbitmq
spec:
  selector:
    app: rabbitmq
  sessionAffinity: None
  type: NodePort
  ports:
  - name: web
    port: 5672
    targetPort: 5672
    protocol: TCP
    nodePort: 30672
  - name: http
    port: 15672
    targetPort: 15672
    protocol: TCP
    nodePort: 31672

2. Create the Deployment and Service

[root@k8s-master01 ~]# kaf rabbitmq-deploy.yaml

[root@k8s-master01 ~]# kaf rabbitmq-svc.yaml

3. Check the resources

# List the pods

[root@k8s-master01 ~]# kgp | grep rabbitmq

rabbitmq-5c445844c-5qpff 1/1 Running 0 5m25s

# List the Service

[root@k8s-master01 ~]# kg svc | grep rabbitmq

rabbitmq NodePort 10.109.228.249 <none> 5672:30672/TCP,15672:31672/TCP 2m15

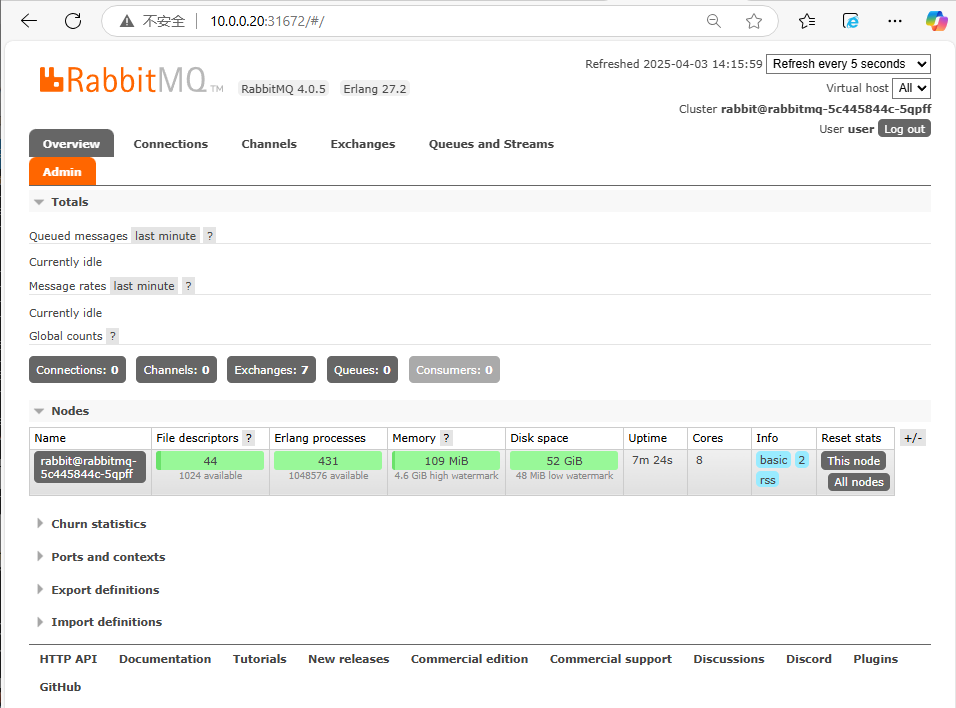

4. Open http://10.0.0.20:31672 in a browser and log in to test access. The username is user and the password is password.

5. Use a Job to simulate a producer that publishes messages

Create a Job

# Define the YAML file

[root@k8s-master01 ~]# vim rabbitmq-publish-job.yaml

apiVersion: batch/v1
kind: Job
metadata:
  name: rabbitmq-publish
spec:
  backoffLimit: 4
  template:
    spec:
      containers:
      - name: rabbitmq-client
        command:
        - "send"
        - "amqp://user:password@rabbitmq.default.svc.cluster.local:5672"
        - "10"
        image: registry.cn-hangzhou.aliyuncs.com/github_images1024/rabbitmq-publish:v1.0
        imagePullPolicy: IfNotPresent
      restartPolicy: Never

# Apply

[root@k8s-master01 ~]# kaf rabbitmq-publish-job.yaml

# Check the resources

[root@k8s-master01 ~]# kgp

NAME READY STATUS RESTARTS AGE

rabbitmq-5c445844c-5qpff 1/1 Running 0 38m

rabbitmq-publish-g2bmx 0/1 Completed 0 3m55s

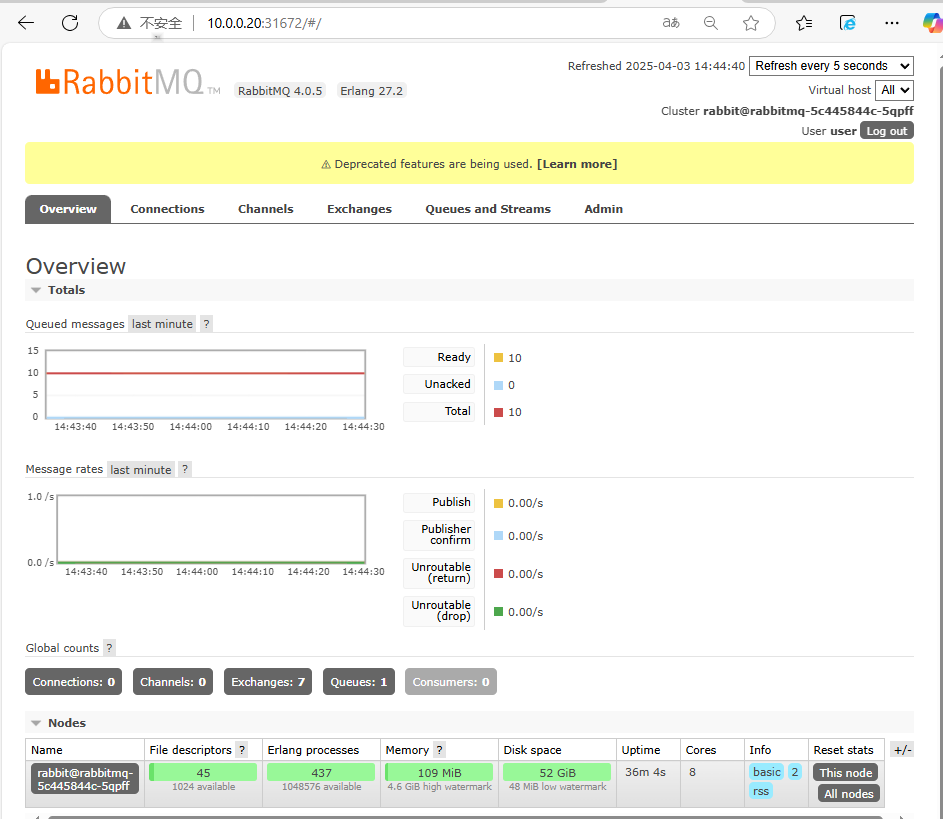

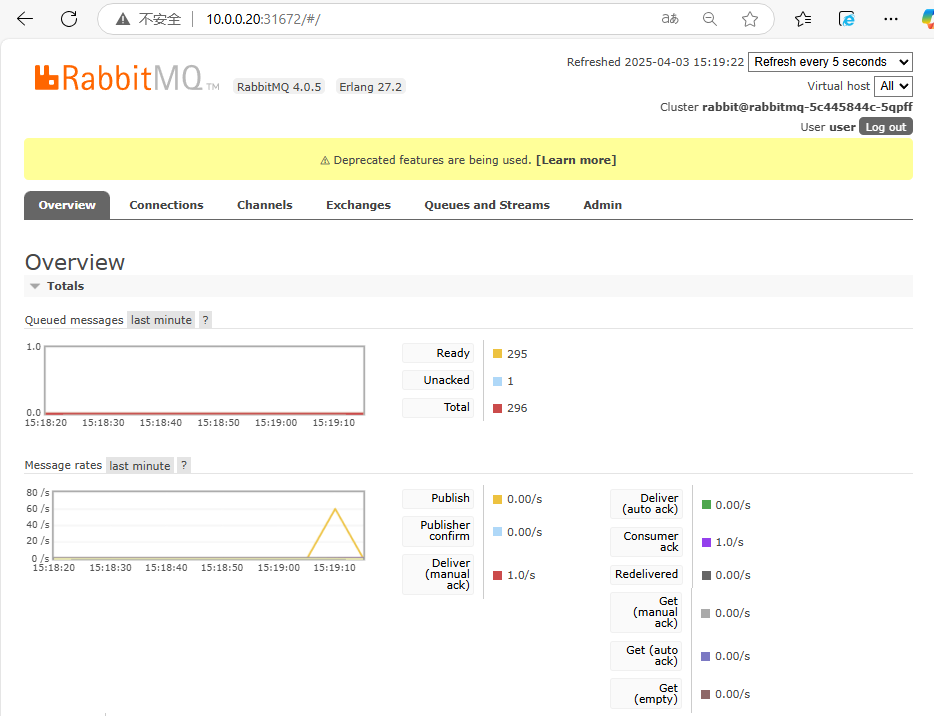

Open http://10.0.0.20:31672 in a browser and log in; the queue shows that 10 messages have been published.

6. Use a Deployment to simulate a consumer that consumes the messages

Create a Deployment

# Generate the template file

[root@k8s-master01 ~]# k create deploy rabbitmq-consumer --image=registry.cn-hangzhou.aliyuncs.com/github_images1024/rabbitmq-consumer:v1.0 --dry-run=client -oyaml > rabbitmq-consumer-deploy.yaml

# Edit the YAML file

[root@k8s-master01 ~]# vim rabbitmq-consumer-deploy.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: rabbitmq-consumer
  name: rabbitmq-consumer
spec:
  replicas: 1
  selector:
    matchLabels:
      app: rabbitmq-consumer
  strategy: {}
  template:
    metadata:
      labels:
        app: rabbitmq-consumer
    spec:
      containers:
      - image: registry.cn-hangzhou.aliyuncs.com/github_images1024/rabbitmq-consumer:v1.0
        name: rabbitmq-consumer
        command:
        - receive
        args:
        - "amqp://user:password@rabbitmq.default.svc.cluster.local:5672"

# Apply

[root@k8s-master01 ~]# kaf rabbitmq-consumer-deploy.yaml

# Check the resources

[root@k8s-master01 ~]# kgp | grep rabbitmq-consumer

rabbitmq-consumer-df797d958-28lpw 1/1 Running 0 96s

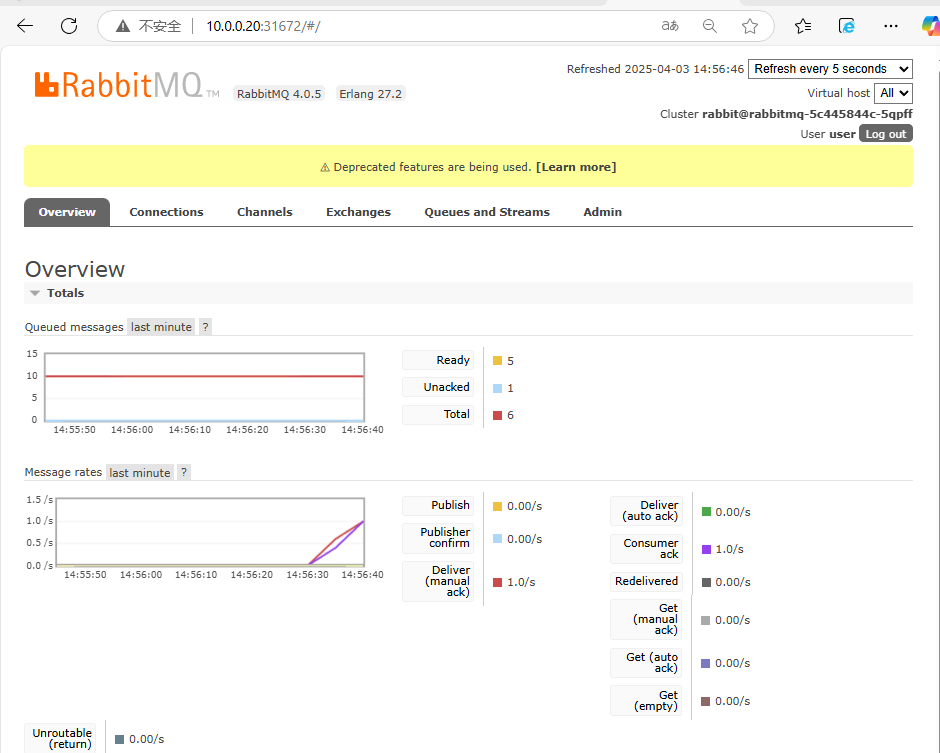

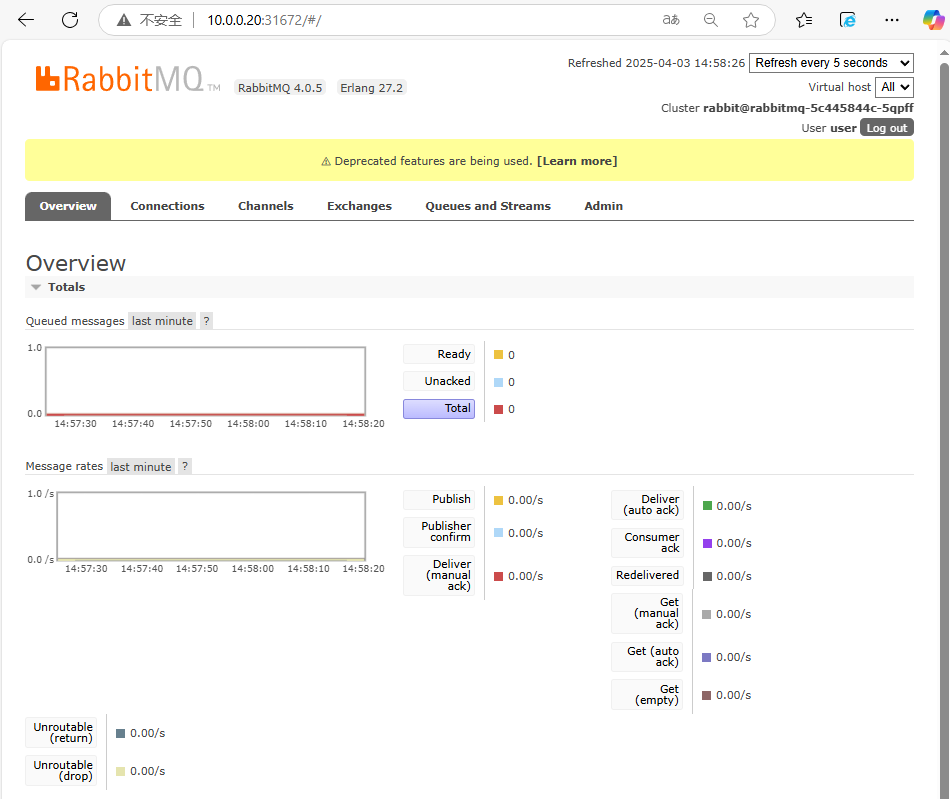

Open http://10.0.0.20:31672 in a browser and log in; the number of queued messages gradually decreases until it reaches 0.

2.2.2 Hands-On

1. Create the TriggerAuthentication and Secret

# Define the resource file

[root@k8s-master01 ~]# vim rabbitmq-ta.yaml

apiVersion: v1
kind: Secret
metadata:
  name: keda-rabbitmq-secret
stringData:
  host: amqp://user:password@rabbitmq:5672
---
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: keda-trigger-auth-rabbitmq-conn
  namespace: default
spec:
  secretTargetRef:
  - parameter: host
    name: keda-rabbitmq-secret
    key: host

# Create the resources

[root@k8s-master01 ~]# kaf rabbitmq-ta.yaml

# Check the resources

[root@k8s-master01 ~]# kg ta

NAME PODIDENTITY SECRET ENV VAULTADDRESS

keda-trigger-auth-rabbitmq-conn keda-rabbitmq-secret

2. Create the ScaledObject

# Define the resource file

[root@k8s-master01 ~]# vim rabbitmq-so.yaml

apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: rabbitmq-scaledobject
  namespace: default
spec:
  scaleTargetRef:
    name: rabbitmq-consumer
  pollingInterval: 5
  cooldownPeriod: 30
  minReplicaCount: 1
  maxReplicaCount: 30
  triggers:
  - type: rabbitmq
    metadata:
      protocol: amqp
      queueName: hello
      mode: QueueLength
      value: "50"
    authenticationRef:
      name: keda-trigger-auth-rabbitmq-conn

# Create the resource

[root@k8s-master01 ~]# kaf rabbitmq-so.yaml

# List the ScaledObject

[root@k8s-master01 ~]# kg so | grep rabbitmq

rabbitmq-scaledobject apps/v1.Deployment rabbitmq-consumer 1 30 False Unknown False Unknown 27m

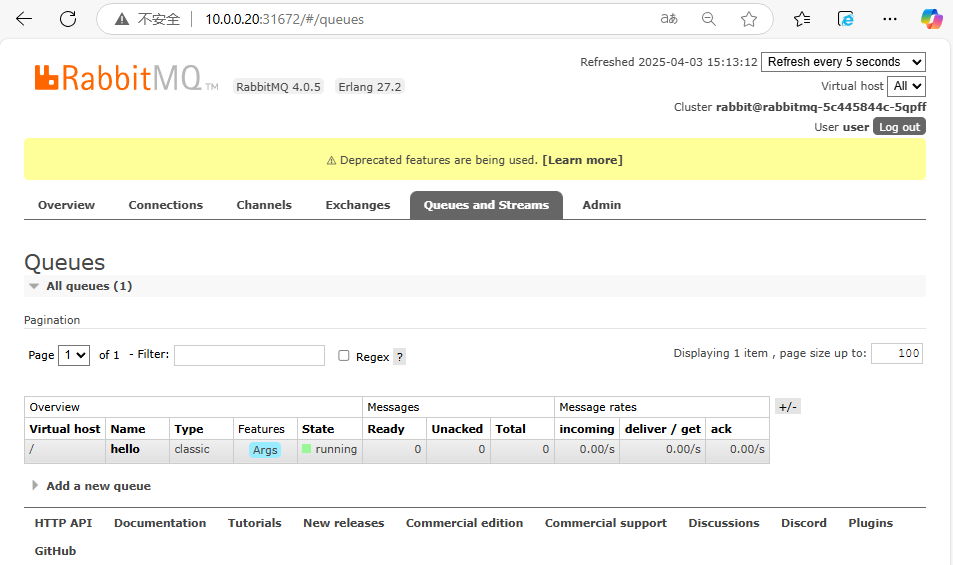

Note: set queueName above to match the queue used in your own environment.

3. Change the number of messages in the Job to 300

# Change the message count from the original 10 to 300

[root@k8s-master01 ~]# vim rabbitmq-publish-job.yaml

apiVersion: batch/v1
kind: Job
metadata:
  name: rabbitmq-publish
spec:
  backoffLimit: 4
  template:
    spec:
      containers:
      - name: rabbitmq-client
        command:
        - "send"
        - "amqp://user:password@rabbitmq.default.svc.cluster.local:5672"
        - "300"
        image: registry.cn-hangzhou.aliyuncs.com/github_images1024/rabbitmq-publish:v1.0
        imagePullPolicy: IfNotPresent
      restartPolicy: Never

# Recreate the Job

[root@k8s-master01 ~]# k delete -f rabbitmq-publish-job.yaml ; kaf rabbitmq-publish-job.yaml

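4. Check the resources again. Because 300 messages were published and the scaling target is 50 messages per replica, the consumer Deployment scales out to 6 pods (300 / 50 = 6).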

[root@k8s-master01 ~]# kgp
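The figure of 6 follows from a simple rule: divide the queue length by the per-replica target, round up, and clamp the result to the configured minimum and maximum. The helper below, a hypothetical `desired_replicas` function, is only a simplified sketch of that arithmetic; it ignores the HPA's tolerance and stabilization behaviour, and the queue also drains while scaling happens, so observed pod counts may differ.

```shell
# desired_replicas QUEUE_LENGTH TARGET_PER_REPLICA MIN MAX
desired_replicas() {
  # Integer ceiling of QUEUE_LENGTH / TARGET_PER_REPLICA.
  n=$(( ($1 + $2 - 1) / $2 ))
  # Clamp to the [MIN, MAX] range from the ScaledObject.
  if [ "$n" -lt "$3" ]; then n=$3; fi
  if [ "$n" -gt "$4" ]; then n=$4; fi
  echo "$n"
}

# Values from this walkthrough: 300 messages, target 50, min 1, max 30.
desired_replicas 300 50 1 30   # prints 6
```

With the earlier run of 10 messages the same rule yields 1, which is why no scale-out was visible before the message count was raised.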

2.2.3 Cleanup

k delete -f rabbitmq-deploy.yaml -f rabbitmq-svc.yaml -f rabbitmq-publish-job.yaml -f rabbitmq-consumer-deploy.yaml -f rabbitmq-ta.yaml -f rabbitmq-so.yaml

2.3 Autoscaling Based on MySQL Data Changes

2.3.1 Create Test Resources

1. Create a MySQL instance

# Create the MySQL Deployment

[root@k8s-master01 ~]# k create deploy mysql --image=registry.cn-hangzhou.aliyuncs.com/abroad_images/mysql:8.0.20

# Set the MySQL environment variable

[root@k8s-master01 ~]# k set env deploy mysql MYSQL_ROOT_PASSWORD=password

# Expose the service

[root@k8s-master01 ~]# k expose deploy mysql --port 3306

# Check the created resources

[root@k8s-master01 ~]# kgp | grep mysql

mysql-669d986df7-vvncb 1/1 Running 0 3m53s

[root@k8s-master01 ~]# kg svc | grep mysql

mysql ClusterIP 10.109.131.136 <none> 3306/TCP 3m20s

2. Create the test database and table

[root@k8s-master01 ~]# k exec -it mysql-669d986df7-vvncb -- bash

root@mysql-669d986df7-vvncb:/# mysql -uroot -hmysql -p

Enter password: password

mysql> create database dukuan;

mysql> use dukuan;

mysql> CREATE TABLE orders (
    id INT AUTO_INCREMENT PRIMARY KEY,
    customer_name VARCHAR(100),
    order_amount DECIMAL(10,2),
    status ENUM('pending', 'processed') DEFAULT 'pending',
    created_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP
);

3. Run a program that simulates writing data

# Create the resource

[root@k8s-master01 ~]# k create job insert-orders-job --image=registry.cn-hangzhou.aliyuncs.com/abroad_images/mysql:insert

# Check the resource

[root@k8s-master01 ~]# kgp | grep insert-orders-job

insert-orders-job-t9fps 0/1 Completed 0 29s

# Log back in to the database and inspect the inserted rows; the status column contains both processed and pending values

[root@k8s-master01 ~]# k exec -it mysql-669d986df7-vvncb -- bash

root@mysql-669d986df7-vvncb:/# mysql -uroot -hmysql -p

Enter password: password

mysql> select * from dukuan.orders;

+-----+---------------+--------------+-----------+---------------------+

| id | customer_name | order_amount | status | created_at |

+-----+---------------+--------------+-----------+---------------------+

| 1 | Frank | 444.00 | processed | 2025-04-03 08:17:41 |

| 2 | Eve | 444.00 | pending | 2025-04-03 08:17:41 |

| 3 | Bob | 444.01 | processed | 2025-04-03 08:17:41 |

| 4 | Frank | 444.00 | pending | 2025-04-03 08:17:41 |

| 5 | Eve | 444.01 | processed | 2025-04-03 08:17:41 |

| 6 | Frank | 444.01 | processed | 2025-04-03 08:17:41 |

| 7 | Bob | 444.00 | pending | 2025-04-03 08:17:41 |

| 8 | Bob | 444.01 | pending | 2025-04-03 08:17:42 |

| 9 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 10 | Grace | 575.01 | pending | 2025-04-03 08:17:42 |

| 11 | David | 575.01 | processed | 2025-04-03 08:17:42 |

| 12 | Frank | 575.01 | pending | 2025-04-03 08:17:42 |

| 13 | Judy | 575.01 | processed | 2025-04-03 08:17:42 |

| 14 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 15 | Alice | 575.00 | pending | 2025-04-03 08:17:42 |

| 16 | Bob | 575.00 | pending | 2025-04-03 08:17:42 |

| 17 | Eve | 575.01 | pending | 2025-04-03 08:17:42 |

| 18 | Alice | 575.01 | processed | 2025-04-03 08:17:42 |

| 19 | Grace | 575.01 | pending | 2025-04-03 08:17:42 |

| 20 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 21 | Alice | 575.00 | pending | 2025-04-03 08:17:42 |

| 22 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 23 | Grace | 575.00 | pending | 2025-04-03 08:17:42 |

| 24 | Alice | 575.00 | pending | 2025-04-03 08:17:42 |

| 25 | Bob | 575.01 | pending | 2025-04-03 08:17:42 |

| 26 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 27 | Frank | 575.00 | processed | 2025-04-03 08:17:42 |

| 28 | Frank | 575.00 | processed | 2025-04-03 08:17:42 |

| 29 | Judy | 575.01 | pending | 2025-04-03 08:17:42 |

| 30 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 31 | Grace | 575.00 | processed | 2025-04-03 08:17:42 |

| 32 | David | 575.01 | pending | 2025-04-03 08:17:42 |

| 33 | Judy | 575.00 | pending | 2025-04-03 08:17:42 |

| 34 | Judy | 575.00 | processed | 2025-04-03 08:17:42 |

| 35 | David | 575.01 | processed | 2025-04-03 08:17:42 |

| 36 | Bob | 575.01 | pending | 2025-04-03 08:17:42 |

| 37 | Charlie | 575.00 | pending | 2025-04-03 08:17:42 |

| 38 | Frank | 575.00 | pending | 2025-04-03 08:17:42 |

| 39 | Grace | 575.00 | pending | 2025-04-03 08:17:42 |

| 40 | Eve | 575.01 | pending | 2025-04-03 08:17:42 |

| 41 | David | 575.00 | pending | 2025-04-03 08:17:42 |

| 42 | Charlie | 575.01 | processed | 2025-04-03 08:17:42 |

| 43 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 44 | Grace | 575.00 | pending | 2025-04-03 08:17:42 |

| 45 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 46 | Frank | 575.01 | pending | 2025-04-03 08:17:42 |

| 47 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 48 | Eve | 575.00 | pending | 2025-04-03 08:17:42 |

| 49 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 50 | Frank | 575.01 | processed | 2025-04-03 08:17:42 |

| 51 | Bob | 575.01 | pending | 2025-04-03 08:17:42 |

| 52 | Heidi | 575.01 | processed | 2025-04-03 08:17:42 |

| 53 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 54 | David | 575.01 | processed | 2025-04-03 08:17:42 |

| 55 | Grace | 575.01 | pending | 2025-04-03 08:17:42 |

| 56 | Judy | 575.01 | processed | 2025-04-03 08:17:42 |

| 57 | Eve | 575.00 | pending | 2025-04-03 08:17:42 |

| 58 | David | 575.00 | pending | 2025-04-03 08:17:42 |

| 59 | Bob | 575.01 | processed | 2025-04-03 08:17:42 |

| 60 | Judy | 575.01 | pending | 2025-04-03 08:17:42 |

| 61 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 62 | Eve | 575.00 | pending | 2025-04-03 08:17:42 |

| 63 | David | 575.01 | pending | 2025-04-03 08:17:42 |

| 64 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 65 | Charlie | 575.01 | processed | 2025-04-03 08:17:42 |

| 66 | Judy | 575.00 | processed | 2025-04-03 08:17:42 |

| 67 | Charlie | 575.00 | pending | 2025-04-03 08:17:42 |

| 68 | Judy | 575.01 | pending | 2025-04-03 08:17:42 |

| 69 | Bob | 575.00 | pending | 2025-04-03 08:17:42 |

| 70 | Bob | 575.00 | pending | 2025-04-03 08:17:42 |

| 71 | Charlie | 575.00 | processed | 2025-04-03 08:17:42 |

| 72 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 73 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 74 | Charlie | 575.00 | processed | 2025-04-03 08:17:42 |

| 75 | Bob | 575.00 | pending | 2025-04-03 08:17:42 |

| 76 | Charlie | 575.00 | pending | 2025-04-03 08:17:42 |

| 77 | Heidi | 575.01 | processed | 2025-04-03 08:17:42 |

| 78 | Eve | 575.01 | pending | 2025-04-03 08:17:42 |

| 79 | Charlie | 575.00 | pending | 2025-04-03 08:17:42 |

| 80 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 81 | Frank | 575.01 | processed | 2025-04-03 08:17:42 |

| 82 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 83 | Frank | 575.00 | processed | 2025-04-03 08:17:42 |

| 84 | David | 575.00 | pending | 2025-04-03 08:17:42 |

| 85 | David | 575.00 | pending | 2025-04-03 08:17:42 |

| 86 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 87 | Alice | 575.00 | pending | 2025-04-03 08:17:42 |

| 88 | Frank | 575.00 | processed | 2025-04-03 08:17:42 |

| 89 | Judy | 575.00 | pending | 2025-04-03 08:17:42 |

| 90 | Eve | 575.01 | pending | 2025-04-03 08:17:42 |

| 91 | Heidi | 575.01 | pending | 2025-04-03 08:17:42 |

| 92 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 93 | Ivan | 575.01 | processed | 2025-04-03 08:17:42 |

| 94 | Alice | 575.01 | processed | 2025-04-03 08:17:42 |

| 95 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 96 | Bob | 575.00 | processed | 2025-04-03 08:17:42 |

| 97 | Ivan | 575.00 | pending | 2025-04-03 08:17:42 |

| 98 | Bob | 575.00 | pending | 2025-04-03 08:17:42 |

| 99 | Grace | 575.00 | pending | 2025-04-03 08:17:42 |

| 100 | Ivan | 575.01 | pending | 2025-04-03 08:17:42 |

+-----+---------------+--------------+-----------+---------------------+

100 rows in set (0.00 sec)
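Scanning 100 rows by eye is tedious, and a grouped count gives the same information at a glance. The sketch below assumes the `mysql` Deployment and the root password set earlier in this section; the function names `status_count_query` and `count_orders_by_status` are hypothetical helpers introduced only for illustration.

```shell
# Build the SQL that counts rows per status for DATABASE.TABLE.
status_count_query() {
  printf 'SELECT status, COUNT(*) FROM %s.%s GROUP BY status;' "$1" "$2"
}

# Run the query inside the MySQL pod (requires access to the cluster).
count_orders_by_status() {
  kubectl exec deploy/mysql -- \
    mysql -uroot -ppassword -e "$(status_count_query dukuan orders)"
}

# Usage:
#   count_orders_by_status
```

Running the same check again after the processing program has started should show the pending count falling as rows are marked processed.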

4. Create the processing program

# Create the resource

[root@k8s-master01 ~]# k create deploy update-orders --image=registry.cn-hangzhou.aliyuncs.com/abroad_images/mysql:process

# Check the resource

[root@k8s-master01 ~]# kgp | grep update-orders

update-orders-bf567b97c-66d27 1/1 Running 0 48s

# Query the database again; every value in the status column is now processed

[root@k8s-master01 ~]# k exec -it mysql-669d986df7-vvncb -- bash

root@mysql-669d986df7-vvncb:/# mysql -uroot -hmysql -p

Enter password: password

mysql> select * from dukuan.orders;

+-----+---------------+--------------+-----------+---------------------+

| id | customer_name | order_amount | status | created_at |

+-----+---------------+--------------+-----------+---------------------+

| 1 | Frank | 444.00 | processed | 2025-04-03 08:17:41 |

| 2 | Eve | 444.00 | processed | 2025-04-03 08:17:41 |

| 3 | Bob | 444.01 | processed | 2025-04-03 08:17:41 |

| 4 | Frank | 444.00 | processed | 2025-04-03 08:17:41 |

| 5 | Eve | 444.01 | processed | 2025-04-03 08:17:41 |

| 6 | Frank | 444.01 | processed | 2025-04-03 08:17:41 |

| 7 | Bob | 444.00 | processed | 2025-04-03 08:17:41 |

| 8 | Bob | 444.01 | processed | 2025-04-03 08:17:42 |

| 9 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 10 | Grace | 575.01 | processed | 2025-04-03 08:17:42 |

| 11 | David | 575.01 | processed | 2025-04-03 08:17:42 |

| 12 | Frank | 575.01 | processed | 2025-04-03 08:17:42 |

| 13 | Judy | 575.01 | processed | 2025-04-03 08:17:42 |

| 14 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 15 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 16 | Bob | 575.00 | processed | 2025-04-03 08:17:42 |

| 17 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 18 | Alice | 575.01 | processed | 2025-04-03 08:17:42 |

| 19 | Grace | 575.01 | processed | 2025-04-03 08:17:42 |

| 20 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 21 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 22 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 23 | Grace | 575.00 | processed | 2025-04-03 08:17:42 |

| 24 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 25 | Bob | 575.01 | processed | 2025-04-03 08:17:42 |

| 26 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 27 | Frank | 575.00 | processed | 2025-04-03 08:17:42 |

| 28 | Frank | 575.00 | processed | 2025-04-03 08:17:42 |

| 29 | Judy | 575.01 | processed | 2025-04-03 08:17:42 |

| 30 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 31 | Grace | 575.00 | processed | 2025-04-03 08:17:42 |

| 32 | David | 575.01 | processed | 2025-04-03 08:17:42 |

| 33 | Judy | 575.00 | processed | 2025-04-03 08:17:42 |

| 34 | Judy | 575.00 | processed | 2025-04-03 08:17:42 |

| 35 | David | 575.01 | processed | 2025-04-03 08:17:42 |

| 36 | Bob | 575.01 | processed | 2025-04-03 08:17:42 |

| 37 | Charlie | 575.00 | processed | 2025-04-03 08:17:42 |

| 38 | Frank | 575.00 | processed | 2025-04-03 08:17:42 |

| 39 | Grace | 575.00 | processed | 2025-04-03 08:17:42 |

| 40 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 41 | David | 575.00 | processed | 2025-04-03 08:17:42 |

| 42 | Charlie | 575.01 | processed | 2025-04-03 08:17:42 |

| 43 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 44 | Grace | 575.00 | processed | 2025-04-03 08:17:42 |

| 45 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 46 | Frank | 575.01 | processed | 2025-04-03 08:17:42 |

| 47 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 48 | Eve | 575.00 | processed | 2025-04-03 08:17:42 |

| 49 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 50 | Frank | 575.01 | processed | 2025-04-03 08:17:42 |

| 51 | Bob | 575.01 | processed | 2025-04-03 08:17:42 |

| 52 | Heidi | 575.01 | processed | 2025-04-03 08:17:42 |

| 53 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 54 | David | 575.01 | processed | 2025-04-03 08:17:42 |

| 55 | Grace | 575.01 | processed | 2025-04-03 08:17:42 |

| 56 | Judy | 575.01 | processed | 2025-04-03 08:17:42 |

| 57 | Eve | 575.00 | processed | 2025-04-03 08:17:42 |

| 58 | David | 575.00 | processed | 2025-04-03 08:17:42 |

| 59 | Bob | 575.01 | processed | 2025-04-03 08:17:42 |

| 60 | Judy | 575.01 | processed | 2025-04-03 08:17:42 |

| 61 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 62 | Eve | 575.00 | processed | 2025-04-03 08:17:42 |

| 63 | David | 575.01 | processed | 2025-04-03 08:17:42 |

| 64 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 65 | Charlie | 575.01 | processed | 2025-04-03 08:17:42 |

| 66 | Judy | 575.00 | processed | 2025-04-03 08:17:42 |

| 67 | Charlie | 575.00 | processed | 2025-04-03 08:17:42 |

| 68 | Judy | 575.01 | processed | 2025-04-03 08:17:42 |

| 69 | Bob | 575.00 | processed | 2025-04-03 08:17:42 |

| 70 | Bob | 575.00 | processed | 2025-04-03 08:17:42 |

| 71 | Charlie | 575.00 | processed | 2025-04-03 08:17:42 |

| 72 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 73 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 74 | Charlie | 575.00 | processed | 2025-04-03 08:17:42 |

| 75 | Bob | 575.00 | processed | 2025-04-03 08:17:42 |

| 76 | Charlie | 575.00 | processed | 2025-04-03 08:17:42 |

| 77 | Heidi | 575.01 | processed | 2025-04-03 08:17:42 |

| 78 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 79 | Charlie | 575.00 | processed | 2025-04-03 08:17:42 |

| 80 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 81 | Frank | 575.01 | processed | 2025-04-03 08:17:42 |

| 82 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 83 | Frank | 575.00 | processed | 2025-04-03 08:17:42 |

| 84 | David | 575.00 | processed | 2025-04-03 08:17:42 |

| 85 | David | 575.00 | processed | 2025-04-03 08:17:42 |

| 86 | Heidi | 575.00 | processed | 2025-04-03 08:17:42 |

| 87 | Alice | 575.00 | processed | 2025-04-03 08:17:42 |

| 88 | Frank | 575.00 | processed | 2025-04-03 08:17:42 |

| 89 | Judy | 575.00 | processed | 2025-04-03 08:17:42 |

| 90 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 91 | Heidi | 575.01 | processed | 2025-04-03 08:17:42 |

| 92 | Eve | 575.01 | processed | 2025-04-03 08:17:42 |

| 93 | Ivan | 575.01 | processed | 2025-04-03 08:17:42 |

| 94 | Alice | 575.01 | processed | 2025-04-03 08:17:42 |

| 95 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 96 | Bob | 575.00 | processed | 2025-04-03 08:17:42 |

| 97 | Ivan | 575.00 | processed | 2025-04-03 08:17:42 |

| 98 | Bob | 575.00 | processed | 2025-04-03 08:17:42 |

| 99 | Grace | 575.00 | processed | 2025-04-03 08:17:42 |

| 100 | Ivan | 575.01 | processed | 2025-04-03 08:17:42 |

+-----+---------------+--------------+-----------+---------------------+

100 rows in set (0.00 sec)

# Scale the processing Deployment down to 0 replicas

[root@k8s-master01 ~]# k scale deploy update-orders --replicas=0

# Verify the result

[root@k8s-master01 ~]# kg deploy update-orders

NAME READY UP-TO-DATE AVAILABLE AGE

update-orders 0/0 0 0 6m2s

2.6.3.2 Hands-on walkthrough¶

1. Create the Secret and the TriggerAuthentication

# Define the resource file

[root@k8s-master01 ~]# vim mysql-ta.yaml

apiVersion: v1
kind: Secret
metadata:
  name: keda-mysql-secret
stringData:
  mysql_conn_str: root:password@tcp(mysql:3306)/dukuan
---
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: keda-trigger-auth-mysql-conn
spec:
  secretTargetRef:
    - parameter: connectionString
      name: keda-mysql-secret
      key: mysql_conn_str

# Create the resources

[root@k8s-master01 ~]# kaf mysql-ta.yaml

# View the resources

[root@k8s-master01 ~]# kg -f mysql-ta.yaml

NAME TYPE DATA AGE

secret/keda-mysql-secret Opaque 1 11s

NAME PODIDENTITY SECRET ENV VAULTADDRESS

triggerauthentication.keda.sh/keda-trigger-auth-mysql-conn keda-mysql-secret
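
The `mysql_conn_str` value stored in the Secret appears to follow the DSN layout used by the Go MySQL driver: `user:password@tcp(host:port)/database`. As a minimal sketch, the hypothetical helper `mysql_dsn` below (it is not part of KEDA or kubectl) assembles such a string from its parts. It assumes that no field contains characters that would need escaping.

```shell
# Hypothetical helper: build a connection string in the
# user:password@tcp(host:port)/database layout used in mysql-ta.yaml.
# Assumption: no field contains characters that need escaping.
mysql_dsn() {
  user=$1; pass=$2; host=$3; port=$4; db=$5
  printf '%s:%s@tcp(%s:%s)/%s\n' "$user" "$pass" "$host" "$port" "$db"
}

# Values taken from the Secret in mysql-ta.yaml.
mysql_dsn root password mysql 3306 dukuan
```

Here `mysql` is the Service name of the MySQL instance and `dukuan` is the database name, so a different Service name or namespace would change the host part only.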

2. Create the ScaledObject

# Define the resource file

[root@k8s-master01 ~]# vim mysql-so.yaml

apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: mysql-scaledobject
spec:
  scaleTargetRef:
    name: update-orders
  pollingInterval: 5
  cooldownPeriod: 30
  minReplicaCount: 0
  maxReplicaCount: 5
  triggers:
    - type: mysql
      metadata:
        queryValue: "4.4"
        query: "SELECT COUNT(*) FROM orders WHERE status='pending'"
      authenticationRef:
        name: keda-trigger-auth-mysql-conn

# Create the resource

[root@k8s-master01 ~]# kaf mysql-so.yaml

# View the ScaledObject

[root@k8s-master01 ~]# kg so | grep mysql

mysql-scaledobject apps/v1.Deployment update-orders 0 5 False Unknown False Unknown 4m46s
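
For a rough feel of how `queryValue` drives the replica count: KEDA exposes the query result to a Horizontal Pod Autoscaler as an average-value target, so the desired replica count is approximately the query result divided by `queryValue`, rounded up, and bounded by `minReplicaCount` and `maxReplicaCount`. The sketch below is a simplified model under that assumption. It ignores HPA tolerance, stabilization windows, and `cooldownPeriod`, and `desired_replicas` is an illustrative name, not a KEDA command.

```shell
# Simplified model of the replica count for the ScaledObject.
# $1 = query result (pending orders), $2 = queryValue,
# $3 = minReplicaCount, $4 = maxReplicaCount
desired_replicas() {
  awk -v m="$1" -v t="$2" -v lo="$3" -v hi="$4" 'BEGIN {
    if (m <= 0) { print lo; exit }        # nothing pending: fall back to the minimum
    n = int(m / t); if (n * t < m) n++    # round up
    if (n < 1)  n = 1                     # an active trigger needs at least one Pod
    if (n < lo) n = lo
    if (n > hi) n = hi
    print n
  }'
}

desired_replicas 100 4.4 0 5   # 100 pending orders: capped at maxReplicaCount
desired_replicas 9 4.4 0 5     # 9 pending orders: 9 / 4.4 rounds up to 3
```

With the values from `mysql-so.yaml`, any backlog larger than about 18 pending rows already asks for the maximum of 5 replicas.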

3. Insert data again

[root@k8s-master01 ~]# k create job insert-orders-job2 --image=registry.cn-hangzhou.aliyuncs.com/abroad_images/mysql:insert

4. Wait about 30 seconds, then list the Pods; the update-orders Deployment has been scaled out

[root@k8s-master01 ~]# kgp
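
Instead of waiting a fixed 30 seconds, the check can be polled. The following is a minimal sketch, assuming `kubectl` is on the PATH and pointed at the same cluster and namespace; `wait_for_ready` is a hypothetical helper name, and the retry count and delay are arbitrary defaults.

```shell
# Hypothetical helper: poll a Deployment until it reports at least
# $2 ready replicas, trying $3 times with $4 seconds between attempts.
wait_for_ready() {
  deploy=$1; want=$2; tries=${3:-12}; delay=${4:-5}
  i=0
  while [ "$i" -lt "$tries" ]; do
    # .status.readyReplicas is omitted while no replica is ready, hence the :-0 default
    ready=$(kubectl get deploy "$deploy" -o jsonpath='{.status.readyReplicas}')
    if [ "${ready:-0}" -ge "$want" ]; then
      echo "ready=$ready"
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "timed out waiting for $deploy" >&2
  return 1
}

# Example call (not executed here): wait_for_ready update-orders 1
```

Once the pending orders have been processed, the Deployment is expected to drop back to 0 replicas after `cooldownPeriod`, so the helper is only meaningful while work is still queued.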

2.6.3.3 Environment cleanup¶

k delete deploy mysql update-orders

k delete svc mysql

k delete job insert-orders-job insert-orders-job2

k delete -f mysql-ta.yaml -f mysql-so.yaml

2.6.4 Processing tasks with ScaledJob and cleaning up the environment¶

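KEDA's ScaledJob runs one-off or short-lived tasks as Kubernetes Jobs, which suits batch-style processing of data such as images or videos.

Suppose data has to be fetched from a Redis queue and then processed; a ScaledJob can handle exactly this case.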

2.6.4.1 Create test resources¶

Create a Redis instance

# Add the Helm repo

[root@k8s-master01 ~]# helm repo add bitnami https://charts.bitnami.com/bitnami

# Verify that the repo was added

[root@k8s-master01 ~]# helm repo list | grep bitnami

bitnami https://charts.bitnami.com/bitnami

# Install the Redis instance

[root@k8s-master01 ~]# helm upgrade --install redis bitnami/redis --version 20.1.6 --set global.imageRegistry=docker.kubeasy.com --set architecture=standalone --set persistence.enabled=false --set master.persistence.enabled=false --set auth.password=dukuan

# Verify the Redis instance

[root@k8s-master01 ~]# kgp | grep redis

redis-master-0 1/1 Running 0 3m8s

2.6.4.2 Hands-on walkthrough¶

1. Create the Secret and the TriggerAuthentication

# Define the resource file

[root@k8s-master01 ~]# vim redis-ta.yaml

apiVersion: v1
kind: Secret
metadata:
  name: keda-redis-secret
stringData:
  redis_username: ""
  redis_password: "dukuan"
---
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: keda-trigger-auth-redis-conn
spec:
  secretTargetRef:
    - parameter: username
      name: keda-redis-secret
      key: redis_username
    - parameter: password
      name: keda-redis-secret
      key: redis_password

# Create the resources

[root@k8s-master01 ~]# kaf redis-ta.yaml

# View the resources

[root@k8s-master01 ~]# kg -f redis-ta.yaml

NAME TYPE DATA AGE

secret/keda-redis-secret Opaque 2 43s

NAME PODIDENTITY SECRET ENV VAULTADDRESS

triggerauthentication.keda.sh/keda-trigger-auth-redis-conn keda-redis-secret

2. Write test data

# Push three items onto the list

[root@k8s-master01 ~]# k exec -it redis-master-0 -- bash

I have no name!@redis-master-0:/$ redis-cli -h redis-master -a dukuan

redis-master:6379> LPUSH test_list "t1" "t2" "t3"

(integer) 3

# View all items

redis-master:6379> LRANGE test_list 0 -1

1) "t3"

2) "t2"

3) "t1"
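
The ordering in this output follows from Redis list semantics: `LPUSH` inserts each value at the head, so the most recently pushed value is listed first, `RPOP` removes the oldest value, and `LPOP` removes the newest. The sketch below imitates that behaviour with a plain shell string so it can be tried without a Redis server. The function names mirror the Redis commands but are local stand-ins, and values are assumed to contain no spaces.

```shell
list=""     # space-separated; the first word is the head (left end)
popped=""   # holds the value removed by the last pop

lpush() {   # LPUSH: insert each value at the head, one at a time
  for v in "$@"; do list="$v${list:+ $list}"; done
}
lpop() {    # LPOP: remove the head (most recently pushed value)
  popped="${list%% *}"
  case "$list" in *" "*) list="${list#* }" ;; *) list="" ;; esac
}
rpop() {    # RPOP: remove the tail (oldest value)
  popped="${list##* }"
  case "$list" in *" "*) list="${list% *}" ;; *) list="" ;; esac
}
llen() { set -- $list; echo "$#"; }

lpush t1 t2 t3
echo "$list"            # t3 t2 t1
rpop; echo "$popped"    # t1
lpop; echo "$popped"    # t3
llen                    # 1
```

The remaining length is what matters for scaling, because the KEDA Redis list trigger compares the list length with `listLength`.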

# Read (pop) items

redis-master:6379> RPOP test_list

"t1"

redis-master:6379> LPOP test_list

"t3"

2. Create a ScaledJob that watches the list

# Define the resource file

[root@k8s-master01 ~]# vim redis-sj.yaml

apiVersion: keda.sh/v1alpha1
kind: ScaledJob
metadata:
  name: redis-queue-scaledjob
spec:
  jobTargetRef:
    parallelism: 1
    completions: 1
    backoffLimit: 4
    template:
      spec:
        containers:
          - name: redis-queue-consumer
            image: registry.cn-hangzhou.aliyuncs.com/abroad_images/redis:process
  pollingInterval: 30
  successfulJobsHistoryLimit: 3
  failedJobsHistoryLimit: 3
  maxReplicaCount: 5
  triggers:
    - type: redis
      metadata:
        address: redis-master.default.svc.cluster.local:6379
        listName: test_list
        listLength: '5'
      authenticationRef:
        name: keda-trigger-auth-redis-conn

# Create the resource

[root@k8s-master01 ~]# kaf redis-sj.yaml

# View the ScaledJob

[root@k8s-master01 ~]# kg sj | grep redis

redis-queue-scaledjob 5 True False Unknown 4m59s
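
As a rough guide to how many Jobs a single poll can start: under KEDA's default scaling strategy, the number of new Jobs is approximately the list length divided by `listLength`, rounded up, capped at `maxReplicaCount`, minus the Jobs already running. The sketch below models only that arithmetic. `jobs_to_create` is a hypothetical name, and the real controller takes more inputs into account (for example pending Jobs and alternative strategies).

```shell
# Simplified model of the default ScaledJob scaling strategy.
# $1 = current list length, $2 = listLength target,
# $3 = maxReplicaCount,     $4 = Jobs already running
jobs_to_create() {
  queue_len=$1; target=$2; max_replicas=$3; running=$4
  max_scale=$(( (queue_len + target - 1) / target ))   # integer round-up
  if [ "$max_scale" -gt "$max_replicas" ]; then max_scale=$max_replicas; fi
  new_jobs=$(( max_scale - running ))
  if [ "$new_jobs" -lt 0 ]; then new_jobs=0; fi
  echo "$new_jobs"
}

jobs_to_create 21 5 5 0   # 21 items, none running: 5 Jobs
jobs_to_create 3 5 5 0    # 3 items: 1 Job
jobs_to_create 12 5 5 2   # 12 items need 3 Jobs, 2 already running: 1 more
```

Because `pollingInterval` is 30 seconds here, the count observed in practice depends on how many items are in the list at the moment of each poll.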

3. Write more test data

[root@k8s-master01 ~]# k exec -it redis-master-0 -- bash

I have no name!@redis-master-0:/$ redis-cli -h redis-master -a dukuan

redis-master:6379> LPUSH test_list "t1" "t2" "t3"

redis-master:6379> LPUSH test_list "t1" "t2" "t3"

redis-master:6379> LPUSH test_list "t1" "t2" "t3"

redis-master:6379> LPUSH test_list "t1" "t2" "t3"

redis-master:6379> LPUSH test_list "t1" "t2" "t3"

redis-master:6379> LPUSH test_list "t1" "t2" "t3"

redis-master:6379> LPUSH test_list "t1" "t2" "t3"

4. List the Pods created by the ScaledJob; Jobs have been started to process the list

[root@k8s-master01 ~]# kgp | grep redis-queue-scaledjob

redis-queue-scaledjob-ltmds-5st29 0/1 Completed 0 102s

redis-queue-scaledjob-lz5jn-mfpbq 0/1 Completed 0 102s

redis-queue-scaledjob-mj57n-lffsj 0/1 Completed 0 102s

2.6.4.3 Environment cleanup¶

# Uninstall Redis

[root@k8s-master01 ~]# helm uninstall redis

# Delete the Secret, TriggerAuthentication, and ScaledJob

[root@k8s-master01 ~]# kubectl delete -f redis-ta.yaml -f redis-sj.yaml
